Category: Data Center

Data Center Interconnect (DCI) encryption

Brad sent me an interesting DCI encryption question a while ago. Our discussion started with:

We have a pair of 10GbE links between our data centers. We talked to a hardware encryption vendor who told us our L3 EIGRP DCI could not be used and we would have to convert it to a pure Layer 2 link. This doesn't make sense to me as our hand-off into the carrier network is 10GbE; couldn't we just insert the Ethernet encryptor as a "transparent" device connected to our routed port?

The whole thing obviously started as a layering confusion. Brad is routing traffic between his data centers (the long-distance vMotion demon hasn’t visited his server admins yet), so he’s talking about L3 DCI.

The encryptor vendor has a different perspective and sent him the following requirements:

Multi-chassis Link Aggregation: Stacking on Steroids

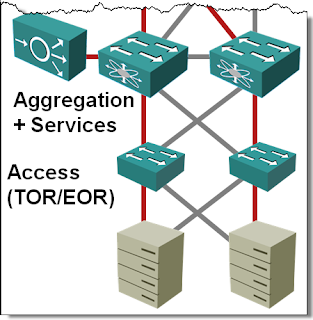

In the Multi-chassis Link Aggregation (MLAG) Basics post I described how you can use (usually vendor-proprietary) technologies to bundle links connected to two upstream switches into a single logical channel, bypassing the Spanning Tree Protocol (STP) port blocking. While every vendor takes a different approach to MLAG, there are only a few architectures that you’ll see. Let’s start with the most obvious one: stacking on steroids.

PFC/ETS and storage traffic: the real story

Data Center Ethernet (or DCB or CEE, depending on who you are) is a hot story these days and it’s no wonder that misconceptions abound. However, when I hear several CCIEs I highly respect say that “Priority Flow Control can be used to stop all the other traffic when storage needs more bandwidth”, I get worried. Exactly the opposite is true: you use PFC to stop the overzealous storage traffic (primarily FCoE, but also iSCSI) to make sure you don’t drop it.

… updated on Sunday, May 8, 2022 09:21 UTC

Multi-Chassis Link Aggregation (MLAG) Basics

If you ask any networking engineer building layer-2 fabrics the traditional way about his worst pains, I’m positive Spanning Tree Protocol (STP) will be very high on the shortlist. In a well-designed fully redundant hierarchical bridged network where every device connects to at least two devices higher in the hierarchy, you lose half the bandwidth to STP loop prevention whims.

Introduction to 802.1Qaz (Enhanced Transmission Selection – ETS)

Enhanced Transmission Selection (ETS) is the second part of the Data Center Bridging puzzle (I’ve already described Priority Flow Control). It specifies two different technologies:

- Queuing mechanisms in bridges

- Data Center Bridging eXchange protocol: a Control/Negotiation protocol that allows bridges and hosts to negotiate QoS parameters in a bridged network.

Although some bridges from some vendors supported numerous QoS mechanisms in the past, 802.1Qaz is the first attempt to standardize a richer set of QoS behaviors than the strict priority queuing defined in 802.1p.
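To make the bandwidth-sharing idea concrete, here is a minimal sketch of how a link could be divided among traffic classes that each have a guaranteed percentage, with bandwidth a class does not use handed to the classes that still have traffic to send. The function name `ets_allocate`, the class names, and the redistribution loop are illustrative assumptions; this is not the scheduler specified in 802.1Qaz nor any vendor's implementation.

```python
def ets_allocate(link_gbps, shares, offered):
    """Split link_gbps among traffic classes (illustrative sketch only).

    shares:  class name -> guaranteed percentage of the link (hypothetical values)
    offered: class name -> offered load in Gbps
    Returns: class name -> allocated Gbps
    """
    alloc = {c: 0.0 for c in shares}
    # Only classes that actually have traffic compete for bandwidth.
    active = {c for c in shares if offered.get(c, 0.0) > 0}
    remaining = float(link_gbps)

    while active and remaining > 1e-9:
        total_share = sum(shares[c] for c in active)
        if total_share <= 0:
            break
        satisfied = set()
        spent = 0.0
        for c in active:
            # Each active class gets its proportional slice of what is left,
            # capped at what it still needs.
            fair = remaining * shares[c] / total_share
            give = min(fair, offered[c] - alloc[c])
            alloc[c] += give
            spent += give
            if alloc[c] >= offered[c] - 1e-9:
                satisfied.add(c)
        remaining -= spent
        if not satisfied:
            break  # every active class is limited by its share
        active -= satisfied  # leftover goes to the classes still hungry

    return alloc


if __name__ == "__main__":
    # Storage wants more than its 50% share, LAN traffic leaves room to spare.
    print(ets_allocate(10, {"storage": 50, "lan": 50}, {"storage": 8, "lan": 2}))
    # Both classes congested: each is held to its guaranteed share.
    print(ets_allocate(10, {"storage": 50, "lan": 50}, {"storage": 8, "lan": 8}))
```

In the first call the storage class borrows the bandwidth the LAN class leaves idle; in the second, both classes are congested and each falls back to its guaranteed share.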

Multihop FCoE 102: VN_port proxy and FIP snooping

A few weeks ago I wrote about the multihop FCoE basics and the two fundamentally different ways an FCoE network could be designed: FCoE on every switch or FCoE on the edges with DCB-extended bridging in the middle.

There are two other configurations you’ll likely see in access parts of an FCoE network: FCoE VN_port proxying and FIP snooping.

ATAoE for Converged Data Center Networks? No Way

When I started writing about the storage industry and its attempts to tweak Ethernet to its needs, someone mentioned ATAoE. I read the ATAoE Wikipedia article and concluded that this dinky technology probably makes sense in a small home office… and then I stumbled across an article in The Register that claimed you could run a 9000-user Exchange server on ATAoE storage. It was time to deep-dive into this “interesting” L2+7 protocol. As expected, there are numerous good reasons you won’t hear about ATAoE in my Data Center 3.0 for Networking Engineers webinar.

Storage networking is like SNA

I’m writing this post while travelling to the Net Field Day 2010, the successor to the awesome Tech Field Day 2010 during which the FCoTR technology was launched. It’s thus only fair to extend that fantastic merger of two technologies we all love, look at the bigger picture and compare storage networking with SNA.

Notes:

- If you’re too young to understand what I’m talking about, don’t worry. Yes, you’ve missed all the beauties of RSRB/DLSw, CIP, APPN/APPI and the like, but major technology shifts happen every other decade or so, so you’ll be able to use FC/FCoE/iSCSI analogies the next time (and look like a dinosaur to the rookies). Make sure, though, that you read the summary.

- I’ll use present tense throughout the post when comparing both environments although SNA should be mostly history by now.

Long-distance vMotion and the traffic trombone

A few days ago I wrote about the impact of vMotion on a Data Center network and the traffic flow issues. Now let’s walk through what happens when you move a running virtual machine (VM) between two data centers (long-distance vMotion). Imagine we’re moving a web server that is:

- Serving a few Internet clients (with firewall/NAT and/or load balancing somewhere in the path);

- Getting most of its data from a database server sitting nearby;

- Reading and writing to a local disk.

The traffic flows are shown in the following diagram:

vMotion: an elephant in the Data Center room

A while ago I had a chat with a fellow CCIE (working in a large enterprise network with a reasonably-sized Data Center) and briefly described vMotion to him. His response: “Interesting, I didn’t know that.” ... and “Ouch” a few seconds later as he realized what vMotion means from bandwidth consumption and routing perspectives. Before going into the painful details, let’s cover the basics.