Goodbye, Leaf-and-Spine Networks?

Of course not

A friend of mine sent me links to a new paper published by AWS engineers, and an associated LinkedIn post which claims:

We got lean, resilient, massive aggregation fabrics that provide 33% better throughput with 69% fewer routers, savings 27% of costs, cutting power usage by 40%, and reducing CO2 emissions.

The obvious question one should ask after reading the hyperventilated Radical Network Redesign blog post is thus: is this the end of leaf-and-spine networks? Of course not. Let’s go into the details.

Worth Reading: Genie Tarpit

Following a link in Martin Fowler’s Fragments, I stumbled upon Genie Tarpit by Kent Beck – a perfect summary of my experiences with AI coding (code reviews are OK, new code less so). He also provided a good reason for that behavior:

The “plausible deniability” task orientation of the genie leaves it claiming success even though the code doesn’t work at all.

And the proposed solution?

You probably saw this one coming—nobody knows.

netlab 26.06: OSPFv3 on FortiOS, MPLS/VPN on SR Linux

netlab release 26.06 adds OSPFv3 support on FortiOS (by @a-v-popov) and MPLS/VPN support on SR Linux. We also ensured the installation scripts work on Ubuntu 26.04 (everything else was OK) and updated the installed Vagrant version to 2.4.9 (we’re not using new Vagrant features; you don’t have to upgrade it in an existing installation).

Other than that, we added a few improvements and squashed a number of bugs.

Upgrading or Starting from Scratch?

- To upgrade your netlab installation, execute `pip3 install --upgrade networklab`.

- New to netlab? Start with the Getting Started document and the installation guide.

- Need help? Open a discussion or an issue in the netlab GitHub repository.

Lab: Implementing VRF-Lite with VXLAN

Did you know that you can implement a VRF-Lite design with VXLAN? All you need are devices that can run VRF routing protocols over VXLAN-backed VLAN segments.

Compared to the “traditional” VRF-Lite design, in which you need a set of VLANs on every link and every device running the routing protocol for every VRF, the VXLAN-based design needs just IP routing on the core switches, resulting in a design that’s pretty close to what we were building with DMVPN (without IPsec and NHRP complications).
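To get a feel for the difference in per-VRF state, here is a back-of-the-envelope sketch in Python. The `Fabric` class, the function names, and the counting model are illustrative assumptions, not something taken from the lab: the model assumes every link carries every VRF in the traditional design, and that edge devices in the VXLAN-based design run one underlay routing instance plus one instance per VRF.

```python
from dataclasses import dataclass


@dataclass
class Fabric:
    """Hypothetical fabric description used only for this estimate."""
    edge_devices: int  # devices with VRF-attached interfaces
    core_devices: int  # transit-only devices
    links: int         # point-to-point links in the fabric
    vrfs: int          # number of VRFs carried across the fabric


def traditional_vrf_lite(f: Fabric) -> dict:
    # Assumption: every link carries one VLAN per VRF, and every device
    # (core included) runs one routing protocol instance per VRF.
    return {
        "link_vlans": f.links * f.vrfs,
        "core_routing_instances": f.core_devices * f.vrfs,
        "edge_routing_instances": f.edge_devices * f.vrfs,
    }


def vxlan_vrf_lite(f: Fabric) -> dict:
    # Assumption: core devices run a single underlay instance and carry
    # no per-VRF VLANs; edge devices run the underlay instance plus one
    # instance per VRF over the VXLAN-backed VLAN segments.
    return {
        "link_vlans": 0,
        "core_routing_instances": f.core_devices,
        "edge_routing_instances": f.edge_devices * (f.vrfs + 1),
    }


fabric = Fabric(edge_devices=4, core_devices=2, links=8, vrfs=10)
print("traditional:", traditional_vrf_lite(fabric))
print("vxlan-based:", vxlan_vrf_lite(fabric))
```

In this model, the core-side state stops growing with the number of VRFs, while the edge devices pay for one extra (underlay) routing instance.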

Using netlab to Argue with Vendor TAC

A happy netlab user sent me an unexpected use case: they successfully used its multi-vendor capabilities to argue with a vendor TAC. Here’s the gist of the story (edited/anonymized for obvious reasons):

EVPN Centralized Routing with Arista EOS

TL&DR: SIP of Networking Was an Understatement 🤦‍♂️

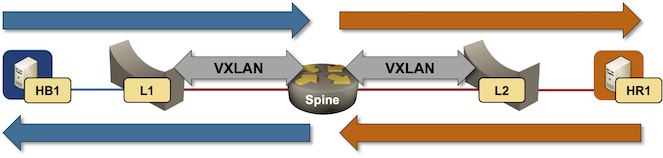

A month ago, I described ARP issues in EVPN centralized routing design, and Naveen Kumar Devaraj was kind enough to add some Arista EOS implementation details. Today, let’s explore what EVPN routes Arista EOS generates in that scenario. We’ll use a very simple lab topology with a spine switch acting as a router. The leaf switches are layer-2 switches.

Packet forwarding in centralized routing design

Worth Reading: Building the Tools I Wished I Had

Tony Mattke built several networking-focused CLI tools and released them on GitHub. You might find them useful.

SR-MPLS over Unnumbered Interfaces

After the simple SR-MPLS demo and the dual-stack SR-MPLS setup, it was time for the next obvious question: Does SR-MPLS work over unnumbered IPv4 interfaces, assuming the implementation of the underlying routing protocol supports them? Of course it does; let’s go through the details, using the same topology I used throughout the Segment Routing workshop @ ITNOG10.

130 Years of Wireless Communications

Here’s a short glimpse into the history of telecommunications: in a building at the top of this mountain (the barely noticeable blip across the saddle from the radio tower; search for Capo Figari for more details), Guglielmo Marconi conducted experiments in the 1930s (after inventing the wireless telegraph system in the late 1890s).

The original radio could “transmit” at most 40-60 words per minute (the limit of a skilled Morse Code operator). 130 years later, I’m writing this blog post using a 200 Mbps Internet connection via a low-earth-orbit satellite with response times low enough that I can run an interactive SSH session with no noticeable delay. It’s almost incomprehensible how far we’ve come in such a short time.

Worth Reading: Ephemeral BGP Leaks

Doug Madory wrote an interesting article (published on APNIC blog) arguing that we shouldn’t worry about ephemeral BGP leaks that can be observed only during the BGP path hunting process that follows a route withdrawal.

I have to disagree with that. It’s never a good idea to ignore a dead canary in the coal mine.

While the ephemeral leaks do not impact the end result (after all, the route is gone), they are an important indicator of the lack of BGP route policy enforcement in the autonomous systems that propagate them. If an autonomous system is propagating a bogus route when no better routes are available, it’s equally likely to propagate a bogus route when an intruder manages to inject it.
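The argument can be illustrated with a toy model in Python. Everything in it is a simplifying assumption made for illustration: routes are plain dictionaries, best-path selection is reduced to the shortest AS path, the inbound policy is a per-neighbor list of permitted prefixes, and the function names, AS numbers, and prefixes are made up.

```python
import ipaddress


def is_permitted(prefix, allowed):
    """Return True if the prefix falls within a prefix the neighbor may announce."""
    net = ipaddress.ip_network(prefix)
    return any(
        net.version == a.version and net.subnet_of(a)
        for a in map(ipaddress.ip_network, allowed)
    )


def best_route(routes, policy=None):
    """Pick the route with the shortest AS path (a toy best-path selection).

    policy maps a neighbor name to the prefixes it may announce;
    None models an autonomous system without inbound filters.
    """
    if policy is not None:
        routes = [
            r for r in routes
            if is_permitted(r["prefix"], policy.get(r["neighbor"], []))
        ]
    return min(routes, key=lambda r: len(r["as_path"]), default=None)


# Hypothetical routes for the same prefix (documentation address space)
legit = {"prefix": "192.0.2.0/24", "neighbor": "customer", "as_path": [65001]}
leak = {"prefix": "192.0.2.0/24", "neighbor": "peer",
        "as_path": [65002, 65003, 65001]}
policy = {"customer": ["192.0.2.0/24"], "peer": ["198.51.100.0/24"]}

# While the legitimate route exists, the leaked one never wins: the leak is hidden
print(best_route([legit, leak]) is legit)
# After the withdrawal, the unfiltered AS falls back to the leaked route
print(best_route([leak]) is leak)
# With an inbound policy in place, the leaked route is never considered
print(best_route([leak], policy))
```

The leaked route sits in the unfiltered autonomous system the whole time; the withdrawal merely makes it visible. Nothing in the unfiltered selection would stop an injected route with a shorter AS path from winning outright.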