EVB (802.1Qbg) – the S component

The Edge Virtual Bridging (EVB; 802.1Qbg) standard solves two important layer-2-based virtualization issues:

- Automatic provisioning of access switches based on hypervisor-signaled information (discussed in the “EVB eases VLAN configuration pains” article)

- Multiplexing of multiple logical 802.1Q links over a single physical link.

Logical link multiplexing might seem a solution in search of a problem until you discover that VMware-related design documents usually recommend using 6 to 10 NICs per server – an approach that either wastes switch ports or is hard to implement with blade servers’ mezzanine cards (due to the limited number of backplane connections).

Cisco was the first vendor to use NIC virtualization to keep VMware happy – the Palo adapter can create up to 128 virtual NICs on the same physical silicon (and two 10GE uplinks give you plenty of bandwidth and uplink redundancy). The Palo adapter uses proprietary VN-tag encapsulation, which Cisco promises will be replaced by 802.1Qbh.

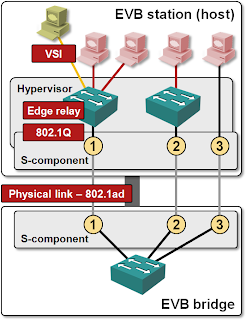

The S component of the EVB standard tries to solve the same problem using 802.1ad (Q-in-Q) encapsulation to allow EVB to run on existing hardware. The outer 802.1ad VLAN tag is used to indicate the logical link; the edge relay (virtual switch) can use the inner VLAN tag to provide 802.1Q-capable bridging functionality to the virtual machines. You can also connect a virtual machine’s NIC (Virtual Station Interface – VSI) directly to the S-component, for example in a hypervisor bypass (VMdirect) scenario.

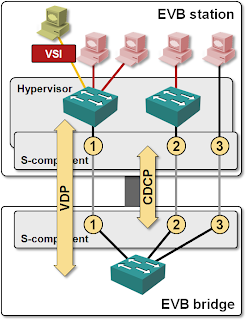
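To make the double-tagging concrete, here is a minimal Python sketch of how a frame might look on the wire between the S-component and the access switch. It assumes the standard 802.1ad S-tag TPID (0x88A8) for the outer tag and the 802.1Q TPID (0x8100) for the inner tag; the function names, MAC addresses and the S-channel and VLAN numbers are made up for illustration and are not taken from the EVB standard.

```python
import struct

TPID_S_TAG = 0x88A8  # 802.1ad service tag: outer tag, identifies the S-channel
TPID_C_TAG = 0x8100  # 802.1Q customer tag: inner tag, VLAN seen by the edge relay


def vlan_tag(tpid, vid, pcp=0, dei=0):
    """Build one 4-byte VLAN tag: 16-bit TPID followed by 16-bit TCI."""
    if not 0 <= vid <= 0xFFF:
        raise ValueError("VLAN ID must fit in 12 bits")
    tci = (pcp << 13) | (dei << 12) | vid
    return struct.pack("!HH", tpid, tci)


def encapsulate(dst_mac, src_mac, s_channel, c_vlan, ethertype, payload):
    """Return a Q-in-Q frame (without FCS): outer S-tag, then inner C-tag."""
    return (
        dst_mac
        + src_mac
        + vlan_tag(TPID_S_TAG, s_channel)   # logical link (hypothetical numbering)
        + vlan_tag(TPID_C_TAG, c_vlan)      # VLAN inside that logical link
        + struct.pack("!H", ethertype)
        + payload
    )


def parse_tags(frame):
    """Extract (S-channel ID, inner VLAN ID) from a double-tagged frame."""
    outer_tpid, outer_tci, inner_tpid, inner_tci = struct.unpack("!HHHH", frame[12:20])
    if outer_tpid != TPID_S_TAG or inner_tpid != TPID_C_TAG:
        raise ValueError("not an S-tag + C-tag frame")
    return outer_tci & 0x0FFF, inner_tci & 0x0FFF


if __name__ == "__main__":
    frame = encapsulate(
        dst_mac=b"\xff" * 6,
        src_mac=b"\x02\x00\x00\x00\x00\x01",
        s_channel=5,
        c_vlan=100,
        ethertype=0x0800,
        payload=b"demo",
    )
    print(parse_tags(frame))
```

The receiving side needs only the outer tag to pick the logical link; everything after it, including the inner 802.1Q tag, is handed to whatever sits at the end of that S-channel (an edge relay or a directly attached VSI).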

The EVB (802.1Qbg) standard also specifies the S-Channel Discovery and Configuration Protocol (CDCP), which allows the hypervisor host (EVB station) to create on-demand S-channels with the access switch (EVB bridge).

Does the S-component of EVB make sense today?

As I explained in the “EVB eases VLAN configuration pains” article, it’s very hard to implement Virtual Ethernet Bridge (VEB) and VSI Discovery Protocol (VDP) without hypervisor support. You can, however, implement the S-component and CDCP within BIOS/NIC firmware without hypervisor support. EVB-compliant switches and NICs could thus provide an alternative to Cisco’s Palo/VN-Tag.

EVB (802.1Qbg) or VN-Tag/802.1Qbh?

EVB’s S-component’s only advantage is its reliance on 802.1ad, making it implementable with existing 802.1ad-compliant hardware. Disregarding the legacy perspective, VN-Tag/802.1Qbh has two clear advantages:

- 802.1Qbh uses its own encapsulation scheme and thus provides a totally transparent point-to-point Ethernet channel; you can run 802.1Q, 802.1ad or 802.1ah on top of 802.1Qbh. 802.1Qbg provides 802.1Q-compliant logical channels.

- 802.1Qbh supports multicast replication between logical links. 802.1Qbg supports multicast replication only within the hypervisor switch; when the access switch has to send the same multicast/broadcast packet across more than one logical link, it has to send multiple copies of the same packet.
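The multicast difference is easy to quantify with a back-of-the-envelope model. The Python sketch below is a deliberate simplification, not a description of either standard's forwarding logic: it assumes that with replication between logical links a single copy crosses the physical link, and that without it the access switch sends one copy per logical link with at least one receiver. The function and parameter names are hypothetical.

```python
def copies_on_physical_link(receiver_channels, replicates_between_links):
    """Count copies of one multicast frame sent from access switch to server.

    receiver_channels: logical link ID for each interested receiver.
    replicates_between_links: True models the 802.1Qbh-style behavior
    (replication happens after the physical link), False models the
    802.1Qbg S-component (one copy per logical link).
    """
    channels = set(receiver_channels)  # several receivers may share one link
    if not channels:
        return 0
    return 1 if replicates_between_links else len(channels)


if __name__ == "__main__":
    # Four receivers spread over three logical links (illustrative numbers).
    receivers = [10, 10, 11, 12]
    print(copies_on_physical_link(receivers, replicates_between_links=True))
    print(copies_on_physical_link(receivers, replicates_between_links=False))
```

Receivers that share a logical link still get a single copy in both cases, because the hypervisor switch behind that link replicates the frame locally; the extra copies appear only when receivers sit behind different logical links.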

A full-blown EVB with VEB/VEPA/VDP hypervisor support would be a clear winner despite the S-component limitations due to the tight hypervisor/access switch integration it provides.

Quick reality check: apart from vague “we will provide EVB support once the standard is ratified” statements, I haven’t heard anything about EVB-compliant hypervisors yet.