Azure Networking 101

A few weeks ago I described the basics of AWS networking; now it’s time to describe how different Azure is.

As always, it would be best to watch my Azure Networking webinar to get the details. This blog post is the abridged CliffsNotes version of the webinar (and here’s the reason I won’t write a similar blog post for other public clouds ;).

High-Level Perspective

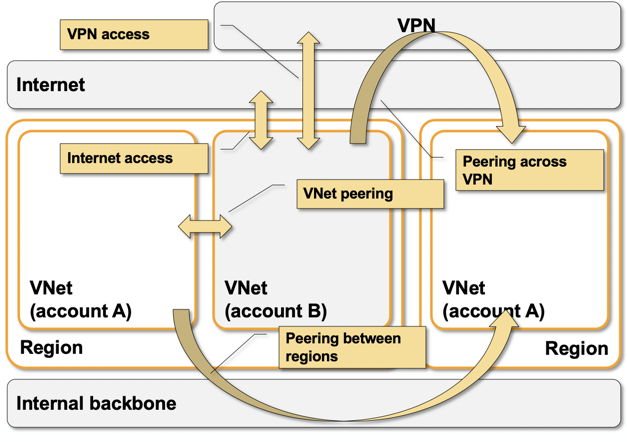

- Azure virtual networking is implemented with isolated Virtual Networks (VNets) that are always connected to the outside world through Internet gateways.

- A VNet can also be connected to another VNet through VNet peering or a VPN connection.

- You can use a Virtual Network Gateway (VNG) to connect a VNet (or a bunch of peered VNets) to the rest of your network through VPN or ExpressRoute (leased line) connections.

- A VNet is limited to a single Azure region.

- Every VNet has one or more subnets. Subnets can span availability zones (major difference with AWS), making it a bit harder to create application swimlanes.

Some Azure VNet external connectivity options

VNet Packet Forwarding Overview

Even though public cloud networking is just networking, it’s usually different from what you’d expect, and Azure is definitely going in a quite different direction.

- VNets support unicast IPv4 and IPv6 packet forwarding. IP multicast is not supported.

- VM IP and MAC addresses are controlled by the orchestration system.

  - The MAC address is passed to a VM as a (virtual) hardware parameter.

  - DHCP is used to pass IPv4 and IPv6 addresses configured in the orchestration system to the VMs.

- Packets are forwarded exclusively based on IPv4/IPv6 addresses. There is no L2 forwarding in Azure (major difference with AWS).

  - Even though packets to and from VM instances look like Ethernet packets, forwarding based on destination MAC address does not work.

  - ARP requests return meaningless data: all hosts in the same subnet seem to have the same MAC address (the MAC address of the Azure virtual router).

- Packet forwarding is based on the IP addresses configured in the orchestration system and can be influenced with per-subnet route tables.

  - Route tables are attached to individual subnets and can contain any prefix (including intra-VNet prefixes - major difference with AWS).

  - The next hop in a route table entry could be Internet, None (drop it), Virtual Network Gateway, Virtual Appliance (an IP address of a VM - difference with AWS), or VnetLocal (send directly to destination instance).

  - Route tables can be populated through the orchestration system or via external (VPN or ExpressRoute) BGP sessions.

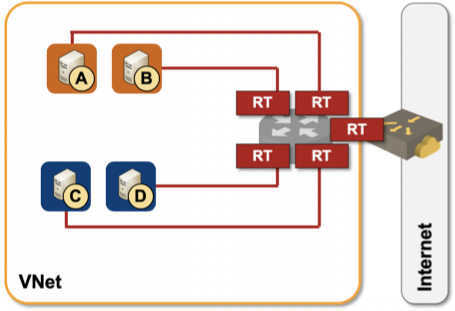

VM NICs are connected straight to Azure router
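The route-table behavior described above can be approximated with a longest-prefix-match lookup. What follows is a minimal Python sketch, not Azure's implementation: the `Route` class, the `lookup` function, and every prefix and address in it are made up for illustration; only the next-hop type names come from the list above. The sketch also ignores how Azure picks between routes from different sources that have the same prefix.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Route:
    prefix: str                        # destination prefix, e.g. "10.0.2.0/24"
    next_hop_type: str                 # Internet, None, VirtualNetworkGateway,
                                       # VirtualAppliance, or VnetLocal
    next_hop_ip: Optional[str] = None  # only used with VirtualAppliance


def lookup(route_table: List[Route], dst_ip: str) -> Optional[Route]:
    """Return the most specific route covering dst_ip (None if no route matches)."""
    dst = ipaddress.ip_address(dst_ip)
    candidates = [r for r in route_table if dst in ipaddress.ip_network(r.prefix)]
    return max(
        candidates,
        key=lambda r: ipaddress.ip_network(r.prefix).prefixlen,
        default=None,
    )


# Hypothetical route table attached to one subnet of a 10.0.0.0/16 VNet.
subnet_routes = [
    Route("10.0.0.0/16", "VnetLocal"),                     # deliver inside the VNet
    Route("0.0.0.0/0", "Internet"),                        # everything else
    Route("10.0.2.0/24", "VirtualAppliance", "10.0.1.4"),  # intra-VNet override
    Route("192.0.2.0/24", "None"),                         # drop
]

for dst in ("10.0.3.7", "10.0.2.10", "192.0.2.1", "8.8.8.8"):
    route = lookup(subnet_routes, dst)
    print(dst, "->", route.next_hop_type, route.next_hop_ip or "")
```

The `10.0.2.0/24` entry is the interesting one: it is more specific than the VNet prefix, so traffic toward that subnet is sent to the appliance at `10.0.1.4` instead of going directly to the destination VM.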

Consequences:

- More-specific prefixes in route tables allow you to influence intra-VNet packet delivery. It’s relatively easy (and confusing) to use route tables to set up service chaining to insert virtual network appliances in the packet forwarding path.

- Changing an IP or a MAC address in a VM usually results in a disconnected VM.

- First-hop routing protocols like HSRP or VRRP don’t work. Changing a MAC address of a VM will just disconnect it.

- The only way to pass an IP address from one VM to another is through an orchestration system call. The usual GARP tricks don’t work.

- Taking over an IP address of a failed instance probably changes packet forwarding behavior, but don’t expect it to be fast. The only way to get decently fast failover is to use an Azure load balancer.

- You cannot run a routing protocol between your instance and the Azure router. You could either use the orchestration system to modify the subnet route table(s) based on VM instance route tables, or run BGP with a VPN gateway in another VNet (see the corresponding section of AWS Networking 101 blog post for details).

- You could use a unicast-based routing protocol between VMs in the same subnet, but the routes derived from the routing protocol would be ignored by the Azure router, so packet forwarding wouldn’t work anyway. The only way to enable routing between virtual appliances is to create a tunnel and run a routing protocol and packet forwarding across that tunnel… but even there you’d hit a snag: the Azure router forwards only TCP, UDP, and ICMP traffic, so you’d have to use a VXLAN tunnel.
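To see why routes learned inside a VM don't help, it can be useful to follow a single packet through a toy model. The sketch below is an illustration under assumptions, not Azure code: `azure_forward`, the appliance addresses (`10.0.1.4` for A, `10.0.2.4` for C), and the `198.51.100.0/24` prefix behind C are all hypothetical. It models only one thing: the router choosing the next hop from the destination IP address and the subnet route table.

```python
import ipaddress


def azure_forward(subnet_route_table: dict, dst_ip: str) -> str:
    """Modeled router decision: longest-prefix match on the destination IP only."""
    dst = ipaddress.ip_address(dst_ip)
    matches = [
        (ipaddress.ip_network(prefix), next_hop)
        for prefix, next_hop in subnet_route_table.items()
        if dst in ipaddress.ip_network(prefix)
    ]
    if not matches:
        return "drop"
    return max(matches, key=lambda m: m[0].prefixlen)[1]


# What appliance A learned from appliance C over a routing protocol.
# This table lives inside the VM; the modeled router never sees it.
vm_a_routes = {"198.51.100.0/24": "10.0.2.4"}

# Hypothetical route table of A's subnet: VNet prefix plus a default route.
subnet_a_table = {"10.0.0.0/16": "VnetLocal", "0.0.0.0/0": "Internet"}

# A selects C as its next hop, but that choice shows up only in the destination
# MAC address, which is ignored. The destination IP is still 198.51.100.10.
before = azure_forward(subnet_a_table, "198.51.100.10")

# Same packet after the orchestration system adds a matching route-table entry.
subnet_a_fixed = dict(subnet_a_table)
subnet_a_fixed["198.51.100.0/24"] = "VirtualAppliance " + vm_a_routes["198.51.100.0/24"]
after = azure_forward(subnet_a_fixed, "198.51.100.10")

print("without route-table entry:", before)
print("with route-table entry:   ", after)
```

In this model the packet follows the subnet's default route until the route table itself contains the prefix, which is why the routing protocol alone is not enough.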

Need more details? I already told you where to find them, and we covered numerous BYOA (Bring Your Own Appliance) designs in the Networking in Public Cloud Deployments online course.

Ivan,

Insightful article. I do have a question though. Why this statement: "There is no L2 forwarding in Azure (major difference with AWS)"?

Is there also no L2 forwarding in AWS? Can you elaborate?

Forwarding within an AWS VPC subnet is based on destination MAC address (unicast only, no flooding); forwarding across subnets is based on destination IP address. You'll find an overview here...

https://blog.ipspace.net/2020/05/aws-networking-101.html

... and the expected deep dive in the AWS Networking webinar.

Another gotcha with Azure is "IP forwarding". If you expect an Azure NIC to emit packets with a source IP address other than its own (e.g. in the case of a virtual router appliance), you have to enable this feature; otherwise, the packets will get dropped on egress. https://docs.microsoft.com/en-us/azure/virtual-network/virtual-network-network-interface#enable-or-disable-ip-forwarding

Hi, can you comment on using eBGP multihop between NVAs in different subnets of an Azure VNet, or between peered VNets? In theory this should work, but I've had no success. I can get the peering up and routes are exchanged, appearing as "next hop <eBGP peer IP> recursive via <snet GW IP>", but without explicitly adding the destination network in the RT via the eBGP peer, traffic is not forwarded.

I would say the second diagram answers your question. Imagine an eBGP session between A and C and follow a packet between A and C every step of the way.