Complex Routing in Hyper-V Network Virtualization

The layer-3-only Hyper-V Network Virtualization forwarding model implemented in Windows Server 2012 R2 thoroughly confuses engineers used to dealing with traditional layer-2 subnets connected via layer-3 switches.

As always, it helps to take a few steps back and focus on the principles; the “unexpected” behavior then becomes crystal clear.

2014-02-05: HNV routing details updated based on feedback from Praveen Balasubramanian. Thank you!

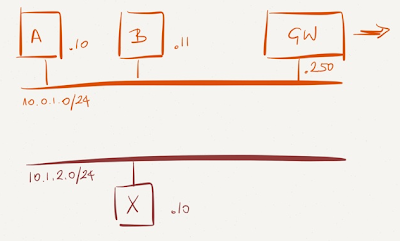

Sample network

Let’s start with a virtual network that’s a bit more complex than a single VLAN:

Next, assume the following connectivity requirements:

- VM A must use the gateway (GW - for example a Vyatta router-in-a-VM) to communicate with the outside world;

- VM B and VM X must communicate.

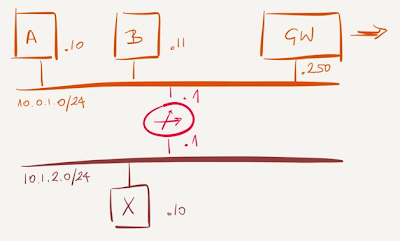

It’s obvious we need a router (aka L3 switch) to link the two segments; otherwise B and X cannot communicate. In a traditional VLAN-based network you’d use a physical layer-3 switch with SVI/VLAN interfaces somewhere in the network to connect the two VLANs; in a layer-2 overlay virtual network world like VXLAN you could use a VM-based router.

There are at least two ways to achieve the desired connectivity in a traditional layer-2 world:

- Set the default gateway to the router (.1) on B and X, and set the default gateway to .250 (GW) on A. Pretty bad design if I’ve ever seen one.

- Set the default gateway to the router (.1) on all VMs (apart from the gateway VM) and configure a static default route pointing to .250 (GW) on the router.

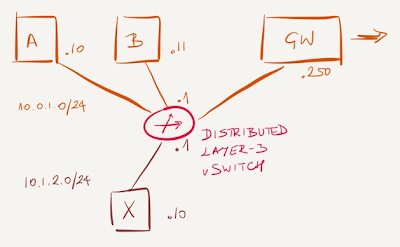

Distributed routing in Hyper-V Network Virtualization

Hyper-V Network Virtualization (HNV) doesn’t have layer-2 segments. Every VM is directly connected to the distributed layer-3 switch (implemented in all hypervisors). The virtual network topology thus looks like this:

Even though HNV documentation talks about subnets and prefixes, that’s not how the forwarding works. HNV forwarding works like a router that has the same IP address on all interfaces (in the same subnet) and the equivalent of a host route (NetVirtualizationLookupRecord) for every connected VM.
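The host-route idea can be illustrated with a minimal Python sketch. It is a toy model, not HNV code: the VM addresses, the hypervisor names (`hv1`, `hv2`) and the `forward` helper are all assumptions made up for illustration. The point is only that the lookup is keyed on the full destination address, so subnet boundaries play no role in finding a directly connected VM.

```python
from ipaddress import ip_address

# Hypothetical lookup records: customer IP address -> hypervisor hosting the VM.
# All addresses and hypervisor names are invented for this sketch.
LOOKUP_RECORDS = {
    ip_address("10.0.1.11"): "hv1",   # VM A (assumed address)
    ip_address("10.0.1.12"): "hv1",   # VM B (assumed address)
    ip_address("10.1.2.11"): "hv2",   # VM X (assumed address)
}


def forward(dst):
    """Return the hypervisor holding a host route for dst, or None if there is none."""
    # Exact-match lookup on the whole address, like a /32 host route.
    return LOOKUP_RECORDS.get(ip_address(dst))


if __name__ == "__main__":
    # B -> X crosses a "subnet boundary", but the lookup is identical to A -> B.
    print(forward("10.1.2.11"))
    print(forward("10.0.1.12"))
```

In this sketch a destination without a record simply returns `None`; what a real HNV deployment does with such a packet depends on its configured customer routes.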

After redrawing the diagram it’s obvious what needs to be configured to get the desired connectivity:

- Set the default gateway to the distributed router (.1) on all VMs;

- Configure a default route in the HNV environment with the New-NetVirtualizationCustomerRoute PowerShell cmdlet.

Furthermore, Windows Server 2012 R2 requires the external gateway to be in a separate subnet. The GW VM should be moved from 10.0.1.0/24 into a different subnet (for example, 10.0.2.0/24), resulting in the following Hyper-V network virtualization routing table:

| Prefix | Next-hop |
|---|---|
| 10.0.1.0/24 | On-link |
| 10.0.2.0/24 | On-link |
| 10.1.2.0/24 | On-link |
| 0.0.0.0/0 | 10.0.2.250 |
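To see how such a table resolves a destination, here is a small Python sketch of an ordinary longest-prefix-match lookup over the four entries above. It assumes the HNV routing table behaves like a conventional routing table in this respect; the `next_hop` helper and the test destinations are hypothetical and only serve the illustration.

```python
from ipaddress import ip_address, ip_network

# The routing table from the article: (prefix, next hop).
ROUTES = [
    (ip_network("10.0.1.0/24"), "On-link"),
    (ip_network("10.0.2.0/24"), "On-link"),
    (ip_network("10.1.2.0/24"), "On-link"),
    (ip_network("0.0.0.0/0"), "10.0.2.250"),
]


def next_hop(dst):
    """Return the next hop of the most specific route that covers dst."""
    addr = ip_address(dst)
    matches = [(net, hop) for net, hop in ROUTES if addr in net]
    # The default route matches everything, so matches is never empty.
    _, hop = max(matches, key=lambda entry: entry[0].prefixlen)
    return hop


if __name__ == "__main__":
    for dst in ("10.1.2.11", "10.0.2.250", "192.0.2.1"):
        print(dst, "->", next_hop(dst))
```

Destinations inside any of the three virtual subnets resolve to On-link, including the gateway itself; everything else is sent toward 10.0.2.250.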

But I lost my precious security

I’m positive someone will start complaining at this point. In the pretty bad design I mentioned above, VM B couldn’t communicate with the outside world (unless the router connecting the two segments had a default route pointing to the GW VM), and it’s impossible to achieve the same effect with routing in an HNV environment.

However, keep in mind that security through obscurity is never a good idea (told you it was a bad design), and there’s a good reason routers and layer-3 switches have ACLs. Speaking of ACLs, you can configure them in Hyper-V with the New-NetFirewallRule cmdlet, and since all VM ports are equivalent (there are no layer-2 and layer-3 ports), you get consistent results regardless of whether the source and destination VMs are in the same or different subnets.

More information

If you’re struggling with the overlay virtual networking concepts, consider the Overlay Virtual Networking and Cloud Computing Networking webinars (both part of the Cloud Computing Webinar Roadmap and yearly subscription).

You can also engage me to help you design your network, review your design, or discuss a particularly nasty forwarding problem.

Also, nothing stops you from having more than one router running a FHRP in the virtual environment. Or from having another redundancy strategy. You should check out AWS's redundant NAT Instance design as an example of what kind of things you can do. And as far as security is concerned, again, nothing stops you from implementing a whole security stack in the virtual environment.