Blog Posts in October 2012

Setting NO-EXPORT BGP Community

A reader of my blog experienced problems setting the no-export BGP community. Here’s a quick how-to guide (if you’re new to BGP, you might want to read the BGP Communities and BGP and route maps posts first).

IPv6 RADIUS Accounting

Somehow I got involved in an IPv6 RADIUS accounting discussion. This is what I found to work in Cisco IOS release 15.2(4)S:

Coping with Holiday Traffic – Secondary DHCP Subnets

Years ago the IT department of the organization I worked for assigned a /28 to my home office. It seemed like enough; after all, who would ever have more than ~10 IP hosts at home (or more than four computers at a site)?

When the number of Linux hosts and iGadgets started to grow, I occasionally ran out of IPv4 addresses, but managed to kludge my way around the problem by reducing DHCP lease time. However, when the start of school holidays coincided with the first snow storm of the season (so all the kids used their gadgets simultaneously) it was time to act.
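To put the /28 into numbers, here is a minimal sketch using Python's standard ipaddress module. The prefix 192.0.2.0/28 is a documentation range used as a stand-in (the real prefix is not given in the post), and reserving a single address for the default gateway is an assumption.

```python
import ipaddress

# Assumption: 192.0.2.0/28 is a documentation prefix standing in for the
# real home-office subnet, which the post does not name.
home_subnet = ipaddress.ip_network("192.0.2.0/28")

# Every address in the prefix, including network and broadcast addresses.
total_addresses = home_subnet.num_addresses

# hosts() skips the network and broadcast addresses.
usable_hosts = len(list(home_subnet.hosts()))

# Assumption: one usable address is taken by the default gateway.
gateway_addresses = 1
dhcp_pool_size = usable_hosts - gateway_addresses

print(total_addresses, usable_hosts, dhcp_pool_size)  # 16 14 13
```

With roughly a dozen leases available, shortening the DHCP lease time only helps as long as the devices are not all online at the same time.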

VM-level IP Multicast over VXLAN

Dumlu Timuralp (@dumlutimuralp) sent me an excellent question:

I always get confused when thinking about IP multicast traffic over VXLAN tunnels. Since VXLAN already uses a Multicast Group for layer-2 flooding, I guess all VTEPs would have to receive the multicast traffic from a VM, as it appears as L2 multicast. Am I missing something?

Short answer: no, you’re absolutely right. IP multicast over VXLAN is clearly suboptimal.

Beware of the Pre-Bestpath Cost Extended BGP Community

One of my readers sent me an interesting problem a few days ago: the BGP process running on a PE-router in his MPLS/VPN network preferred an iBGP route received from another PE-router to a locally sourced (but otherwise identical) route. When I looked at the detailed printout, I spotted something “interesting” – the pre-bestpath cost extended BGP community.

The Best of Last Week’s IPv6 Summit

Last week’s IPv6 summit organized by Jan Žorž was probably one of the best events to attend for engineers interested in real-life IPv6 deployment experience. Some of the highlights included:

- IPv6: Past, Present and Future by Robert Hinden, one of the creators of IPv6;

- Cisco’s IPv6 deployment experiences by Andrew Yourtchenko, technical leader @ Cisco;

- IPv6 deployment in Yahoo by Jason Fesler, distinguished architect @ Yahoo;

- Lessons learned while deploying IPv6 in US Government by Ron Broersma, Network Security Manager @ SPAWAR;

- IPv6 implementation at Time Warner Cable by their director of technology development, Lee Howard of CGN-is-too-expensive fame.

Enjoy! ... and thank you, Jan, for an excellent event.

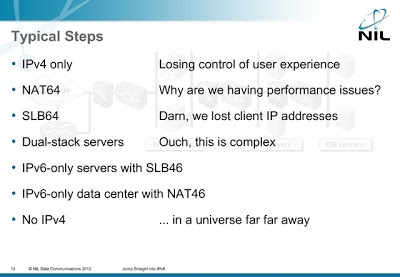

Skip the Transitions, Build IPv6-Only Data Centers

During last week’s IPv6 Summit I presented an interesting idea first proposed by Tore Anderson: let’s skip all the transition steps and implement IPv6-only data centers.

You can view the presentation or watch the video; for more details (including the description of routing tricks to get this idea working with vanilla NAT64), watch Tore’s RIPE64 presentation.

Is Layer-3 DCI Safe?

One of my readers sent me a great question:

I agree with you that L2 DCI is like driving without a seat belt. But is L3 DCI safer in case of DCI link failure? Let's say you have your own AS and PI addresses in use. Your AS spans multiple sites and there are external BGP peers on each site. What happens if the L3 DCI breaks? How will that impact your services?

Simple answer: while L3 DCI is orders of magnitude safer than L2 DCI, it will eventually fail, and you have to plan for that.

IPv6 First-Hop Security: Ideal OpenFlow Use Case

Supposedly it’s a good idea to be able to identify which one of your users had a particular IP address at the time when that source IP address created significant havoc. We have a definitive solution for the IPv4 world: DHCP server logs combined with DHCP snooping, IP source guard and dynamic ARP inspection. The IPv6 world is a mess: read this e-mail message from the v6ops mailing list and watch Eric Vyncke’s RIPE65 presentation for excruciating details.

If Something Can Fail, It Will

During a recent consulting engagement I had an interesting conversation with an application developer who couldn’t understand why a fully redundant data center interconnect cannot guarantee 100% availability.

Dear $Vendor, NETCONF != SDN

Some vendors feeling the urge to SDN-wash their products claim that the ability to “program” them through NETCONF (or XMPP or whatever other similar mechanism) makes them SDN-blessed.

There might be a yet-to-be-discovered vendor out there that creatively uses NETCONF to change the device behavior in ways that cannot be achieved by CLI or GUI configuration, but most of them use NETCONF as a reliable Expect script.

Disabling IP unreachables breaks pMTUd

A while ago someone sent me an interesting problem: the moment he enabled simple MPLS in his enterprise network with mpls ip interface configuration commands, numerous web applications stopped working. My first thought was “MTU problems” (the usual culprit), but path MTU discovery should have taken care of that.
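The MTU suspicion comes down to simple arithmetic: every MPLS label adds 4 bytes to the packet, so a full-size IP packet no longer fits a link with an unchanged MTU. The router then has to drop packets that have the DF bit set and report the problem with an ICMP destination unreachable (fragmentation needed) message, which is exactly what path MTU discovery depends on. The sketch below is a simplified model, not a description of any router's implementation; the 1500-byte link MTU and the single-label stack are assumptions.

```python
MPLS_LABEL_BYTES = 4  # size of one MPLS label stack entry (RFC 3032)


def labeled_packet_size(ip_packet_bytes: int, label_count: int) -> int:
    """Size of an IP packet after label_count MPLS labels are pushed."""
    return ip_packet_bytes + label_count * MPLS_LABEL_BYTES


def fits_link(ip_packet_bytes: int, label_count: int, link_mtu_bytes: int) -> bool:
    """True if the labeled packet can cross the link without fragmentation."""
    return labeled_packet_size(ip_packet_bytes, label_count) <= link_mtu_bytes


# Assumptions: default Ethernet MTU on the core link, one label per packet.
link_mtu = 1500
labels = 1

print(labeled_packet_size(1500, labels))  # 1504
print(fits_link(1500, labels, link_mtu))  # False: dropped if DF is set
print(fits_link(1496, labels, link_mtu))  # True: sender lowered its path MTU
```

If the ICMP unreachable never reaches the sender, it keeps retransmitting 1500-byte packets and the session stalls.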

Don’t use IPv6 RA on server LANs

Enabling IPv6 on a server LAN with the ipv6 address interface configuration command without taking additional precautions might be a bad idea. All modern operating systems have IPv6 enabled by default, and the moment someone starts sending Router Advertisement (RA) messages, they’ll auto-configure their LAN interfaces.

Updated webinar roadmaps

The cloudy and cold autumn weather is the perfect backdrop for marketing-related work. I finally managed to update the webinar roadmaps: overview, data center, virtualization, IPv6 and VPN. And yes, you get immediate access to all that (over 46 hours of content) with the yearly subscription.

You MUST Take Control of IPv6 in Your Network

I’m positive most of you are way too busy dealing with operational issues to start thinking about IPv6 deployment (particularly if you’re working in the enterprise world; European service providers using the same “strategy” just got a rude wake-up call). Bad idea – if you ignore IPv6, it will eventually blow up in your face. Here’s how:

The best of RIPE65

Last week I had the privilege of attending RIPE65, meeting a bunch of extremely bright SP engineers, and listening to a few fantastic presentations (full meeting report @ RIPE65 web site).

I knew Geoff Huston would have a great presentation, but his QoS presentation was even better than I expected. I don’t necessarily agree with everything he said, but every vendor peddling QoS should be forced to listen to his explanation of the underlying problems and kludgy solutions first.