6WIND: Solving the Virtual Appliance Performance Issues

We all know that the performance of virtual networking appliances (firewalls, load balancers, routers ... running inside virtual machines) really sucks, right? Some vendors managed to offload the packet-intensive processing into the hypervisor kernel, getting way more bang for the buck, but that’s a pretty R&D-intensive undertaking.

We also know that The Real Men use The Real Hardware (ASICs and FPGAs) to get The Real Performance, right? Wrong!

Real Men use Real Hardware

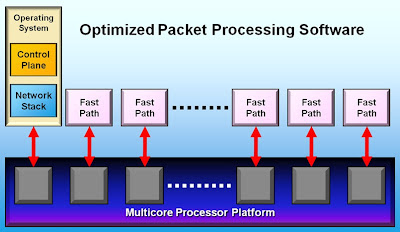

It turns out the generic Intel chipset is extremely powerful ... assuming you know how to use it, and that’s what 6WIND has been doing for the last decade – among other things, they have acquired extensive experience with the Intel Data Plane Development Kit and written a full-blown TCP/IP stack on top of it.

So, what’s the big deal? It turns out nobody (including VMware) ever wanted to spend too much time getting the networking part of servers and operating systems right, so we’re burdened with a lasagna-like hodgepodge of just-good-enough-to-work layers, from device drivers through the whole Linux TCP/IP stack.

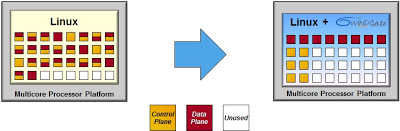

The 6WINDGate software complements the whole Linux networking stack with a high-performance multi-core implementation that supports all data-plane and control-plane protocols you’ll ever need (unless you’re still stuck with AppleTalk). Interestingly enough, it can coexist with the TCP/IP stack in the Linux kernel: you don’t have to patch or rebuild the kernel to improve the performance, because the 6WINDGate software installs its own parallel stack and intercepts API calls (for example, Netlink calls).

Not surprisingly, you get amazing performance once you manage to squeeze the most out of the available hardware. They claim they can increase the performance of a Linux-based networking appliance by a factor of 10, and that the performance scales linearly with the number of CPU cores allocated to run the 6WINDGate fast path (which does make sense – packet/flow processing is a highly parallelizable activity). They say they can share some performance results without an NDA – just contact them.
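To illustrate why per-flow packet processing parallelizes so well, here is a minimal sketch of hash-based flow distribution across cores. It is not 6WINDGate code: the function names, the CRC32 hash, and the synthetic flows are all assumptions made for illustration (real NICs typically use a hardware hash for receive-side scaling). The point is only that every packet of a flow lands on the same core, so cores need little coordination with one another.

```python
import zlib
from collections import Counter

def core_for_flow(src_ip, dst_ip, src_port, dst_port, proto, num_cores):
    """Map a flow's 5-tuple to a core index (hypothetical helper).

    Hashing the 5-tuple keeps all packets of one flow on one core,
    so per-flow state never has to be shared between cores.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{proto}".encode()
    return zlib.crc32(key) % num_cores

def distribute(flows, num_cores):
    """Count how many flows each core would handle."""
    load = Counter()
    for flow in flows:
        load[core_for_flow(*flow, num_cores)] += 1
    return load

# Synthetic flows (made-up addresses and ports, TCP = protocol 6).
flows = [
    (f"10.0.0.{i % 250 + 1}", "192.0.2.1", 1024 + i, 80, 6)
    for i in range(10000)
]
load = distribute(flows, num_cores=4)
print(sorted(load.items()))
```

With a reasonably uniform hash, each core ends up with a similar share of the flows, which is the intuition behind throughput growing with the number of fast-path cores.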

Summary: 6WIND claims you can get up to 10x more performance out of the existing commodity hardware by adding the 6WINDGate stack to an existing bare-metal Linux-based appliance. But can they repeat that feat in the virtual space?

Entering the Virtual World

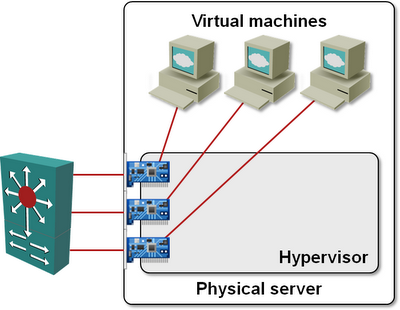

David Le Goff, 6WIND’s product manager, was kind enough to walk me through 6WIND’s recent Cloud Infrastructure announcement. Once you manage to get past the numerous layers of marketing messages, the solution is blindingly simple: if you want to have the performance you need, you have to bypass the hypervisor:

Step#1 – Install 6WINDGate software in the virtual appliance VM, and use your solution directly or integrate your own protocols with their fast path API;

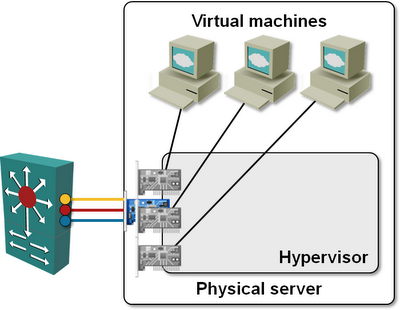

Step#2 – Bypass the virtual switch and connect the data-path NICs of the virtual machine directly to the physical NICs using VMDirectPath or a Xen/Hyper-V equivalent.

Obviously you need plenty of physical NICs in the server running the virtual appliances (or you use UCS blades with Palo chipset and Adapter FEX), and you can’t vMotion the appliance, but the performance differences should more than offset any inconveniences.

Tying physical NICs to guest VMs and bypassing the hypervisor vSwitch usually results in a loss of functionality. For example, you have to do your own VLAN tagging, either using the capabilities of the VM’s software or configuring a VLAN access port on the first-hop switch (external switch tagging – EST – in VMware lingo). However, 6WINDGate does include VLAN and MPLS tagging and GRE tunneling, and I wouldn’t mind a few extra configuration steps for a tenfold increase in performance.
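To make "doing your own VLAN tagging" concrete, here is a minimal sketch of what in-guest tagging has to do to every outgoing Ethernet frame: insert a 4-byte 802.1Q tag after the source MAC address. The function name and the sample frame are hypothetical, and this is not 6WINDGate's API; it only shows the standard frame layout.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q-tagged frame

def add_vlan_tag(frame: bytes, vlan_id: int, priority: int = 0) -> bytes:
    """Insert an 802.1Q tag into an untagged Ethernet frame (hypothetical helper).

    The tag goes after the 6-byte destination and 6-byte source MAC
    addresses, in front of the original EtherType field.
    """
    if not 0 <= vlan_id <= 4095:
        raise ValueError("VLAN ID must fit in 12 bits")
    if not 0 <= priority <= 7:
        raise ValueError("priority must fit in 3 bits")
    # Tag control information: 3-bit priority, 1-bit DEI (left at 0), 12-bit VLAN ID.
    tci = (priority << 13) | vlan_id
    return frame[:12] + struct.pack("!HH", TPID_8021Q, tci) + frame[12:]

# Made-up untagged frame: dst MAC, src MAC, EtherType 0x0800 (IPv4), dummy payload.
untagged = bytes.fromhex("ffffffffffff" "020000000001" "0800") + b"payload"
tagged = add_vlan_tag(untagged, vlan_id=100, priority=3)
```

With external switch tagging, the first-hop switch adds the equivalent tag on the access port, so the VM keeps sending untagged frames.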

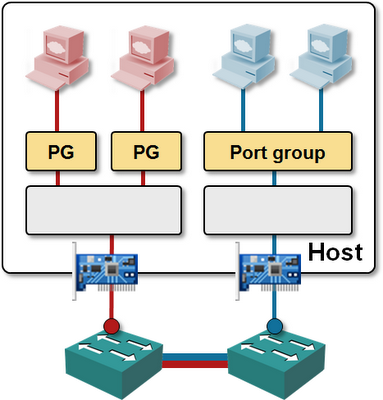

Finally, 6WIND can go one step further: if you have multiple VMs with the 6WINDGate stack in the same hypervisor, they can bypass the hypervisor vSwitch when talking to each other. A VM using 6WINDGate can still communicate through the vSwitch with other VMs or with the orchestration/management software while having high-speed communication with other VMs using 6WINDGate.

Intrigued? Interested? See a perfect fit between your cloudy needs and 6WIND’s software? Go talk to David.

Need more information?

- The diagrams in this blog post were taken from the VMware Networking Deep Dive webinar, which explains all the details of VMware networking and related virtual appliances.

- If you need a gentler introduction to the virtualized networking world, watch the Introduction to Virtualized Networking or Cloud Computing Networking – Under the Hood webinars.

- Don’t forget: you get access to all these webinars (and numerous others) if you buy the yearly subscription.

- Finally: if you ever need a second opinion, in-depth technology review or design help, there’s the ExpertExpress service.

What about intercepting 6W to 6W communications, for example, for IPS/IDS purposes?

Good question!

Actually, we have all the open and standard APIs to integrate with whatever OSS you use for network communication troubleshooting or security purposes. Meanwhile, we are working with some of those particular layers to hairpin the logs.

Thanks!

There's another company working on similar kinds of network acceleration: Linerate Systems. http://lineratesystems.com/

Anyway, if VM traffic is being funneled through a 6WIND virtual appliance of sorts, then this gets interesting. I imagine such an appliance would need to run on every host, sort of like an extension of the virtual switch. I've seen that setup before, where each host has multiple vSwitches: one with the physical uplinks and the appliance, and the other with the appliance (providing switching/routing capabilities) and the production VMs.

Does this setup interfere with support for features such as vMotion/live migration?

Obviously having direct connectivity between VM and physical HW (NIC) breaks VM mobility.

A 6WIND appliance would be a cool idea, but would still require a 6WIND stack in the "fast path" VMs. A decent stack in the hypervisor would be the right solution ;)

There are two concerns with software appliances running on top of virtualization:

- The guest OS kernel does not scale linearly across multiple cores.

- The hypervisor kernel brings performance penalties as well.

We bring performance improvements there. Obviously vMotion may be more difficult to implement, but the question is: do you need to vMotion virtual appliances when those VAs could (and should) be implemented with HA?

A use case with 15 VMs protected by a virtual firewall on a host would require only one 6WINDGate stack, on the vFirewall VA; that's all. We do not touch the other VMs. :)

Hope it is more clear!

http://www.tilera.com/products/processors/TILE-Gx_Family

and they are supported by ... 6WIND!

http://www.tilera.com/about_tilera/press-releases/6wind-releases-packet-processing-software-optimized-tilera%E2%80%99s-tilepro64-p