Blog Posts in November 2009

HQF: intra-class WFQ ignores the IP precedence

Continuing my tests of the Hierarchical Queuing Framework, I tested whether intra-class fair queuing works like the previous IOS implementations, where high-precedence sessions got proportionally more bandwidth.

Summary: Fair queuing within HQF ignores IP precedence.
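To make the difference concrete, here is a minimal Python sketch comparing the two models. It assumes the commonly documented classic WFQ model in which a flow's share is proportional to (IP precedence + 1); the function names, the 256 kbps link speed and the two-flow example are hypothetical illustrations, not measurements from the lab.

```python
def classic_wfq_shares(precedences, link_kbps):
    """Split link_kbps among flows in proportion to (IP precedence + 1).

    Assumption: this is the textbook model of classic flow-based WFQ,
    not a measurement of any particular IOS release.
    """
    weights = [p + 1 for p in precedences]
    total = sum(weights)
    return [link_kbps * w / total for w in weights]


def equal_shares(flow_count, link_kbps):
    """Split link_kbps evenly, as happens when IP precedence is ignored."""
    return [link_kbps / flow_count] * flow_count


if __name__ == "__main__":
    flows = [0, 5]  # hypothetical: one precedence-0 flow, one precedence-5 flow
    print(classic_wfq_shares(flows, 256))   # precedence-weighted split
    print(equal_shares(len(flows), 256))    # precedence ignored
```

Under the first model the precedence-5 flow would get six times the bandwidth of the precedence-0 flow; under the second, both flows get the same share.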

Dual-stack PPP requires two separate sessions?

A while ago a senior Service Provider network designer told me that they have serious issues with IPv6 deployment because IPv6 requires a separate PPPoE session from the CPE devices, which significantly increases their licensing costs. The statement really surprised me; PPP was designed to be a multi-protocol environment and it’s very easy to configure IPv4 and IPv6 over a single PPP session in Cisco IOS. I think I might have tracked down the source of this “information” to the 6deploy IPv6 and DSL presentation, which states on Page 11 that “Separate PPP sessions are established between the Subscriber’s systems (or CPE) and the BBRAS for IPv6 and IPv4 traffic”.

Being too Cisco-centric, I cannot figure out whether this claim has any merit, as it clearly does not apply to Cisco IOS. Are there really boxes out there that are so stupid that they cannot run two protocols across a single PPPoE session?

“ip ospf mtu-ignore” Is a Dangerous Command

A while ago, I wrote about the problems caused by MTU mismatch between OSPF neighbors and warned that the ip ospf mtu-ignore interface configuration command that supposedly solves the problem could cause significant headaches.

These are the router configurations I’ve used to generate the problem described in this blog post.

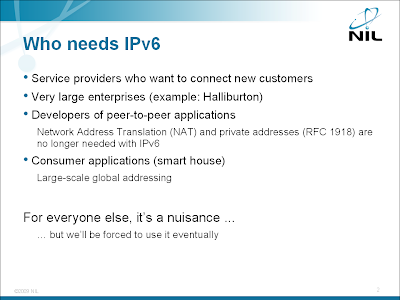

Who Needs IPv6?

One of the most common questions asked by my enterprise customers is “Who needs IPv6?” Since IPv6 does not add any significant new functionality (apart from larger address space), you can’t gain much by deploying it in an enterprise network … unless you’re huge enough that the private IPv4 address space (RFC 1918) becomes too confining for you. A good case study is Halliburton; you’ll find the details in Global IPv6 Strategies: From Business Analysis to Operational Planning book (my review).

Detect short bursts with EEM

Last week I described how you can use EEM to detect long-term interface congestion, which could indicate a denial-of-service attack. The mechanism I used (the averaged interface load) is pretty slow; even with the lowest possible load-interval value (30 seconds), it takes almost a minute to detect a DoS attack (see below).

If you want to detect outbound bursts, you can do better: you can monitor the increase in the number of output drops over a short period of time.
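The underlying idea (compare successive readings of a cumulative drop counter and flag a large jump) can be sketched in a few lines of Python. This is only an illustration of the logic, not an EEM applet; the function name, the sample values and the threshold are hypothetical.

```python
def detect_drop_bursts(samples, threshold):
    """Return the indices of polls where output drops grew by more than threshold.

    samples   -- cumulative output-drop counter readings, one per polling interval
    threshold -- maximum acceptable increase between two consecutive polls

    Assumption: the counter is never cleared between polls; a counter reset
    would show up as a negative increase and is simply ignored here.
    """
    bursts = []
    for i in range(1, len(samples)):
        increase = samples[i] - samples[i - 1]
        if increase > threshold:
            bursts.append(i)
    return bursts


if __name__ == "__main__":
    # Hypothetical readings taken at a fixed short interval
    readings = [0, 2, 3, 150, 152]
    print(detect_drop_bursts(readings, threshold=100))
```

Because the check looks at the increment per polling interval rather than a long-term average, the detection delay is bounded by the polling interval you choose.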

HQF: intra-class fair queuing

Continuing from my first excursion into the brave new world of HQF, I wanted to check how well the intra-class fair queuing works. I’ve started with the same testbed and router configurations as before and configured the following policy-map on the WAN interface:

    policy-map WAN
     class P5001
      bandwidth percent 20
      fair-queue
     class P5003
      bandwidth percent 30
     class class-default
      fair-queue

The test used this background load:

| Class | Background load |
|---|---|
| P5001 | 10 parallel TCP sessions |
| P5003 | 1500 kbps UDP flood |
| class-default | 1500 kbps UDP flood |

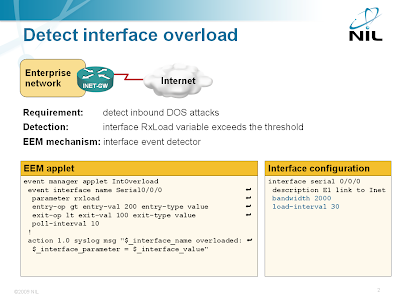

Detect DoS Attacks with EEM

Someone sent me an interesting question a while ago: “is it possible to detect DOS flooding with an EEM applet?” Of course it is (assuming the DoS attack results in a very high load on the Internet-facing interface), and the best option is the EEM interface event detector.

Detecting interface overload with EEM

The interface event detector is more user-friendly than the SNMP event detector. You can specify the interface name and parameter name in the interface event detector; with the SNMP event detector you have to specify an SNMP object identifier (OID). The interface event detector stores the interface name, the measured parameter name and its value in three convenient environment variables that you can use to generate syslog messages or alert the operators via e-mail.

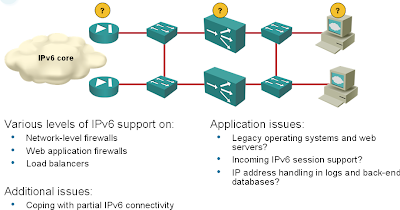

IPv6 in the Data Center: is Cisco ready?

With Cisco’s recent push into the Data Center environment and all the (not so very unreasonable) fuss around IPv4 address depletion and the imminent need for IPv6, I wanted to check whether an all-Cisco shop could take the first step: deploy IPv6 on Internet-facing production servers. If you follow the various design guidelines, your setup will have at least the following elements (and I bet someone from Cisco has already told you that you also need an XML firewall, Ironport and a WAAS appliance):

Now let’s see how well these boxes support IPv6.

I’m describing the Data Center IPv6 deployment issues in the Enterprise IPv6 Deployment workshop. The diagram above was taken straight from the workshop materials.

First HQF impressions: excellent job

Several readers told me that the Hierarchical Queuing Framework introduced in IOS releases 12.4(20)T and 15.0 (why do I always have the urge to write 12.5?) works much better than CB-WFQ. After spending several hours trying to break HQF, I have to concur with them: Cisco’s engineers did a splendid job. However, the HQF behavior might be slightly counterintuitive to those that became too familiar with CB-WFQ.

Solution: Bandwidth+Police actions in CB-WFQ

Most of the respondents to last week’s challenge got it almost right. The minor (common) error was the assumption that police rate percent 50 would result in a TCP session getting 50% of the bandwidth. Eyal got that right: the TCP throughput is always significantly lower than that due to frequent drops caused by the low burst sizes assumed by the police command and the resulting TCP restarts (the most I was able to push through was around 90 kbps; half of the bandwidth would be 128 kbps).

ITU: Grabbing a Piece of the IPv6 Pie?

ITU (the organization formerly known as CCITT) is having a bit of a relevance problem these days: its flagship technological achievements, including X.25, ISDN, ATM, and SDH, are dead or headed toward oblivion … and a former pariah, a group of geeks, is stealing the show and rolling out the Internet. No wonder their bureaucrats are having a hard time figuring out how to justify their existence. For years they’ve been lamenting how much they’ve contributed to the Internet (highly recommended reading for Monty Python fans) and how their precious contributions were unacknowledged. Now they came forth with a “wonderful” idea: the history of IPv4 address allocation proves that the wealthy nations and early adopters managed to grab disproportionate parts of the IPv4 address space (well, that’s true), so they made it their mission to protect the poor and underdeveloped countries in the brave new IPv6 world. In short, they want to become an independent address allocation entity (RIR). It looks like another worldwide bureaucracy is precisely what we need on top of all the other problems we have with IPv6 deployment.

Challenge: CB-WFQ Bandwidth+Police behavior

I have to admit I was somewhat surprised by the lab test results I’ve published in my previous CB-WFQ post. It looks like we’ve been fed misleading information about (classic) CB-WFQ behavior for years.

Don’t tell me that things are completely different with HQF implemented in IOS releases 12.4(late)T and 15.0. I know that … but 95+% of the installed base do not use those releases.

Let’s see whether you can figure out what my next lab test results showed. I’ve been running three parallel TTCP sessions on ports 5001, 5002 and 5003 across a 256 kbit point-to-point link. Here’s the relevant part of my router configuration:

Off-topic: universal engineers

Decades ago, when I was still in high school and working on a programming project during the summer break, an IT old-timer gave me the following bit of advice: “Remember, God created professions so that everyone could do the job he’s qualified to do”. It took me years before I understood what he had been trying to tell me, but this seems to be an industry-wide disease. Judging by some of the e-mails I receive, a lot of people who are proficient in other IT specialties think they can configure routers with a little help from good uncle Google and free support from fellow bloggers.

It seems the “ability” of a “generic” IT employee to tackle any problem somewhat related to IT is also unique to our industry. Last week a woodworker was installing my kitchen and flatly refused to connect the electric cable of the ceramic cooktop to the wall outlet, citing potential liabilities (please remember: I don’t live in the US but in Central Europe). An HTML programmer asked to configure the enterprise firewall might not be so reluctant. Why do you think some people in our industry believe they are universal engineers?

CB-WFQ misconceptions

Reading various documents describing Class-Based Weighted-Fair-Queueing (CB-WFQ) one gets the impression that the following configuration …

    class-map match-all High
     match access-group name High
    !
    policy-map WAN
     class High
      bandwidth percent 50
    !
    interface Serial0/1/0
     bandwidth 256
     service-policy output WAN
    !
    ip access-list extended High
     permit ip any host 10.0.3.1
     permit ip host 10.0.3.1 any

… allocates 128 kbps to the traffic to/from IP host 10.0.3.1 and distributes the remaining 128 kbps fairly between conversations in the default class.
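The arithmetic behind that impression is easy to write down. The Python sketch below models only the advertised allocation, which is not necessarily what the router does; the function name and the number of default-class conversations in the example are hypothetical.

```python
def advertised_cbwfq_allocation(link_kbps, class_percent, default_flows):
    """Return (reserved_kbps, per_default_flow_kbps) under the advertised model.

    Assumption: the class gets exactly class_percent of the link and the
    remainder is split evenly among the default-class conversations.
    """
    reserved = link_kbps * class_percent / 100
    remaining = link_kbps - reserved
    per_flow = remaining / default_flows if default_flows else 0.0
    return reserved, per_flow


if __name__ == "__main__":
    # 256 kbps link and "bandwidth percent 50" from the configuration above;
    # four default-class conversations is a made-up example value.
    print(advertised_cbwfq_allocation(256, 50, 4))
```

With the values from the configuration, the model reserves 128 kbps for class High and leaves 128 kbps to be divided among whatever conversations land in the default class.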

I am overly familiar with weighted fair queuing (I was developing QoS training for Cisco when WFQ just left the drawing board) and was thus always wondering how they manage to implement that behavior with WFQ structures. A comment made by Petr Lapukhov re-triggered my curiosity and prompted me to do some actual lab tests.

The answer is simple: CB-WFQ does not work as advertised.

Book review: Foundation of Green IT

If you want to understand the real impact of the recent Data Center hype without getting pulled into the technology morass the vendors so copiously spread in their white papers, read the Foundation of Green IT book published by Prentice Hall. Its author, Marty Poniatowski, uses two case studies to illustrate the enormous savings that can be realized through server and storage consolidation. I loved the first half of the book: the author avoids the technology issues (I loved the introduction to RAID: “I do not cover RAID background … the Internet has a wealth of information on RAID”) and uses real-life data gathered in actual projects to illustrate the savings. Each case study has several chapters, ranging from starting point discovery through implementation plans and ROI analysis; exactly what you need if you’re considering going down the data center redesign path. The “Desktop Virtualization” and “Data Replication and Disk Technology Advancements” chapters are thrown in for good measure.

The author makes the server and storage consolidation case studies even more interesting by describing actual products/solutions and inserting screenshots of actual reports throughout the text. Not surprisingly, he’s describing what he knows best: HP servers, EMC storage and VMware virtualization; a clear indication of how far Cisco has to go to win the hearts and minds of the data center market.