Category: Azure

Public Cloud Networking Hands-On Exercises

I got this request earlier today from someone who just missed the opportunity to buy the ipSpace.net subscription (or so he claims):

I am inspired to learn AWS advanced networking concepts and came across your website and webinar resources. But I cannot access it.

That is not exactly true. I wrote more than 4000 blog posts in the past, and some of them dealt with public cloud networking. There are also the free videos, some of them addressing public cloud networking.

Feedback: Microsoft Azure Networking

Numerous networking engineers found my cloud webinars (AWS, Azure) useful when preparing for a cloud migration project. Here’s what one of them wrote:

We are beginning to migrate some of our offerings to Microsoft Azure and I need to get up to speed with Azure products. I found this webinar very informative, and Ivan explained the concepts in a clear manner and easy to follow along. I would recommend watching these webinars and then read Microsoft documentation to get a thorough understanding.

Want to have some hands-on work sprinkled on top of that? You’ll find deployment examples in the Networking in Public Clouds GitHub repository.

Azure Networking Update Is Completed

I planned to write a few interesting blog posts last week, but then got sucked into updating the Azure Networking webinar. At least I got that completed 😊; the webinar materials now include these new Azure services:

I also added descriptions of numerous new features:

Azure Networking Update (Phase 1)

Last week I completed the first part of the annual Azure Networking update. The Azure Firewall section is already online; hope you’ll find it useful. I already have the materials for the Private Link and Gateway Load Balancer services, but haven’t decided whether to schedule another live session to cover them, or just create a short video.

Then there are a half-dozen smaller things I found while processing a year's worth of Azure networking news. You’ll find them (and links to documentation) in the New Azure Services and Features document.

Feedback: Azure Networking

When I started developing AWS- and Azure Networking webinars, I wondered whether they would make sense – after all, you can easily find tons of training offerings focused on public cloud services.

However, it looks like most of those materials focus on developers (no wonder – they are the most significant audience), with little thought being given to the needs of network engineers… at least according to the feedback left by one of the ipSpace.net subscribers.

Feedback: Microsoft Azure Networking

Azure and AWS have decent documentation (I always found it relatively easy to figure out what they’re doing), but what they implemented is sometimes so far away from what we’re used to that it’s hard to bridge the gap. Here’s how Olle Wilhelmsson solved that challenge:

I would just like to send a huge thank you, I’ve been a fan of your appearances on tech field day as a voice of reason, and different podcasts all around. Happy to finally be able to contribute and purchase an IPspace subscription, and was not disappointed.

This series on Azure networking was fantastic, it’s been frustrating to find any kind of good material on this topic. Even if Microsofts documentation is generally good, they really don’t have any resources to compare it to “regular” networking in physical equipment. So just a huge thank you, this has definitely saved me countless hours of reading and googling questions!

Microsoft Azure: Remember Exchange Server?

Recently I joked there’s a significant difference between the way AWS and Azure launch features:

- AWS launches a production-ready feature that you can consume the next day.

- Azure launches a preview that might work in 6 months.

Those with long enough memories shouldn’t be surprised. It’s not the first time Microsoft has used the same tactics.

Relative Speed of Public Cloud Orchestration Systems

When I was complaining about the speed (or lack thereof) of the Azure orchestration system, someone replied “I tried to do $somethingComplicated on AWS and it also took forever.”

Following the “opinions are great, data is better” mantra (as opposed to “never let facts get in the way of a good story” supposedly practiced by some podcasters), I decided to do a short experiment: create a very similar environment with Azure and AWS.

I took a simple Terraform deployment configuration for AWS and Azure. Both included a virtual network, two subnets, a route table, a packet filter, and a VM with a public IP address. Here are the observed times:

Hands-On: Azure Route Server

TL&DR: Azure Route Server works as advertised. Setting it up is excruciatingly slow. You might want to start the process just before taking a long lunch break.

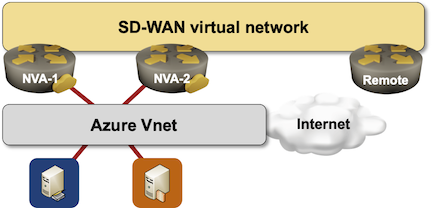

I decided to take Azure Route Server for a ride. Simple setup: two Network Virtual Appliance (NVA) instances running Quagga to advertise a single prefix (just to see how multipathing works).

Here’s the diagram of what I set up:

Azure Route Server: Behind the Scenes

Last week I described the challenges Azure Route Server is supposed to solve. Now let’s dive deeper into how it’s implemented and what those implementation details mean for your design.

The whole thing looks relatively simple:

Azure Route Server: The Challenge

Imagine you decided to deploy an SD-WAN (or DMVPN) network and make an Azure region one of the sites in the new network because you already deployed some workloads in that region and would like to replace the VPN connectivity you’re using today with the new shiny expensive gadget.

Everyone told you to deploy two SD-WAN instances in the public cloud virtual network to be redundant, so this is what you deploy:

Why Is Public Cloud Networking So Different?

A while ago (eons before AWS introduced Gateway Load Balancer) I discussed the intricacies of AWS and Azure networking with a very smart engineer working for a security appliance vendor, and he said something along the lines of “it shows these things were designed by software developers – they have no idea how networks should work.”

In reality, at least some aspects of public cloud networking come closer to the original ideas of how IP and data-link layers should fit together than today’s flat earth theories, so he probably wanted to say “they make it so hard for me to insert my virtual appliance into their network.”

Renumbering Public Cloud Address Space

Got this question from one of the networking engineers “blessed” with rampant clueless-rush-to-the-cloud.

I plan to peer multiple VNet from different regions. The problem is that there is not any consistent deployment in regards to the private IP subnets used on each VNet to the point I found several of them using public IP blocks as private IP ranges. As far as I recall, in Azure we can’t re-ip the VNets as the resource will be deleted so I don’t see any other option than use NAT from offending VNet subnets to use my internal RFC1918 IPv4 range. Do you have a better idea?

The way I understand Azure, while you COULD have any address range configured as VNet CIDR block, you MUST have non-overlapping address ranges for VNet peering.
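
The non-overlap requirement is easy to check before you try to peer anything. Here is a minimal sketch using Python's standard `ipaddress` module. The VNet names and address ranges are hypothetical examples (not taken from the reader's environment), and the code assumes IPv4-only address ranges. It flags VNet pairs that overlap and VNets that sit outside the RFC 1918 blocks:

```python
import ipaddress
from itertools import combinations

# The three RFC 1918 private IPv4 blocks
RFC1918_BLOCKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def find_peering_conflicts(vnets):
    """Return (name_a, name_b) pairs whose address ranges overlap."""
    conflicts = []
    for (name_a, cidr_a), (name_b, cidr_b) in combinations(vnets.items(), 2):
        net_a = ipaddress.ip_network(cidr_a)
        net_b = ipaddress.ip_network(cidr_b)
        if net_a.overlaps(net_b):
            conflicts.append((name_a, name_b))
    return conflicts


def is_rfc1918(cidr):
    """True if the IPv4 range lies entirely within one RFC 1918 block."""
    net = ipaddress.ip_network(cidr)
    return any(net.subnet_of(block) for block in RFC1918_BLOCKS)


# Hypothetical inventory: VNet name -> address range (assumption for illustration)
vnets = {
    "vnet-west": "10.1.0.0/16",
    "vnet-east": "10.1.128.0/17",      # overlaps vnet-west
    "vnet-legacy": "198.51.100.0/24",  # not RFC 1918 space
}

overlapping = find_peering_conflicts(vnets)
non_private = [name for name, cidr in vnets.items() if not is_rfc1918(cidr)]

print("Overlapping pairs:", overlapping)
print("VNets outside RFC 1918 space:", non_private)
```

A VNet using public address space shows up only in the second list; it blocks peering only if it also appears in an overlapping pair.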

EVPN Control Plane in Infrastructure Cloud Networking

One of my readers sent me this question (probably after stumbling upon a remark I made in the AWS Networking webinar):

You had mentioned that AWS is probably not using EVPN for their overlay control-plane because it doesn’t work for their scale. Can you elaborate please? I’m going through an EVPN PoC and curious to learn more.

It’s safe to assume AWS uses some sort of overlay virtual networking (like every other sane large-scale cloud provider). We don’t know any details; AWS never felt the need to use conferences as recruitment drives, and what little they told us at re:Invent described the system mostly from the customer perspective.

Which Public Cloud Should I Master First?

I got a question along these lines from a friend of mine:

Google recently announced a huge data center build in country to open new GCP regions. Does that mean I should invest into mastering GCP or should I focus on some other public cloud platform?

As always, the right answer is “it depends”, for example: