Why Can't We Have Good Documentation

Daniel Dib asked a sad question on LinkedIn:

Where did all the great documentation go?

In more detail:

There was a time when documentation answered almost all questions:

- What is the thing?

- What does the thing do?

- Why would you use the thing?

- How do you configure the thing?

I’ve seen the same thing happening in training, and here’s my cynical TL&DR answer: because the managers of the documentation/training departments don’t understand the true value of what they’re producing and thus cannot justify a decent budget to make it happen.

netlab: Embed Configuration Templates in a Lab Topology File

A few days ago, I described how you can use the new config.inline functionality to apply additional configuration commands to individual devices in a netlab-powered lab.

However, sometimes you have to apply the same set of commands to several devices. Although you could use device groups to do that, netlab release 25.09 offers a much better mechanism: you can embed custom configuration templates in the lab topology file.

netlab 25.10: Cisco 8000v, Nicer Graphs

netlab release 25.10 includes:

- Support for the container version of the Cisco 8000v emulator (finally a reasonable IOS-XR platform)

- Support for vJunosEVO (vPTX) release 24+ (it needs UEFI BIOS), thanks to Aleksey Popov and Stefano Sasso

- Wildcards or regular expressions in group or as_list members

- Graphing improvements

- OSPFv2/v3 on OpenBSD thanks to Remi Locherer

- OSPFv2/v3 interface parameters on IOS XR

You’ll find more details in the release notes.

Troubleshooting Multi-Pod EVPN: Overview

An engineer reading my multi-pod EVPN article asked an interesting question:

How do you handle troubleshooting when VTEPs cannot reach each other across pods?

The ancient Romans already knew the rough answer: divide and conquer.

In this particular case, the “divide” part starts with a simple realization: VXLAN/EVPN is just another application running on top of IP.
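As a rough illustration of that first "divide" step, here is a minimal Python sketch. It assumes you already have some way to test IP reachability between VTEP addresses; the `probe` callable, the function names, the VTEP names, and the addresses are all hypothetical and not taken from the article. The idea is simply to check the underlay first and look at EVPN/VXLAN only when every VTEP can reach every other VTEP over plain IP.

```python
from itertools import permutations
from typing import Callable, Dict, List, Tuple

# Hypothetical probe signature: probe(source_ip, destination_ip) returns True
# when an IP packet sourced from source_ip reaches destination_ip.
Probe = Callable[[str, str], bool]


def failed_underlay_paths(vteps: Dict[str, str], probe: Probe) -> List[Tuple[str, str]]:
    """Return the (source, destination) VTEP name pairs whose IP probe failed."""
    return [
        (src, dst)
        for src, dst in permutations(vteps, 2)
        if not probe(vteps[src], vteps[dst])
    ]


def where_to_look(vteps: Dict[str, str], probe: Probe) -> str:
    """Decide which half of the problem to investigate first."""
    failed = failed_underlay_paths(vteps, probe)
    if failed:
        pairs = ", ".join(f"{src}->{dst}" for src, dst in failed)
        return f"underlay: no IP reachability for {pairs}"
    return "overlay: VTEP addresses are reachable, check EVPN/VXLAN next"


# Made-up example: one VTEP per pod, and a fake probe that drops
# everything sent toward the pod-B VTEP.
vteps = {"a_leaf": "10.0.0.1", "b_leaf": "10.0.1.1"}


def fake_probe(src_ip: str, dst_ip: str) -> bool:
    return dst_ip != "10.0.1.1"


print(where_to_look(vteps, fake_probe))
```

In a real lab, the probe would be a ping sourced from the VTEP loopback on each device; the sketch only captures the order of the checks.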

Changes in ipSpace.net RSS Feeds

TL&DR: You shouldn’t see any immediate impact of this change, but I’ll eventually clean up old stuff, so you might want to check the URLs if you use RSS/Atom feeds to get the list of ipSpace.net blog posts or podcast episodes. The (hopefully) final URLs are listed on this page.

Executive Summary: I cleaned up the whole ipSpace.net RSS/Atom feeds system. The script that generated the content for various feeds has been replaced with static Hugo-generated RSS/Atom feeds. I added redirects for all the old stuff I could find (including ioshints.blogspot.com), but I could have missed something. The only defunct feed is the free content feed (which hasn’t changed in a while, anyway), as it required scanning the documents database. You can use this page to find the (ever-increasing) free content.

And now for the real story ;)

Working for a Vendor with David Gee

When I first met David Gee, he worked for a large system integrator. A few years later, he moved to a networking vendor, worked for a few of them, then for a software vendor, and finally decided to start his own system integration business.

Obviously, I wanted to know what drove him to make those changes, what lessons he learned working in various parts of the networking industry, and what (looking back with perfect hindsight) he would have changed.

Spaghetti Pasta Networking

Here’s an interesting data point in case you ever wondered why things are getting slower, even though the CPU performance is supposedly increasing. Albert Siersema sent me a link to a confusing implementation of spaghetti networking.

It looks like they’re trying to solve the “how do I connect two containers (network namespaces) without having the privilege to create a vEth pair” challenge with plenty of chewing gum and duct tape (tap interfaces) 🤦‍♂️

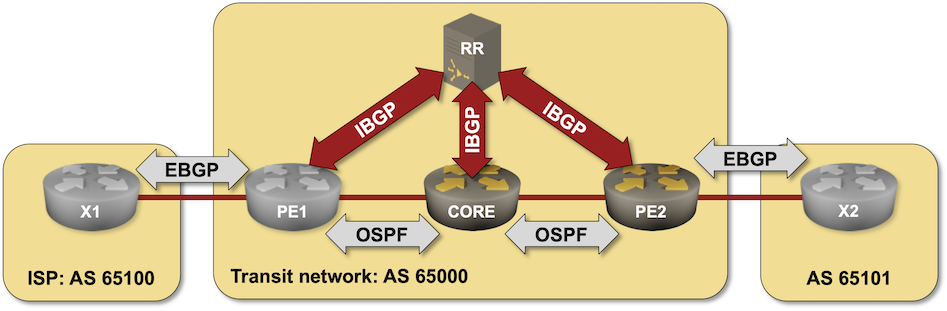

Using BIRD BGP Daemon as a BGP Route Reflector

In this challenge lab, you’ll configure a BIRD daemon running in a container as a BGP route reflector in a transit autonomous system. You should be familiar with the configuration concepts if you completed the IBGP lab exercises, but will probably struggle with BIRD configuration if you’re not familiar with it.

Click here to start the lab in your browser using GitHub Codespaces (or set up your own lab infrastructure). After starting the lab environment, change the directory to challenge/01-bird-rr, build the BIRD container with netlab clab build bird if needed, and execute netlab up.

netlab: Applying Simple Configuration Changes

For years, netlab has had custom configuration templates that can be used to deploy custom configurations onto lab devices. The custom configuration templates can be Jinja2 templates, and you can create different templates (for the same functionality) for different platforms. However, using that functionality if you need an extra command or two makes approximately as much sense as using a Kubernetes cluster to deploy a BusyBox container.

netlab release 25.09 solves that problem with the files plugin and the inline config functionality.

EVPN Designs: Multi-Pod Fabrics

In EVPN Designs: Layer-3 Inter-AS Option A, I described the simplest multi-site design in which the WAN edge routers exchange IP routes in individual VRFs, resulting in two isolated layer-2 fabrics connected with a layer-3 link.

Today, let’s explore a design that will excite the True Believers in end-to-end layer-2 networks: two EVPN fabrics connected with an EBGP session to form a unified, larger EVPN fabric. We’ll use the same “physical” topology as the previous example; the only modification is that the WA-WB link is now part of the underlay IP network.