Blog Posts in June 2021

Thank You for Everything Irena, We'll Miss You Badly

In February 2018, Irena Marčetič joined ipSpace.net to fix the (lack of) marketing. After getting that done, she quickly took over most of sales, support, logistics, content production, guest speaker coordination… If you needed anything from us in the last few years, it was probably Irena answering your requests and helping you out.

She did a fantastic job and transformed ipSpace.net from Ivan and an occasional guest speaker to a finely tuned machine producing several hours of new content every month. She organized our courses, worked with guest speakers, podcast guests and hosts, participated in every guest speaker webinar to take notes for the editing process, managed content editing, watched every single video we created before it was published to make sure the audio was of acceptable quality and all the bloopers were removed… while answering crazy emails like “I need you to fill in this Excel spreadsheet with your company data because I cannot copy-paste that information from your web site myself” and solving whatever challenges our customers faced.

Unfortunately, Irena decided to go back to pure marketing and is leaving ipSpace.net today. Thanks a million for all the great work – we’ll badly miss you.

Webinars in the First Half of 2021

It’s time for another “this is what we did in the last six months” blog post. Instead of writing another wall of text, I just updated the one I published in early January. Here are the highlights:

- Completed webinars: Kubernetes Networking Deep Dive, Cisco ACI Deep Dive

- Totally unplanned: AI/ML in Networking

- New content in existing webinars: NSX-T Federation, Leaf-and-Spine routing designs, deep dive into reliability theory, AWS Gateway Load Balancer and Network Firewall, Azure Virtual WAN, multi-vendor data center EVPN deployments, the networking part of the Introduction to Cloud Computing webinar.

- Updated content: configuration and state management automation tools.

- Work-in-progress: Network Automation Concepts

That’s about it for the first half of 2021. I’ll be back in early September.

Worth Reading: Blog About What You've Struggled With

Some of the best blog posts I’ve read described a solution (and the process to get there) someone reached after a lot of struggle.

As always, Julia Evans does a wonderful job explaining that in exquisite detail.

Worth Reading: How to Miss a Deadline

TL&DR: If you’re about to miss a deadline, be honest about it, and tell everyone well in advance.

I wish some of the project managers I had the “privilege” of working with would use 1% of that advice.

Video: Typical Large-Scale Bridging Use Cases

In the previous video in the Switching, Routing and Bridging section of the How Networks Really Work webinar we compared transparent bridging with IP routing. Not surprisingly (given my well-known bias toward stable solutions) I recommended using IP routing as much as possible, but there are still people out there pushing large-scale transparent bridging solutions.

In today’s video we’ll look at some of the supposed use cases and stable solutions you could use instead of stretching a virtual thick yellow cable halfway across a continent.

Stretched VLANs: What Problem Are You Trying to Solve?

One of the ipSpace.net subscribers sent me this interesting question:

I am the network administrator of a small data center network that spans 2 buildings. The main building has a pair of L2/L3 10G core switches. The second building has a stack of access switches connected to the main building with 10G uplinks. This secondary datacenter has got some ESX hosts and NAS for remote backup and some VM for development and testing, but all the Internet connection, firewall and server are in the main building.

There is no routing in the secondary building and most of the VLANs are stretched. Do you think I must change that (bringing routing to the secondary datacenter), or keep it simple like it is now?

As always, it depends. This time, it depends on what problem you are trying to solve.

Why Do We Need BGP-LS?

One of my readers sent me this interesting question:

I understand that an SDN controller needs network topology information to build traffic engineering paths with PCE/PCEP… but why would we use BGP-LS to extract the network topology information? Why can’t we run OSPF with controller by simulating a software based OSPF instance in every area to get topology view?

There are several reasons to use BGP-LS:

Unexpected Interactions Between OSPF and BGP

It started with an interesting question tweeted by @pilgrimdave81:

I’ve seen on Cisco NX-OS that it’s preferring a (ospf->bgp) locally redistributed route over a learned EBGP route, until/unless you clear the route, then it correctly prefers the learned BGP one. Seems to be just ooo but don’t remember this being an issue?

Ignoring the “why would you get the same route over OSPF and EBGP, and why would you redistribute an alternate copy of a route you’re getting over EBGP into BGP” aspect, Peter Palúch wrote a detailed explanation of what’s going on and allowed me to copy it into a blog post to make it more permanent:

Comparing EVPN with Flood-and-Learn Fabrics

One of the ipSpace.net subscribers sent me this question after watching the EVPN Technical Deep Dive webinar:

Do you have a writeup that compares and contrasts the hardware resource utilization when one uses flood-and-learn or BGP EVPN in a leaf-and-spine network?

I don’t… so let’s fix that omission. In this blog post we’ll focus on pure layer-2 forwarding (aka bridging); a follow-up blog post will describe the implications of adding EVPN IP functionality.

Worth Reading: Machine Learning Deserves Better Than This

This article is totally unrelated to networking, and describes how medical researchers misuse machine learning hype to publish two-column snake oil. Any correlation with AI/ML in networking is purely coincidental.

Worth Reading: Is Your Consultant a Parasite?

Stumbled upon a must-read article: Is Your Consultant a Parasite?

For an even more snarky take on the subject, enjoy the Ten basic rules for dealing with strategy consultants by Simon Wardley.

Video: Comparing Routing and Bridging

After covering the basics of transparent Ethernet bridging and IP routing, we’re finally ready to compare the two. Enjoy the ride ;)

Questions about BGP in the Data Center (with a Whiff of SRv6)

Henk Smit left numerous questions in a comment referring to the Rethinking BGP in the Data Center presentation by Russ White:

In Russ White’s presentation, he listed a few requirements to compare BGP, IS-IS and OSPF. Prefix distribution, filtering, TE, tagging, vendor-support, autoconfig and topology visibility. The one thing I was missing was: scalability.

I noticed the same thing. We kept hearing how BGP scales better than link-state protocols (no doubt about that) and how you couldn’t possibly build a large data center fabric with a link-state protocol… and yet this aspect wasn’t even mentioned.

… updated on Friday, June 18, 2021 15:46 UTC

Deploying Plug-and-Pray Software in Large-Scale Networks

One of my readers sent me a sad story describing how Chromium service discovery broke a large multicast-enabled network.

The last couple of weeks found me helping a customer trying to find and resolve a very hard to find “network performance” issue. In the end it turned out to be a combination of ill conceived application nonsense and a setup with a too large blast radius/failure domain/fate sharing. The latter most probably based upon very valid decisions in the past (business needs, uniformity of configuration and management).

… updated on Monday, July 12, 2021 17:46 UTC

OSPF Inter-Process Route Selection

The traditional wisdom claimed that a Cisco IOS router cannot compare routes between different OSPF routing processes. The only parameter to consider when comparing routes coming from different routing processes is the admin distance, and unless you change the default admin distance for one of the processes, the results will be random.

Following Vladislav’s comment to a decade-old blog post, I decided to do a quick test, and found out that code changes tend to invalidate traditional wisdom. OSPF inter-process route selection is no exception. That’s why it’s so stupid to rely on undefined behavior in your network design, memorize such trivia, test the memorization capabilities in certification labs, or read decades-old blog posts describing arcane behavior.

ipSpace.net Subscription for System Administrators

One of our subscribers sent me this question:

I am a system administrator working primarily on server/storage virtualization. How would you recommend I take full advantage of the subscription while not being in networking full-time?

Let’s start with the webinars focused on technologies and fundamentals:

- If you’re interested in networking fundamentals, go through the first part of How Networks Really Work — stop when you feel it’s turning into a deep dive.

- As a sysadmin, you probably work within a data center environment. Data Center Infrastructure for Networking Engineers is another fundamentals-focused webinar worth exploring.

- Involved in multi-site DC deployments? Check out the Data Center Interconnects and Designing Active-Active and Disaster Recovery Data Centers.

- On the storage side, there’s Hyper-Converged Infrastructure Deep Dive and The Network Impact of NVMe over Fabrics (NVMe-oF).

Intricate AWS IPv6 Direct Connect Challenges

In his Where AWS IPv6 networking fails blog post, Jason Lavoie documents an intricate consequence of 2-pizza-teams not talking to one another: it’s really hard to get IPv6 in AWS VPC working with Transit Gateway and Direct Connect in a large-scale multi-account environment due to the way IPv6 prefixes are propagated from VPCs to Direct Connect Gateway.

It’s one of those IPv6-only little details that you could never spot before stumbling on it in a real-life deployment… and to make it worse, it works well in IPv4 if you did proper address planning (which you can’t in IPv6).

Worth Reading: The Lost Designer

Scott Berkun published another interesting article: The Lost Designer. As always, replace designer with networking engineer and enjoy.

Lessons Learned: Technology Still Matters

In June 2020, a friend asked me to do a short presentation on lessons learned during my 35 years as a networking engineer. It went reasonably well, so I decided to turn it into a webinar, starting with “regardless of what the disruptive marketers tell you, technology still matters.”

… updated on Monday, July 12, 2021 18:00 UTC

Unnumbered Ethernet Interfaces, DHCP Edition

Last week we explored the basics of unnumbered IPv4 Ethernet interfaces, and how you could use them to save IPv4 address space in routed access networks. I also mentioned that you could simplify the head-end router configuration if you’re using DHCP instead of per-host static routes.

Obviously you’d need a smart DHCP server/relay implementation to make this work. A simplistic local DHCP server would allocate an IP address to a client requesting one, send a response and move on. Likewise, a DHCP relay would forward a DHCP request to a remote DHCP server (adding enough information to allow the DHCP server to select the desired DHCP pool) and forward its response to the client.
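To make the simplistic relay behavior a bit more tangible, here is a minimal Python sketch. It assumes the relay identifies itself with the relay agent address (`giaddr`) and that the server picks the pool whose prefix contains that address. The pool names, prefixes, and dictionary-based "packets" are hypothetical and only serve the illustration.

```python
import ipaddress

# Hypothetical address pools configured on the remote DHCP server.
POOLS = {
    "access-a": ipaddress.ip_network("10.1.0.0/24"),
    "access-b": ipaddress.ip_network("10.2.0.0/24"),
}

def relay_request(request, relay_address):
    """Simplistic relay: stamp the relay address (giaddr) and pass the request on."""
    relayed = dict(request)          # leave the client's request untouched
    relayed["giaddr"] = relay_address
    return relayed

def select_pool(request):
    """Simplistic server: pick the pool whose prefix contains giaddr."""
    giaddr = ipaddress.ip_address(request["giaddr"])
    for name, prefix in POOLS.items():
        if giaddr in prefix:
            return name
    return None                      # no matching pool, nothing to offer

# Hypothetical client request arriving at a relay with address 10.2.0.1.
request = {"op": "DISCOVER", "chaddr": "02:00:00:00:00:01"}
relayed = relay_request(request, "10.2.0.1")
print(select_pool(relayed))
```

Notice that neither function keeps any state or touches the routing table; both simply process the message and move on.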

Real-Life Network-as-a-Graph Examples

After reading the Everything Is a Graph blog post, Vadim Semenov sent me a long list of real-life examples (slightly edited):

I work in a big enterprise and in order to understand a real packet path across multiple offices via routers and firewalls (when mtr or traceroute don’t work – they do not show firewalls), I made OSPF network visualization based on LSDB output. The idea is quite simple – save information about LSA1 and LSA2 (LSA5 optionally) and that will be enough in order to build a graph (use show ip ospf database router/network on Cisco devices).
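The graph-building idea can be sketched in a few lines of Python. This is not Vadim's code, just a minimal illustration that assumes the router (type 1) and network (type 2) LSAs have already been parsed into dictionaries. The router IDs, link types, and the `net:` prefix for transit networks are hypothetical, and the sketch skips the two-way connectivity check a real SPF implementation would perform.

```python
from collections import defaultdict

# Hypothetical, already-parsed LSDB contents (not real device output).
# Router LSAs: router ID -> list of (link type, link ID).
router_lsas = {
    "10.0.0.1": [("p2p", "10.0.0.2"), ("transit", "192.168.1.3")],
    "10.0.0.2": [("p2p", "10.0.0.1"), ("transit", "192.168.1.3")],
    "10.0.0.3": [("transit", "192.168.1.3")],
}
# Network LSAs: link-state ID (DR interface address) -> attached router IDs.
network_lsas = {
    "192.168.1.3": ["10.0.0.1", "10.0.0.2", "10.0.0.3"],
}

def build_graph(router_lsas, network_lsas):
    """Build an undirected graph; transit networks become 'net:' nodes."""
    graph = defaultdict(set)
    for router, links in router_lsas.items():
        for link_type, link_id in links:
            if link_type == "p2p":
                neighbor = link_id              # link ID is the neighbor router ID
            elif link_type == "transit":
                neighbor = "net:" + link_id     # link ID points to a network LSA
            else:
                continue                        # ignore stub links in this sketch
            graph[router].add(neighbor)
            graph[neighbor].add(router)
    for link_id, attached in network_lsas.items():
        node = "net:" + link_id
        for router in attached:
            graph[node].add(router)
            graph[router].add(node)
    return graph

graph = build_graph(router_lsas, network_lsas)
for node in sorted(graph):
    print(node, "->", sorted(graph[node]))
```

Once you have the adjacency sets, feeding them into a graph library or a visualization tool is the easy part.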

Unequal-Cost Multipath with BGP DMZ Link Bandwidth

In the previous blog post in this series, I described why it’s (almost) impossible to implement unequal-cost multipathing for anycast services (multiple servers advertising the same IP address or range) with OSPF. Now let’s see how easy it is to solve the same challenge with BGP DMZ Link Bandwidth attribute.
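The underlying idea is that each path carries a bandwidth value and traffic is spread across the paths in proportion to those values. Here is a minimal sketch of that arithmetic, assuming two hypothetical next hops with made-up bandwidths; it says nothing about how a particular platform programs its forwarding hardware.

```python
from fractions import Fraction

def traffic_shares(link_bandwidth):
    """Return the share of traffic per next hop, proportional to bandwidth."""
    total = sum(link_bandwidth.values())
    return {nh: Fraction(bw, total) for nh, bw in link_bandwidth.items()}

# Hypothetical paths toward the same anycast prefix, bandwidth in Mbps.
paths = {"server-a": 10_000, "server-b": 5_000}
shares = traffic_shares(paths)
for next_hop, share in shares.items():
    print(f"{next_hop}: {share}")
```

With these numbers, the next hop with twice the bandwidth gets two-thirds of the traffic.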

I didn’t want to listen to the fan noise generated by my measly Intel NUC when simulating a full leaf-and-spine fabric, so I decided to implement a slightly smaller network:

Feedback: Azure Networking

When I started developing AWS- and Azure Networking webinars, I wondered whether they would make sense – after all, you can easily find tons of training offerings focused on public cloud services.

However, it looks like most of those materials focus on developers (no wonder – they are the most significant audience), with little thought being given to the needs of network engineers… at least according to the feedback left by one of the ipSpace.net subscribers.

Worth Reading: The Neuroscience of Busyness

In the Neuroscience of Busyness article, Cal Newport describes an interesting phenomenon: when solving problems, we tend to add components instead of removing them.

If that doesn’t describe a typical network (or protocol) design, I don’t know what does. At least now we have a scientific basis to justify our behavior ;)

Worth Reading: Switching to IP fabrics

Namex, an Italian IXP, decided to replace their existing peering fabric with a fully automated leaf-and-spine fabric using VXLAN and EVPN running on Cumulus Linux.

They documented the design, deployment process, and automation scripts they developed in an extensive blog post that’s well worth reading. Enjoy ;)

Video: Cisco SD-WAN Policy Design

In the final video in his Cisco SD-WAN webinar, David Penaloza discusses site ID assignments and policy processing order.

A carefully planned site scheme and ordered list of policy entries will save you complications and headaches when deploying the SD-WAN solution.

Routing Protocols: Use the Best Tool for the Job

When I wrote about my sample OSPF+BGP hands-on lab on LinkedIn, someone couldn’t resist asking:

I’m still wondering why people use two routing protocols and do not have clean redistribution points or tunnels.

Ignoring for the moment the fact that he missed the point of the blog post (completely), the idea of “using tunnels or redistribution points instead of two routing protocols” hints at the potential applicability of RFC 1925 rule 4.

Unnumbered Ethernet Interfaces

Imagine an Internet Service Provider offering Ethernet-based Internet access (aka everyone using fiber access, excluding people believing in Russian dolls). If they know how to spell security, they might be nervous about connecting numerous customers to the same multi-access network, but it seems they have only two ways to solve this challenge:

- Use private VLANs with proxy ARP on the head-end router, forcing the customer-to-customer traffic to pass through layer-3 forwarding on the head-end router.

- Use a separate routed interface with each customer, wasting three-quarters of their available IPv4 address space.

Is there a third option? Can’t we pretend Ethernet works in almost the same way as dialup and use unnumbered IPv4 interfaces?
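The three-quarters figure in the second option is easy to verify if we assume each customer gets a /30 subnet (the prefix length is my assumption, and the documentation prefix below is just a placeholder). Python's `ipaddress` module does the counting:

```python
import ipaddress

# Assumption: one /30 per customer-facing routed interface.
subnet = ipaddress.ip_network("192.0.2.0/30")

total = subnet.num_addresses            # network, two hosts, broadcast
usable = list(subnet.hosts())           # the two host addresses
customer_addresses = len(usable) - 1    # one host address goes to the router

wasted = (total - customer_addresses) / total
print(f"{total} addresses, {customer_addresses} for the customer, {wasted:.0%} wasted")
```

Only one of the four addresses ends up on the customer device; the network address, the broadcast address, and the router's own address are pure overhead.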

Single-Metric Unequal-Cost Multipathing Is Hard

A while ago, we discussed whether unequal-cost multipathing (UCMP) makes sense (TL&DR: rarely), and whether we could implement it in link-state routing protocols (TL&DR: yes). Even though we could modify OSPF or IS-IS to support UCMP, and Cisco IOS XR even implemented those changes (they are not exactly widely used), the results are… suboptimal.

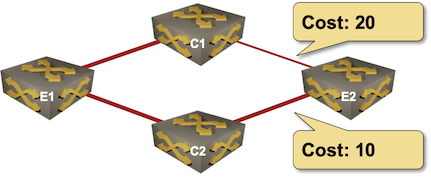

Imagine a simple network with four nodes, three equal-bandwidth links, and a link that has half the bandwidth of the other three: