… updated on Wednesday, November 18, 2020 15:42 UTC

Management, Control, and Data Planes in Network Devices and Systems

Every single network device (or a distributed system like QFabric) has to perform at least three distinct activities:

- Process the transit traffic (that’s why we buy them) in the data plane;

- Figure out what’s going on around it with the control plane protocols;

- Interact with its owner (or NMS) through the management plane.

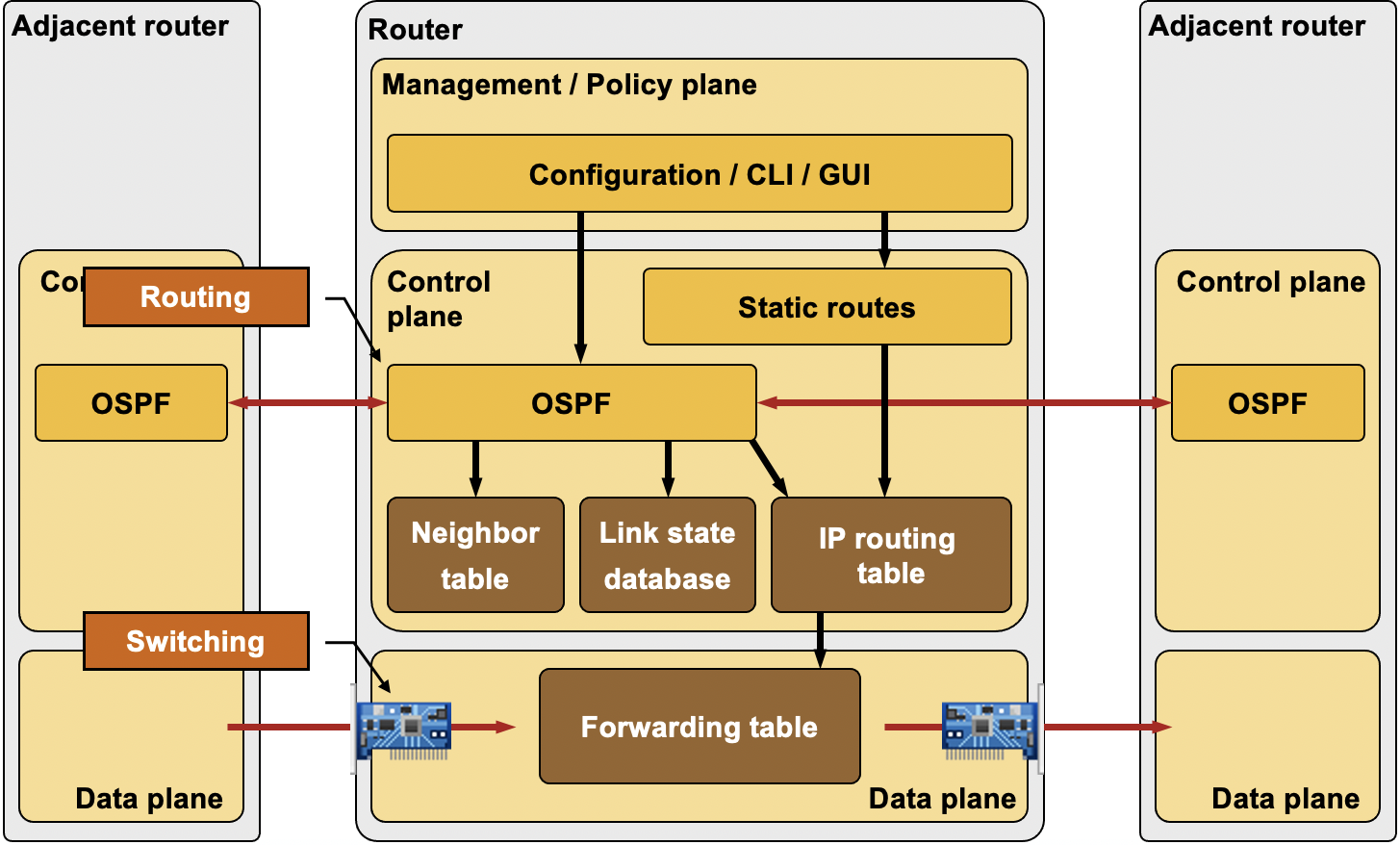

Routers are used as a typical example in every text describing the three planes of operation, so let’s stick to this time-honored tradition:

- Interfaces, IP subnets and routing protocols are configured through management plane protocols, ranging from CLI to NETCONF and the latest buzzword – northbound RESTful API;

- Router runs control plane routing protocols (OSPF, EIGRP, BGP …) to discover adjacent devices and the overall network topology (or reachability information in case of distance/path vector protocols);

- Router inserts the results of the control-plane protocols into Routing Information Base (RIB) and Forwarding Information Base (FIB). Data plane software or ASICs use FIB structures to forward the transit traffic.

- Management plane protocols like SNMP can be used to monitor the device operation, its performance, interface counters …
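The RIB-to-FIB step can be sketched in a few lines of Python. This is a minimal illustration, not how any particular router does it: the `ADMIN_DISTANCE` values, the `build_fib` and `lookup` helpers, and the sample routes are all assumptions made up for the example, and real FIBs use tries or TCAM rather than a linear scan.

```python
import ipaddress

# Illustrative protocol preferences (lower wins); real platforms define their own.
ADMIN_DISTANCE = {"connected": 0, "static": 1, "bgp": 20, "ospf": 110}


def build_fib(rib):
    """Keep one next hop per prefix: the route from the most preferred protocol."""
    best = {}
    for prefix, protocol, next_hop in rib:
        net = ipaddress.ip_network(prefix)
        current = best.get(net)
        if current is None or ADMIN_DISTANCE[protocol] < ADMIN_DISTANCE[current[0]]:
            best[net] = (protocol, next_hop)
    return {net: next_hop for net, (_protocol, next_hop) in best.items()}


def lookup(fib, destination):
    """Longest-prefix match: the most specific prefix covering the address wins."""
    addr = ipaddress.ip_address(destination)
    matches = [net for net in fib if addr in net]
    if not matches:
        return None  # no route; a real data plane would drop and signal an error
    return fib[max(matches, key=lambda net: net.prefixlen)]


# Hypothetical RIB: (prefix, source protocol, next hop)
rib = [
    ("10.0.0.0/8", "ospf", "192.0.2.1"),
    ("10.1.0.0/16", "bgp", "192.0.2.2"),
    ("10.1.0.0/16", "ospf", "192.0.2.3"),
    ("0.0.0.0/0", "static", "192.0.2.254"),
]
fib = build_fib(rib)
```

The control plane would run something like `build_fib` whenever the routing protocols report a change, while the data plane only ever calls `lookup`.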

Management, Control, and Data Planes in a Router

The management plane is pretty straightforward, so let’s focus on a few intricacies of the control and data planes.

We usually have routing protocols in mind when talking about control plane protocols, but in reality the control plane protocols perform numerous other functions, including:

- Interface state management (PPP, LACP);

- Connectivity management (BFD, CFM);

- Adjacent device discovery (hello mechanisms present in most routing protocols, ES-IS, ARP, IPv6 ND, UPnP SSDP);

- Topology or reachability information exchange (IP/IPv6 routing protocols, IS-IS in TRILL/SPB, STP);

- Service provisioning (RSVP for IntServ or MPLS/TE, UPnP SOAP calls).

Data plane should be focused on forwarding packets but is commonly burdened by other activities:

- NAT session creation and NAT table maintenance;

- Neighbor address gleaning (example: dynamic MAC address learning in bridging, IPv6 SAVI);

- NetFlow accounting (sFlow is cheap compared to NetFlow);

- ACL logging;

- Error signaling (ICMP).

Data plane forwarding is hopefully performed in dedicated hardware or in high-speed code (within the interrupt handler on low-end Cisco IOS routers), while the overhead activities usually happen on the device CPU, often in userspace processes. The switch from high-speed forwarding to user-mode processing is commonly called punting.

Typical Architectures

The management and control planes are typically implemented in a CPU, while the data plane could be implemented in numerous ways:

- Optimized code running on the same CPU;

- Code running on a dedicated CPU core (typical for high-speed packet switching on Linux servers);

- Code running on linecard CPUs (example: Cisco 7200);

- Dedicated processors (sometimes called NPUs);

- Switching hardware (ASICs);

- Switching hardware on numerous linecards.

Based on the capabilities of the switching hardware, a device might run some simple time-critical control-plane protocols (example: BFD) in hardware or on NPUs.

Processing of Inbound Control-Plane Packets

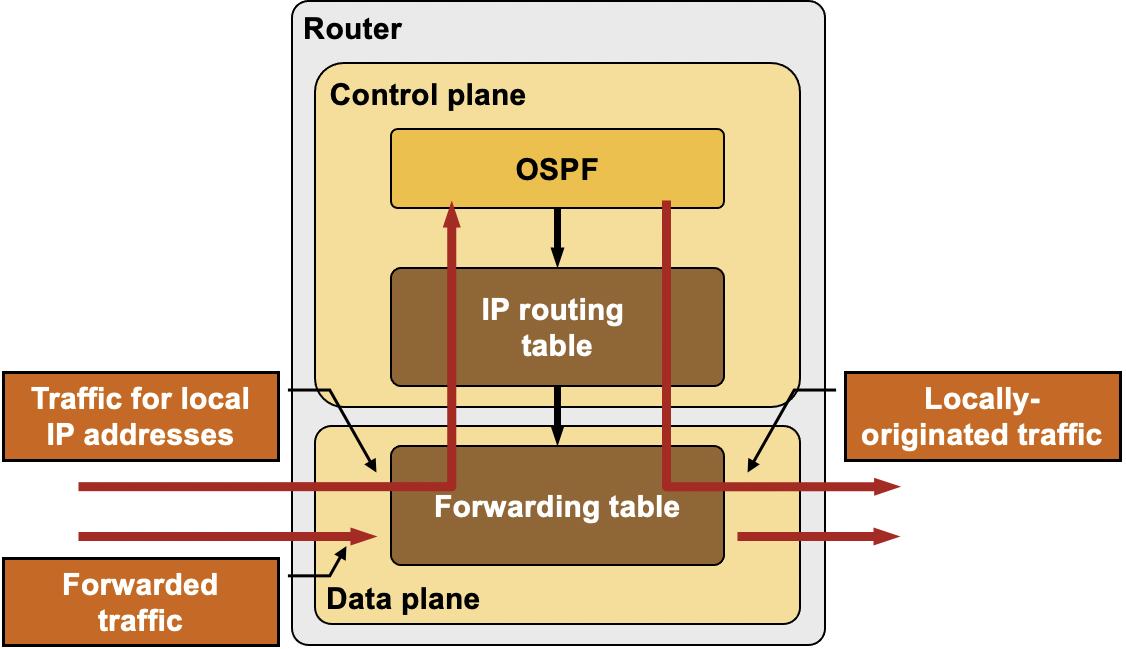

In most router implementations, the data plane receives and processes all inbound packets and selectively forwards packets destined for the router (for example, SSH traffic or routing protocol updates) or packets that need special processing (for example, IP datagrams with IP options or IP datagrams that have exceeded their TTL) to the control plane.
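The punt decision described above might look roughly like this. It is a sketch under stated assumptions: the `Packet` fields, the `LOCAL_ADDRESSES` set, and the `classify` function are hypothetical names invented for the example, and a real data plane checks many more conditions.

```python
from dataclasses import dataclass


@dataclass
class Packet:
    dst: str                      # destination IP address
    ttl: int                      # TTL as received on the inbound interface
    has_ip_options: bool = False  # True when the IP header carries options


# Hypothetical set of addresses owned by the router itself
LOCAL_ADDRESSES = {"192.0.2.1"}


def classify(packet, local_addresses=LOCAL_ADDRESSES):
    """Return 'punt' when the packet needs the control plane, else 'forward'."""
    if packet.dst in local_addresses:
        return "punt"  # destined for the router (SSH, routing protocol updates)
    if packet.has_ip_options:
        return "punt"  # IP options need special processing
    if packet.ttl <= 1:
        return "punt"  # TTL expires at this hop, so an ICMP error must be generated
    return "forward"   # plain transit traffic stays in the fast path
```

For example, `classify(Packet(dst="198.51.100.7", ttl=64))` stays in the fast path, while the same packet arriving with `ttl=1` gets punted.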

The control plane might pass outbound packets to the data plane, or use its own forwarding mechanisms to determine the outgoing interface and the next-hop router (for example, when using local policy routing).

Processing of Inbound and Outbound Control-Plane Packets

Regardless of the implementation details, it’s obvious the device CPU represents a significant bottleneck (in some cases the switch to CPU-based forwarding reduces performance by several orders of magnitude), which is the main reason one has to rate-limit ACL logging and protect the device CPU with Control Plane Protection features.
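Rate-limiting punted traffic is commonly done with some form of token bucket. The sketch below shows the general idea only; the `TokenBucket` class, its parameters, and the numbers are assumptions chosen for illustration and do not describe any vendor's Control Plane Protection implementation.

```python
class TokenBucket:
    """Admit at most `rate_pps` punted packets per second, with bursts up to `burst`."""

    def __init__(self, rate_pps, burst):
        self.rate = rate_pps
        self.burst = burst
        self.tokens = float(burst)  # start with a full bucket
        self.last = 0.0             # timestamp of the previous packet, in seconds

    def allow(self, now):
        """Return True if a packet arriving at time `now` may reach the CPU."""
        elapsed = now - self.last
        self.last = now
        # Refill according to elapsed time, never beyond the bucket size.
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the limit: drop instead of punting


# Eight packets arrive back to back; only the burst allowance gets through.
limiter = TokenBucket(rate_pps=10, burst=5)
burst_results = [limiter.allow(now=0.0) for _ in range(8)]
```

Timestamps are passed in explicitly to keep the example deterministic; a real implementation would read a monotonic clock and would usually keep separate buckets per traffic class.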

Also, if I were a device vendor, I wouldn't allow you to filter out my backdoor triggers with control plane protection, would I? ;)

(* For everyone else: this is PURE UNFOUNDED SPECULATION *)

Also, what about ICMPv6?

Even though punted traffic gets limited by what some vendors call CoPP, it doesn't transform punted traffic into control-plane protocols.

In my mind I visualize the control plane as a layer in the network device where various processes run, and these processes handle everything destined to the router itself. Maybe routing protocols have the control-plane functionality built in, but there are still cases where they are simple data-plane traffic for intermediate devices.

After all C=Control in ICMP.

Quoting from yourself (http://wiki.nil.com/Control_and_Data_plane):

In most router implementations, the data plane receives and processes all inbound packets and selective forwards packets destined for the router (for example, routing protocol updates) or packets that need special processing (for example, IP datagrams with IP options or IP datagrams that have exceeded their TTL) to the control plane.

(disclaimer: I am by no means any expert on SDN)

If separating the control plane and the data plane, what's the best OS for the control plane and what for the data plane: Microsoft Windows, Ubuntu, Red Hat Enterprise Linux or variant, FreeBSD or variant, or NetBSD or variant? Ever heard of IX? That would make a great data plane OS, while a Linux distribution would be for the control plane. See https://www.usenix.org/system/files/conference/osdi14/osdi14-paper-belay.pdf and https://courses.cs.washington.edu/courses/cse551/15sp/papers/ix-osdi14.pdf. Should Windows be for the management plane?

Most recently-developed solutions I've seen use one of the Linux distributions for the control plane.

Never heard of IX (thanks for the links), so I cannot say whether it ever progressed beyond an interesting academic concept.

Actually, Arrakis is based on Barrelfish and IX is based on Linux. They are counterparts.