Link Aggregation with Stackable Data Center Top-of-Rack Switches

Tomas Kubica made an interesting comment on my Stackable Data Center Switches blog post: “Suppose all your servers have 4x 10G port and you bundle them to LACP NIC team [...] With this stacking link is not going to be used for your inter-server traffic if all servers have active connections to all nodes of your ToR stack.” While he’s technically correct, the idea of having four 10GE ports on each server just to cater to the whims of stackable switches is somewhat hard to sell.

However, his comment made me think about the link aggregation behavior between the ToR switches and core (or spine) switches.

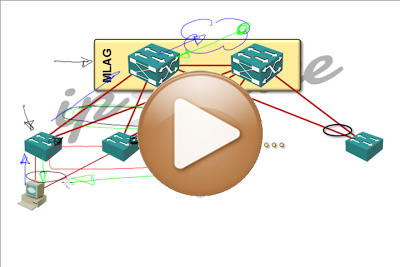

I was too tired to write another blog post, so I decided to produce a short video instead (I was experimenting with a new mic, so the audio quality is not as good as usual).

If you like the video, don’t forget to subscribe to my podcast.

And here’s the summary for the differently attentive:

- All stackable technologies that present multiple ToR switches as a single LACP/STP/routing entity have the same problem. These technologies include Juniper’s Virtual Chassis, HP’s IRF and Brocade’s VCS fabric.

- There must be a single port channel between the whole stack and the spine layer, otherwise STP blocks some of the uplinks.

- The flow of northbound traffic (toward the spine) is usually optimal.

- Southbound traffic (toward the servers) will arrive at the wrong ToR switch with a probability of (N-1)/N, where N is the number of ToR switches in the stack.

- All misdirected southbound traffic will traverse intra-stack links (see the original blog post for details).

- Worst-case scenarios include iSCSI arrays or database servers reachable through the spine layer.
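The (N-1)/N figure can be checked with a few lines of Python. This is a minimal sketch, not a model of any vendor's implementation: it assumes each stack member contributes one uplink to the port channel toward the spine, and that the spine's per-flow hash spreads southbound flows uniformly across those member links. The function names and the `attached` parameter are hypothetical. Under the same assumption, a server with links to `attached` stack members sees a misdirected share of (N - attached)/N, so a server connected to every member (Tomas's scenario) never needs the intra-stack link for southbound traffic.

```python
import random
from fractions import Fraction


def misdirected_fraction(n_switches: int, attached: int = 1) -> Fraction:
    """Expected share of southbound flows that arrive on a stack member the
    destination server is NOT attached to.

    Assumption: the spine hashes flows uniformly across one uplink per
    stack member.
    """
    if not 1 <= attached <= n_switches:
        raise ValueError("attached must be between 1 and n_switches")
    return Fraction(n_switches - attached, n_switches)


def simulate(n_switches: int, attached: int, flows: int, seed: int = 42) -> float:
    """Monte Carlo check of misdirected_fraction()."""
    rng = random.Random(seed)
    server_ports = set(range(attached))  # stack members with a link to the server
    crossed = 0
    for _ in range(flows):
        # A uniform random choice stands in for the spine's per-flow LAG hash
        # (assumption; real hashes depend on header fields and traffic mix).
        ingress = rng.randrange(n_switches)
        if ingress not in server_ports:
            crossed += 1  # this flow must traverse the intra-stack link
    return crossed / flows


if __name__ == "__main__":
    for n in (2, 3, 4):
        print(f"N={n}: single-homed {misdirected_fraction(n)}, "
              f"simulated {simulate(n, 1, 100_000):.3f}, "
              f"attached to all {misdirected_fraction(n, n)}")
```

Real traffic rarely hashes this evenly (a few elephant flows can dominate), so treat the result as an average-case estimate of how much southbound traffic lands on the intra-stack links.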

More information

You probably know all this by now, but here it is for the newcomers (welcome!):

- You’ll find numerous fabric design guidelines in the Clos Fabrics Explained webinar.

- Port densities and fabric behavior of almost all data center switches available from nine major vendors are described in the Data Center Fabrics webinar.

Both webinars are available as part of the yearly subscription, and you can always ask me for a second opinion or a design review.
