Virtual Firewall Taxonomy

Based on readers’ comments and recent discussions with fellow packet pushers, it seems the marketing departments and industry press have managed to thoroughly muddy the virtualized security waters. Trying to fix that, here’s my attempt at a virtual firewall taxonomy.

Virtual versus physical

While numerous vendors sell dedicated firewall hardware (clearly physical firewalls), most of those boxes have an x86 chipset inside. Some of them might have dedicated forwarding/filtering hardware, but the moment you start looking deeply into the packets, the only hardware offload you can do is TCP checksumming and segmentation/reassembly.
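To make the simplest of those offloads concrete, here is a minimal Python sketch of the Internet checksum (RFC 1071) that checksum offload takes off the CPU. It is an illustration, not any vendor’s code, and it omits the TCP pseudo-header (source and destination IP address, protocol, length) that a real TCP checksum also covers.

```python
def internet_checksum(data: bytes) -> int:
    """One's-complement sum of 16-bit big-endian words (RFC 1071)."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]
        total = (total & 0xFFFF) + (total >> 16)  # fold the carry back in
    return ~total & 0xFFFF


def checksum_ok(segment: bytes) -> bool:
    """A segment that already contains a valid checksum sums to 0xFFFF,
    so recomputing the checksum over it yields zero."""
    return internet_checksum(segment) == 0


print(hex(internet_checksum(bytes.fromhex("0001f203f4f5f6f7"))))  # 0x220d
```

The per-byte loop is cheap for one packet but adds up at multi-gigabit rates, which is why NICs do it in hardware.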

Then there are software-only vendors that expect you to buy an off-the-shelf server and operating system platform on which you’ll run their software.

Finally, companies like Linerate Systems have multi-tenant firewalls running on commodity x86 hardware.

All the above-mentioned products are physical firewalls because regardless of how they’re implemented, there is no other software running on the same platform. You can have endless debates about whether a firewall running on a dedicated operating system is safer than one running on top of Linux, but please keep them to yourself.

A virtual firewall, on the other hand, is software running as a hypervisor kernel module or in a VM on top of a general-purpose hypervisor. The fundamental difference between a virtual and a physical firewall is that the virtual one shares compute, networking and storage resources with other (potentially malicious) VMs.

Chris Hoff wrote a great blog post describing the security aspects of virtual and physical firewalls, so I won’t go down that particular winding path. Let’s just say that what matters most when dealing with this particular can of worms is the religious persuasion of your security auditor.

And now for the real stuff ...

Transparent or routed?

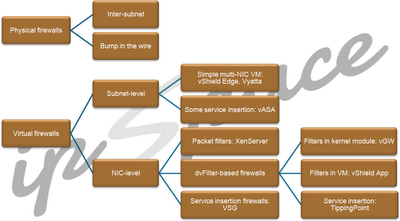

Physical firewall functionality comes in two flavors:

- Inter-subnet (inter-VLAN) firewalls that perform packet filtering and IP routing.

- Transparent (bump-in-the-wire) firewalls that perform packet filtering and layer-2 forwarding. In their simplest incarnation, they have two interfaces and send accepted traffic received on the inside interface to the outside interface and vice versa.
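The difference between the two flavors is where the forwarding decision comes from once a packet passes the filter. The Python sketch below is a toy model; the `Packet` type, the HTTPS-only `allowed()` policy, the routing table and the interface names are all hypothetical.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Dict, Optional


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str
    dport: int


def allowed(pkt: Packet) -> bool:
    # Hypothetical policy shared by both firewall flavors: only HTTPS passes.
    return pkt.dport == 443


def routed_forward(pkt: Packet, routes: Dict[str, str]) -> Optional[str]:
    """Inter-subnet firewall: filter, then choose the egress interface by
    longest-prefix match on the destination IP address (IP routing)."""
    if not allowed(pkt):
        return None
    dst = ip_address(pkt.dst)
    matches = [(net, ifc) for net, ifc in routes.items() if dst in ip_network(net)]
    if not matches:
        return None
    return max(matches, key=lambda m: ip_network(m[0]).prefixlen)[1]


def transparent_forward(pkt: Packet, in_ifc: str) -> Optional[str]:
    """Bump-in-the-wire firewall: filter, then send accepted traffic out of
    the other interface; no IP routing decision is made."""
    if not allowed(pkt):
        return None
    return {"inside": "outside", "outside": "inside"}[in_ifc]


routes = {"10.1.0.0/16": "vlan10", "10.1.2.0/24": "vlan12", "0.0.0.0/0": "outside"}
pkt = Packet("10.1.1.5", "10.1.2.7", 443)
print(routed_forward(pkt, routes), transparent_forward(pkt, "inside"))  # vlan12 outside
```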

Virtual firewalls also come in two flavors: inter-VLAN (routed) firewalls and NIC-level firewalls, which are attached directly in front of a VM NIC, filtering traffic being sent from and received by individual VMs.

I haven’t seen a bump-in-the-wire inter-VLAN virtual firewall yet, but should I stumble upon one I wouldn’t be surprised; the vendors tend to explore all the nooks in the solution space.

Inter-VLAN virtual firewalls

These firewalls typically run in a multi-vNIC VM (with every virtual NIC being assigned to a different security zone).

In the vanilla inter-VLAN virtual firewall category we have vShield Edge and Vyatta firewalls (and other products with kitschy UI in front of Linux iptables). Cisco’s vASA is a tiny bit smarter; it can do vPath service chaining in VLANs where it’s deployed together with VSG.

These firewalls can work over any virtual networking technology. Apart from vASA (which relies on vPath), they’re not aware of the technology used to implement virtual networking; all they know is that someone connected their virtual NICs to different port groups (to use the vSphere lingo).
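A minimal sketch of what such a firewall actually works with, assuming a hypothetical three-vNIC firewall VM: the policy is keyed only on the ingress and egress vNIC (and thus the security zone), which is why the firewall doesn’t care how the port groups behind those vNICs are implemented. Zone names, vNIC names and ports are made up for illustration.

```python
# Hypothetical mapping of the firewall VM's vNICs to port groups (zones).
VNIC_ZONE = {"vnic0": "outside", "vnic1": "web", "vnic2": "db"}

# Zone-pair policy: (from_zone, to_zone) -> permitted TCP destination ports.
POLICY = {
    ("outside", "web"): {80, 443},
    ("web", "db"): {3306},
}


def permit(in_vnic: str, out_vnic: str, dport: int) -> bool:
    """Return True if traffic entering on in_vnic may leave on out_vnic."""
    pair = (VNIC_ZONE[in_vnic], VNIC_ZONE[out_vnic])
    return dport in POLICY.get(pair, set())  # anything not listed is denied


print(permit("vnic0", "vnic1", 443), permit("vnic0", "vnic2", 3306))  # True False
```

Traffic between two VMs in the same port group never reaches the firewall VM in the first place, so this model has nothing to say about it.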

NIC-level firewalls

This is an interesting new category of firewalls: they sit (logically) in front of individual VMs and inspect all the VM traffic, whereas subnet-level virtual firewalls, like physical firewalls, inspect only the traffic entering and leaving a virtual network segment.
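The difference in coverage is easiest to see with two VMs on the same segment. The Python sketch below uses a hypothetical VM-to-segment map and assumes, for illustration only, that the NIC-level firewall filters both the sending and the receiving vNIC.

```python
from typing import List

# Hypothetical VM placement: VM name -> virtual network segment.
SEGMENT = {"web1": "web", "web2": "web", "db1": "db"}


def subnet_fw_inspects(src_vm: str, dst_vm: str) -> bool:
    # A subnet-level (inter-VLAN) firewall is in the path only when traffic
    # leaves one virtual segment for another.
    return SEGMENT[src_vm] != SEGMENT[dst_vm]


def nic_fw_checkpoints(src_vm: str, dst_vm: str) -> List[str]:
    # A NIC-level firewall sits in front of every vNIC, so it sees the packet
    # on egress from the sender and (assumed here) on ingress to the
    # receiver, regardless of segment membership.
    return [f"{src_vm}:egress", f"{dst_vm}:ingress"]


for pair in [("web1", "web2"), ("web1", "db1")]:
    print(pair, subnet_fw_inspects(*pair), nic_fw_checkpoints(*pair))
```

The web1-to-web2 flow is invisible to the subnet-level firewall but still passes two NIC-level checkpoints.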

The simplest product in this category is XenServer vSwitch Controller, which uses OpenFlow to download static ACLs to the Open vSwitch residing in the Xen hypervisor.
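The vSwitch Controller’s own flow format isn’t described here, so the following is only a sketch of how a static per-VM ACL could be expressed as OpenFlow entries in Open vSwitch `ovs-ofctl add-flow` syntax. `AclRule`, the OpenFlow port numbers and the priority scheme are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AclRule:
    vm_port: int             # OpenFlow port of the VM's vNIC (hypothetical)
    proto: str               # "tcp" or "udp"
    dst_port: Optional[int]  # None matches any destination port
    permit: bool


def to_ovs_flow(rule: AclRule, priority: int) -> str:
    # in_port matches traffic sent by the VM into the virtual switch.
    fields = [f"priority={priority}", rule.proto, f"in_port={rule.vm_port}"]
    if rule.dst_port is not None:
        fields.append(f"tp_dst={rule.dst_port}")
    action = "NORMAL" if rule.permit else "drop"  # NORMAL = regular switching
    return ",".join(fields) + f",actions={action}"


def compile_acl(rules: List[AclRule], base_priority: int = 1000) -> List[str]:
    # Earlier rules get higher priority, mimicking first-match ACL semantics.
    return [to_ovs_flow(r, base_priority - i) for i, r in enumerate(rules)]


for flow in compile_acl([AclRule(3, "tcp", 22, False), AclRule(3, "tcp", None, True)]):
    print(flow)
```

Each resulting string could be passed to `ovs-ofctl add-flow <bridge> "<flow>"`. A real ACL would also need a lowest-priority default rule for traffic that matches nothing else.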

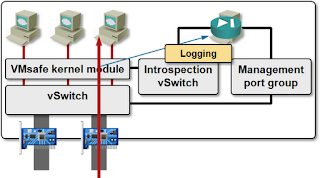

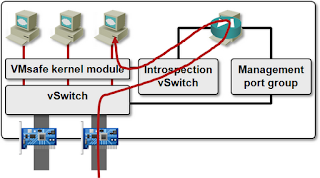

Most other NIC-level firewalls rely on VMware’s VMsafe Network Security API (lovingly known as dvFilter) – a kernel module intercepts vNIC traffic and processes it within the kernel module or within a VM running in the same vSphere host.

Juniper’s vGW is a dvFilter-based firewall that does all the filtering in the kernel module (the VM that still has to be running in every vSphere host does only configuration, management and logging tasks).

vShield App/Zones is another dvFilter-based firewall, but it does the actual filtering in the VM, so all the inspected traffic has to traverse the firewall VM, resulting in significantly reduced performance compared with vGW.
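Why filtering in the VM costs more is easy to show with a toy model. The Python class below is NOT the VMsafe/dvFilter API; it is a hypothetical per-vNIC intercept point that either evaluates the rule in place (the in-kernel approach described for vGW) or hands every packet to a firewall VM (the in-VM approach), counting the extra kernel/VM crossings.

```python
from typing import Callable, Dict

Packet = Dict[str, int]          # toy packet, e.g. {"dport": 443}
Rule = Callable[[Packet], bool]  # returns True to accept the packet


class VnicIntercept:
    """Toy per-vNIC intercept point (hypothetical, not the dvFilter API)."""

    def __init__(self, rule: Rule, filter_in_vm: bool):
        self.rule = rule
        self.filter_in_vm = filter_in_vm
        self.crossings = 0  # kernel <-> firewall VM traversals

    def process(self, pkt: Packet) -> bool:
        if self.filter_in_vm:
            # The packet is handed up to the firewall VM and comes back with
            # a verdict: two extra crossings for every inspected packet.
            self.crossings += 2
        return self.rule(pkt)  # in-kernel mode decides right here


def allow_https(pkt: Packet) -> bool:
    return pkt["dport"] == 443


in_kernel = VnicIntercept(allow_https, filter_in_vm=False)
in_vm = VnicIntercept(allow_https, filter_in_vm=True)
for dport in (443, 22, 443):
    in_kernel.process({"dport": dport})
    in_vm.process({"dport": dport})
print(in_kernel.crossings, in_vm.crossings)  # 0 6
```

Both variants reach the same verdicts; only the path (and thus the per-packet cost) differs.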

Finally, there’s HP’s TippingPoint, which uses a dvFilter-based solution to intercept the VM traffic and send it to an external TippingPoint IDS/IPS appliance.

Cisco’s VSG is slightly different – it uses a VM for traffic inspection, but doesn’t require a firewall VM to be running in each hypervisor host. VSG uses service insertion (vPath) implemented in the Nexus 1000V switches to get access to VM traffic. It can also insert shortcuts in the Nexus 1000V switches, resulting in higher performance.
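The shortcut mechanism boils down to a flow cache in the switch. The following Python sketch is hypothetical and is not the Nexus 1000V or vPath implementation: the first packet of a flow is redirected to a policy engine standing in for VSG, the verdict is cached, and later packets of the same flow are handled by the switch alone.

```python
from typing import Callable, Dict, Tuple

# (protocol, source IP, destination IP, source port, destination port)
FlowKey = Tuple[str, str, str, int, int]


class SwitchWithServiceInsertion:
    """Toy switch that consults an external policy engine once per flow."""

    def __init__(self, policy_engine: Callable[[FlowKey], bool]):
        self.policy_engine = policy_engine
        self.flow_cache: Dict[FlowKey, bool] = {}
        self.redirects = 0  # packets sent to the policy engine

    def forward(self, key: FlowKey) -> bool:
        verdict = self.flow_cache.get(key)
        if verdict is None:
            # First packet of the flow: redirect it to the policy engine ...
            self.redirects += 1
            verdict = self.policy_engine(key)
            # ... and install the verdict as a shortcut in the switch.
            self.flow_cache[key] = verdict
        return verdict


sw = SwitchWithServiceInsertion(lambda k: k[4] == 443)  # allow HTTPS only
flow = ("tcp", "10.0.0.1", "10.0.0.2", 50000, 443)
for _ in range(100):
    sw.forward(flow)
print(sw.redirects)  # 1
```

A hundred packets of one flow cost a single trip to the policy engine, which is where the performance gain comes from.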

Summary

A picture is supposedly worth more than a thousand words. Here it is:

More information

Check out my virtualization webinars (all of them are included in the yearly subscription).

This is also another meaning for virtual firewalls.

You said: "the moment you start looking deeply into the packets, the only hardware offload you can do is TCP checksumming and segmentation/reassembly"

Can you take a look at Palo Alto Networks' technologies and comment on that?

If this is a generic "that might be interesting" type of comment - thank you, will put it on my to-investigate list (but that list is long and getting longer).

So yes, I think Palo Alto is pretty interesting :). Since I know Palo Alto only from my colleagues' comments, I am also interested in what you think about them.

BTW, the guy who started the company (Nir Zuk) had previously started NetScreen (now part of Juniper); AFAIK they did the first hardware implementation of stateful packet inspection. I have worked with NetScreen boxes before and I like them, so I am probably a little bit biased.

Both Juniper IPS and many parts of Fortinet seem similar to the NetScreen of old.

That is, they say they do stateful inspection and application recognition in hardware (FPGAs), and I only wanted your opinion on that in light of your previous statement.

Put it on your list; a post about them would make great reading.

Regards!

They failed because:

- No IPv6 hardware acceleration, and IPv6 broke above 50% CPU (to be fixed in 5.0).

- Very high CPU usage with a lot of HTTP traffic: we had about 65% CPU usage for 1 Gbps of traffic.

- Support didn't work. It took them over 5 weeks to identify a problem with SYN cookies: with SYN cookies enabled, CPU usage above 50% resulted in packet drops.

This is just the list with the major issues we had.

The Palo Alto platform is definitely not wire-speed. If anybody wants all the data, please contact me.

So right now we are running a Fortinet 3950B in a PoC with 3 XH0 security engines.

Fortinet actually does a lot of IPS processing in ASICs or FPGAs.

We are seeing only 6% CPU with the same amount of traffic.

The Palo Alto GUI feels very modern compared to Fortinet's, but the engineering is not nearly as solid as Fortinet's.

Protocols each product inspects at the application level:

- vShield Edge: FTP

- vShield App: FTP, Microsoft RPC, Linux RPC, Sun RPC, Oracle TNS

- Cisco VSG: FTP, RSH, and TFTP

Shouldn't intra-tenant security have more protocol awareness than a perimeter firewall? E.g., infrastructure protocols (NFS, CIFS/SMB, DNS, LDAP, MS RPC with UUID discrimination), application protocols (HTTP, Oracle, SIP, SMTP, RTSP), and the app data (SQL, SOAP, XML). Now, this veers dangerously into WAF and IPS territory but, IMHO, what's the point of doing intra-tenant security without understanding the apps?

vShield App might have a nice GUI, but its actual security protection is no better than L4 ACLs on the free Nexus 1000V combined with the firewall built into every modern OS. If it were my security budget, I'd start with that, add a solid perimeter firewall, and then throw every remaining dollar at badass IPS and WAF vendors: SourceFire, Imperva, TippingPoint, etc.