Forwarding State Abstraction with Tunneling and Labeling

Yesterday I described how the limited flow setup rates offered by most commercially available switches force the developers of production-grade OpenFlow controllers to drop the microflow ideas and focus on state abstraction (people living in a dreamland usually go in a totally opposite direction). Before going into OpenFlow-specific details, let’s review the existing forwarding state abstraction technologies.

A Mostly Theoretical Detour

Most forwarding state abstraction solutions that I’m aware of (and I’m positive Petr Lapukhov will give me tons of useful pointers to a completely different universe) use a variant of the Forwarding Equivalence Class (FEC) concept from MPLS:

- All the traffic that expects the same forwarding behavior gets the same label;

- The intermediate nodes no longer have to inspect the individual packet/frame headers; they forward the traffic solely based on the FEC indicated by the label.

The grouping/labeling operation thus greatly reduces the forwarding state in the core nodes (you can call them P-routers, backbone bridges, or whatever other terminology you prefer) and improves the core network convergence, because the core nodes have significantly fewer forwarding entries.
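To make the state reduction concrete, here is a minimal Python sketch of the grouping step. Everything in it is an assumption made for illustration: the prefixes, the node names (`PE2`, `PE3`), the port names, and the choice of the egress node as the forwarding behavior that defines a FEC.

```python
# Hypothetical edge routing table: destination prefix -> egress node.
EDGE_ROUTES = {
    "10.1.0.0/16": "PE2",
    "10.2.0.0/16": "PE2",
    "10.3.0.0/16": "PE3",
    "10.4.0.0/16": "PE3",
    "10.5.0.0/16": "PE3",
}


def assign_labels(routes, first_label=16):
    """Give every distinct forwarding behavior (here: egress node) one label."""
    labels = {}
    for egress in sorted(set(routes.values())):
        labels[egress] = first_label + len(labels)
    return labels


def core_table(labels, next_hops):
    """Core nodes need one entry per FEC, not one entry per prefix."""
    return {label: next_hops[egress] for egress, label in labels.items()}


labels = assign_labels(EDGE_ROUTES)
core = core_table(labels, {"PE2": "port1", "PE3": "port2"})
print(len(EDGE_ROUTES), "edge prefixes ->", len(core), "core entries")
```

The edge node still knows all five prefixes, while the core table in this toy example holds only two entries, one per label.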

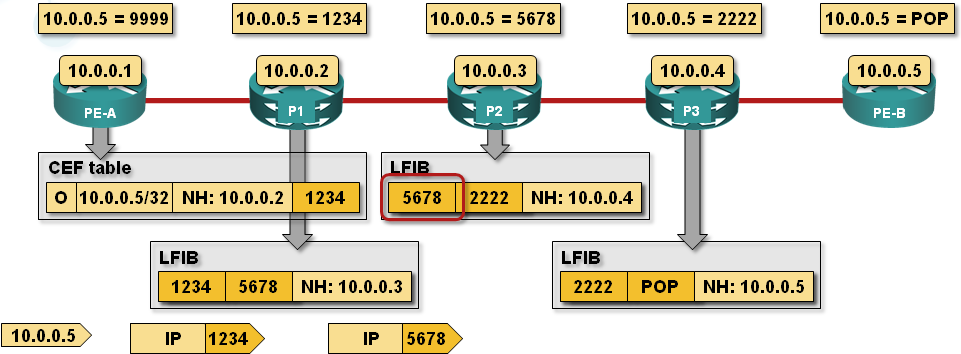

MPLS forwarding diagram from the Enterprise MPLS/VPN Deployment webinar

The core network convergence improves because of the reduced state, not because of the pre-computed alternate paths that Prefix-Independent Convergence or MPLS Fast Reroute use.

From Theory to Practice

There are two well-known techniques you can use to transport traffic grouped in a FEC across the network core: tunneling and virtual circuits (or Label Switched Paths if you want to use non-ITU terminology).

When you use tunneling, the FEC is the tunnel endpoint – all traffic going to the same tunnel egress node uses the same tunnel destination address.
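A rough Python sketch of that idea follows. It is not tied to any particular encapsulation; the `Frame` type, the MAC-to-egress mapping and the node and port names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    dst: str         # destination address the forwarding nodes look at
    payload: object  # user data, or a whole inner frame when tunneling


# Hypothetical ingress mapping: user MAC address -> tunnel egress node.
MAC_TO_EGRESS = {
    "00:00:5e:00:53:01": "egress-A",
    "00:00:5e:00:53:02": "egress-A",
    "00:00:5e:00:53:03": "egress-B",
}

# Core forwarding table: keyed by tunnel destination only.
CORE_FIB = {"egress-A": "port1", "egress-B": "port2"}


def encapsulate(frame):
    """Ingress node: wrap the user frame in an outer header addressed to the egress node."""
    return Frame(dst=MAC_TO_EGRESS[frame.dst], payload=frame)


def core_forward(outer):
    """Core node: look only at the outer destination, never at the inner frame."""
    return CORE_FIB[outer.dst]


def decapsulate(outer):
    """Egress node: strip the outer header and recover the user frame."""
    return outer.payload
```

In this sketch the core table has one entry per egress node, no matter how many user addresses sit behind each of them.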

All sorts of tunneling mechanisms have been proposed to scale layer-2 broadcast domains and virtualized networks (IP-based layer-3 networks scale way better by design):

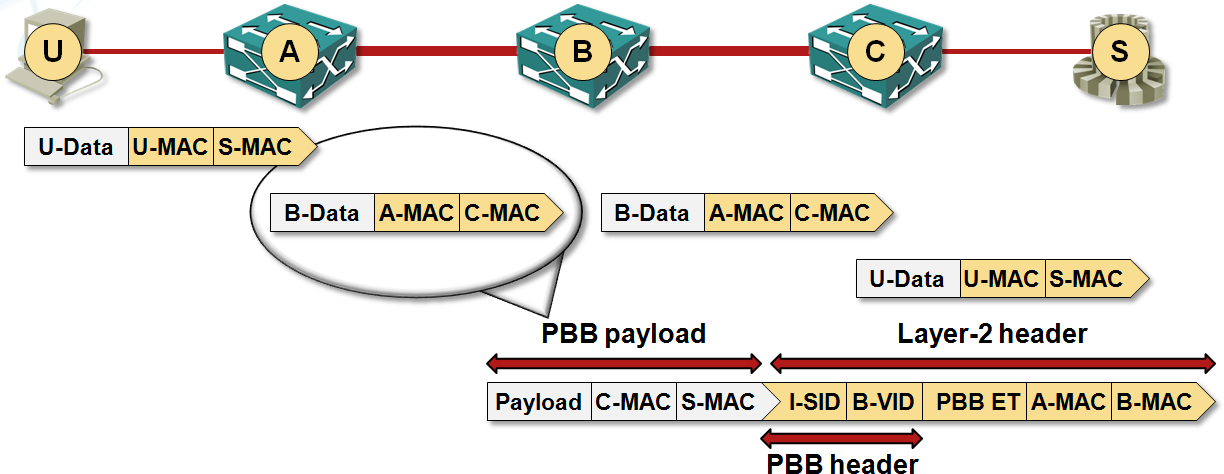

- Provider Backbone Bridges (PBB – 802.1ah), Shortest Path Bridging-MAC (SPBM – 802.1aq) and vCDNI use MAC-in-MAC tunneling – the destination MAC address used to forward user traffic across the network core is the egress bridge or the destination physical server (for vCDNI).

SPBM forwarding diagram from the Data Center 3.0 for Networking Engineers webinar

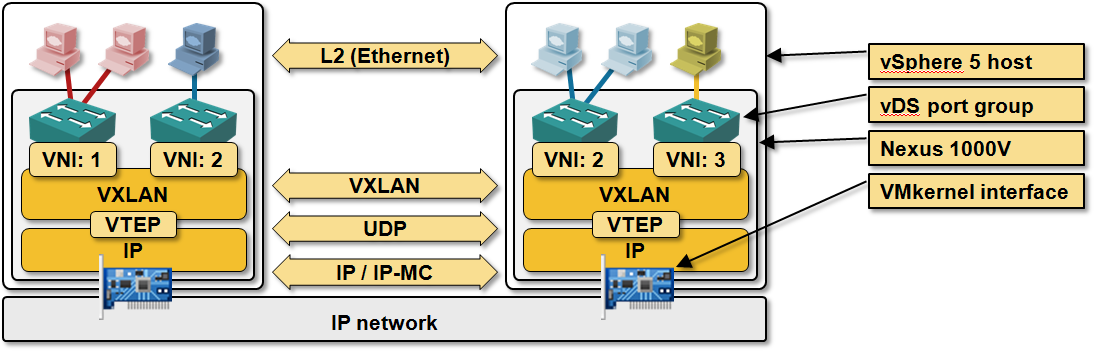

- VXLAN, NVGRE and GRE (used by Open vSwitch) use MAC-over-IP tunneling, which scales way better than MAC-over-MAC tunneling because the core switches can do another layer of state abstraction (subnet-based forwarding and IP prefix aggregation).

Typical VXLAN architecture - from the Introduction to Virtual Networking webinar

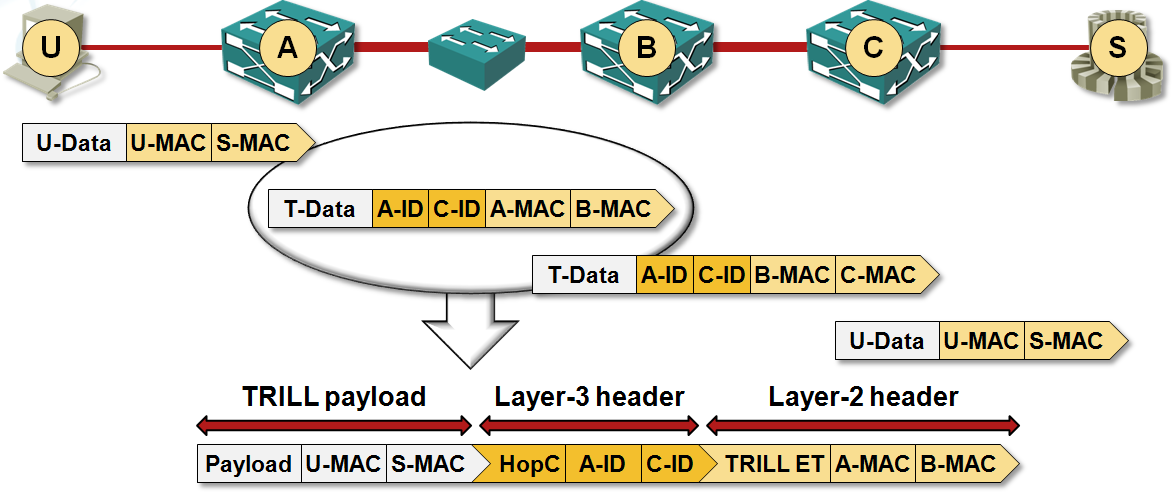

- TRILL is closer to VXLAN/NVGRE than to SPB/vCDNI as it uses full L3 tunneling between TRILL endpoints with L3 forwarding inside RBridges and L2 forwarding between RBridges.

TRILL forwarding diagram from the Data Center 3.0 for Networking Engineers webinar
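The extra layer of state abstraction that MAC-over-IP tunneling gets from IP can be sketched with Python's standard `ipaddress` module. The tunnel endpoint addresses, subnets and port names below are made up for illustration, and the linear longest-prefix match is a simplification rather than how a real switch does it.

```python
import ipaddress

# Hypothetical tunnel endpoint addresses (for example hypervisors) in two racks.
ENDPOINTS = ["10.0.2.11", "10.0.2.12", "10.0.2.13", "10.0.3.21", "10.0.3.22"]

# A core switch needs one route per subnet, not one entry per endpoint.
RACK_SUBNETS = {
    ipaddress.ip_network("10.0.2.0/24"): "port1",
    ipaddress.ip_network("10.0.3.0/24"): "port2",
}


def lookup(routes, address):
    """Longest-prefix match over a small route table (linear scan for clarity)."""
    addr = ipaddress.ip_address(address)
    matches = [net for net in routes if addr in net]
    if not matches:
        return None
    return routes[max(matches, key=lambda net: net.prefixlen)]


# Further away from the racks, adjacent subnets can be aggregated again.
aggregate = list(ipaddress.collapse_addresses(RACK_SUBNETS))
print(len(ENDPOINTS), "endpoints ->", len(RACK_SUBNETS), "subnets ->", aggregate)
```

With MAC-in-MAC tunneling every core bridge would carry one entry per endpoint address (five in this example), because MAC addresses cannot be summarized.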

With tagging or labeling, a short tag is attached in front of the data (ATM VPI/VCI, MPLS label stack on point-to-point links) or somewhere in the header (VLAN tags) instead of encapsulating the user’s data in a full L2/L3 header. The core network devices perform packet/frame forwarding based exclusively on the tags. That’s how SPBV, MPLS and ATM work.
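As an illustration of tag-only forwarding, the sketch below packs a 32-bit MPLS label stack entry (20-bit label, 3-bit traffic class, 1-bit bottom-of-stack flag, 8-bit TTL) and performs a label swap. The label values, port names and the `LFIB` table are hypothetical, and error handling such as TTL expiry is left out.

```python
import struct


def pack_entry(label, tc=0, bottom=True, ttl=64):
    """Pack one MPLS label stack entry: label(20) | TC(3) | S(1) | TTL(8)."""
    word = (label << 12) | (tc << 9) | (int(bottom) << 8) | ttl
    return struct.pack("!I", word)


def unpack_entry(data):
    """Return (label, tc, bottom, ttl) from the first four bytes of data."""
    (word,) = struct.unpack("!I", data[:4])
    return word >> 12, (word >> 9) & 0x7, bool((word >> 8) & 0x1), word & 0xFF


# Hypothetical label forwarding table of one core node:
# incoming label -> (outgoing label, outgoing port).
LFIB = {100: (200, "port2"), 101: (201, "port3")}


def swap(packet):
    """Core node: forward on the top label alone, leaving the payload untouched."""
    label, tc, bottom, ttl = unpack_entry(packet)
    out_label, port = LFIB[label]
    return port, pack_entry(out_label, tc, bottom, ttl - 1) + packet[4:]
```

The `swap` function never looks past the first four bytes, which is the whole point: the core node needs no knowledge of the addresses inside the packet.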

MPLS-over-Ethernet frame format from the Enterprise MPLS/VPN Deployment webinar

MPLS-over-Ethernet, commonly used in today’s high-speed networks, is an abomination as it uses both L2 tunneling between adjacent LSRs and labeling ... but that’s what you get when you have to reuse existing hardware to support new technologies.
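The double encapsulation is easy to see when you build such a frame by hand. The sketch below assumes a single-entry label stack and untagged Ethernet; the MAC addresses and the function name are hypothetical, while `0x8847` is the EtherType for MPLS unicast.

```python
import struct

MPLS_UNICAST_ETHERTYPE = 0x8847


def mac_bytes(mac):
    """Convert 'aa:bb:cc:dd:ee:ff' into six raw bytes."""
    return bytes.fromhex(mac.replace(":", ""))


def mpls_over_ethernet(next_hop_mac, own_mac, label, payload, ttl=64):
    """Build an Ethernet frame carrying one MPLS label stack entry and the payload."""
    # L2 "tunnel" header: valid only on the link to the adjacent LSR.
    ethernet = (
        mac_bytes(next_hop_mac)
        + mac_bytes(own_mac)
        + struct.pack("!H", MPLS_UNICAST_ETHERTYPE)
    )
    # Label stack entry: label(20) | TC(3)=0 | S(1)=1 | TTL(8).
    entry = struct.pack("!I", (label << 12) | (1 << 8) | ttl)
    return ethernet + entry + payload
```

Every LSR along the path has to rewrite both parts: the Ethernet addresses for the next link and the label for the next hop.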

Next: Virtual Circuits in OpenFlow 1.0 World

Revision History

- 2022-02-16: Cleaned up the blog post and added a note listing the obsolete technologies.
