Category: WAN

Coping with long-distance vMotion requests

During the last Data Center webinar I got an interesting question when describing the inherent problems of long-distance vMotion: “OK, I understand all the implications, but how do I persuade my server admins?”

The best answer I’ve heard so far came from an old battle-hardened networking guru: “Well, let them try”.

Data Center Interconnect (DCI) encryption

Brad sent me an interesting DCI encryption question a while ago. Our discussion started with:

We have a pair of 10GbE links between our data centers. We talked to a hardware encryption vendor who told us our L3 EIGRP DCI could not be used and we would have to convert it to a pure Layer 2 link. This doesn't make sense to me as our hand-off into the carrier network is 10GbE; couldn't we just insert the Ethernet encryptor as a "transparent" device connected to our routed port ?

The whole thing obviously started as a layering confusion. Brad is routing traffic between his data centers (the long-distance vMotion demon hasn’t visited his server admins yet), so he’s talking about L3 DCI.

The encryptor vendor has a different perspective and sent him the following requirements:

BFD Has Reached RFC Status

Bidirectional Forwarding Detection (BFD) protocol has finally been published as a series of RFCs. BFD gives you quick failure detection between L3 hops (routers) regardless of the underlying technology and equipment (modems, media converters, bridges). It’s been gradually introduced in Cisco IOS during the last few years; release 15.0M and 12.2SRE contain almost everything you’ll ever need (missing: multihop BGP support and MPLS LSP support).

I wrote about BFD in Improve the Convergence of Mission-Critical Networks with Bidirectional Forwarding Detection (BFD) article (you’ll find it somewhere in this list). To learn more, read the RFCs in this order:

Traffic grooming in optical networks

SearchTelecom has just published my new article: Traffic grooming in optical networks: Making real-world choices. If you’re new to the optical networking (or if you’ve been bedazzled by vendors’ marketing departments), you’ll probably find it a useful introductory text.

SearchTelecom has just published my new article: Traffic grooming in optical networks: Making real-world choices. If you’re new to the optical networking (or if you’ve been bedazzled by vendors’ marketing departments), you’ll probably find it a useful introductory text.

As the production-grade all-optical traffic grooming solutions don’t exist yet, the only short answer anyone (apart from people trying to sell you specific gear) can give you on this topic is “it depends”. You have to evaluate your needs, do several alternative designs, and find the design that best fits your needs, your budget and your long-term cost expectations.

L2TP: The revenge of hardware switching

Do you like the solutions to the L2TP default routing problem? If you do, the ASR 1000 definitely doesn’t share your opinion; so far it’s impossible to configure a working combination of L2TP, IPSec (described in the original post) and PBR or VRFs:

PBR on virtual templates: doesn’t work.

Virtual template interface in a VRF: IPSec termination in a VRF doesn’t work.

L2TP interface in a VRF: This one was closest to working. In some software releases IPSec started, but the L2TP code was not (fully?) VRF-aware, so the LNS-to-LAC packets used global routing table. In other software releases IPSec would not start.

L2TP default routing: solutions

There are three tools that can (according to a CCIE friend of mine) solve any networking-related problems: GRE tunnels, PBR and VRFs. The solutions to the L2TP default routing challenge nicely prove this hypothesis; most of them use at least one of those tools.

Policy-based routing on virtual template interface. Use the default route toward the Internet and configure PBR with set default next-hop on the virtual template interface. The PBR is inherited by all virtual access interfaces, ensuring that the traffic from remote sites always passes the network core (and the firewall, if needed).

Small Steps to Large Complexity

Imagine you have a large retail network: your remote offices use ISDN to dial into the central site and upload/download whatever periodic reports they have. Having a core router connected to an ISDN PRI interface is the perfect solution:

A few years later, ISDN is becoming too slow for your increased traffic needs and you want to replace it with DSL or VPN-over-Internet solution. Your Service Provider offers you PPPoE forwarding with L2TP. This is a perfect solution as it allows you to minimize the changes:

IP over DWDM

A while ago I was pushed (actually it was a kind nudge) into another interesting technology area: optical networks, with emphasis on IP over DWDM. I realized almost immediately that I’d encountered another huge can of worms. A few issues you can stumble across when trying to plug routers or switches directly into DWDM networks are documented in my IP over dense wave division multiplexing (DWDM): Metro and core issues article recently published by SearchTelecom.com.

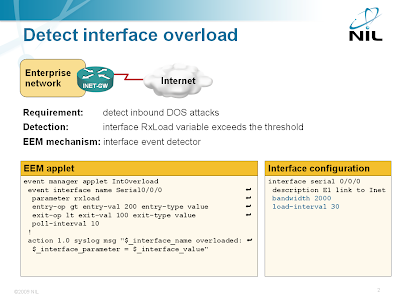

Detect DoS Attacks with EEM

Someone sent me an interesting question a while ago: “is it possible to detect DOS flooding with an EEM applet?” Of course it is (assuming the DOS attack results in very high load on the Internet-facing interface) and the best option is the EEM interface event detector.

Detecting interface overload with EEM

The interface event detector is more user-friendly than the SNMP event detector. You can specify interface name and parameter name in the interface event detector; with SNMP event detector you have to specify SNMP object identifier (OID). The interface event detector stores the interface name, measured parameter name and its value in three convenient environment variables that you can use to generate syslog messages or alert the operators via e-mail.

What went wrong: end-to-end ATM

Red Pineapple was kind enough to share his 15-year-old ATM slides. They include interesting claims like:

ATM has the potential to displace all existing internetworking technologies

One single network handles all traffic types: Bursty data and Time-sensitive continuous traffic (voice/video).

All these claims are still true if you just replace »ATM« with »IP«. So what went wrong with ATM (and why did the underdog IP win)? I can see the following major issues:

- ATM is a layer-2 technology that wanted to replace all other layer-2 technologies. Sometimes it made sense (ADSL), sometimes not so much (LAN … not to mention LANE). IP is a layer-3 technology that embraced all layer-2 technologies and unified them into a single network.

- ATM is an end-to-end circuit-oriented technology, which made perfect sense in a world where a single session (voice call, terminal session to mainframes) lasted for minutes or hours and therefore the cost of session setup became negligible. In a Web 2.0 world where each host opens tens of sessions per minute to servers all across the globe, the session setup costs would be prohibitive.

- Because of its circuit-oriented nature, ATM causes per-session overhead in each node in the network. Core IP routers don’t have to keep the session state as they forward individual IP datagrams independently. IP is thus inherently more scalable than ATM.

The shift that really made ATM obsolete was the changing data networking landscape: voice and long-lived low-bandwidth data sessions which dominated the world at the time when ATM was designed were dwarfed by the short-lived bursty high-bandwidth web requests. ATM was (in the end) a perfect solution to the wrong problem.