Public Videos: OpenFlow Deep Dive

Remember OpenFlow, the One Protocol to Bind Them All? I haven’t heard anyone even mention it in ages, and I never bothered to ask whether anyone is still using it after the dismal results of the 2022 poll.

Anyway, if you still have to deal with that ancient blunder, six hours of deep dive videos I recorded a decade ago might still be useful. You can watch them without an ipSpace.net account.

Looking for more binge-watching materials? You’ll find them here.

Dual-Stack SR-MPLS

After the introduction to SR-MPLS demo I did during the Segment Routing workshop @ ITNOG10, we moved to dual-stack SR-MPLS – can we assign node segment identifiers (SIDs) to IPv4 and IPv6 prefixes? The demo used the same three-router network as the previous one, with IPv4 SIDs starting at one and IPv6 SIDs starting at 101:
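As a rough illustration of that numbering scheme: a node SID index becomes an MPLS label once you add it to the base of the Segment Routing Global Block (SRGB). The sketch below assumes an SRGB base of 16000 (a common default, but not something stated above), hypothetical router names `r1` to `r3`, and SID indexes that simply increment per router.

```python
# Sketch only: SRGB_BASE and the router names are assumptions, not lab values.
SRGB_BASE = 16000


def node_sids(router_count, ipv4_start=1, ipv6_start=101):
    """Return per-router IPv4/IPv6 node SID indexes and the resulting labels."""
    table = {}
    for offset in range(router_count):
        name = f"r{offset + 1}"  # hypothetical router name
        v4_index = ipv4_start + offset
        v6_index = ipv6_start + offset
        table[name] = {
            # MPLS label = SRGB base + node SID index
            "ipv4": {"index": v4_index, "label": SRGB_BASE + v4_index},
            "ipv6": {"index": v6_index, "label": SRGB_BASE + v6_index},
        }
    return table


if __name__ == "__main__":
    for router, sids in node_sids(3).items():
        print(router, sids)
```

Keeping the IPv4 and IPv6 indexes in separate ranges means each router gets two distinct labels from the same SRGB, one per address family.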

Worth Reading: Agentic AI Setup: Sandboxes and Worktrees

Most of the hyperventilated AI “success stories” are as useful as the “ANSIBLE!!!” movement was a few years ago. It’s thus always a pleasure to find someone with well-established software development chops who took the time to describe what works for them.

One cannot argue with Mike McQuaid’s credentials (at least if you happen to be using Homebrew on macOS, which you REALLY SHOULD), and his Sandboxes and Worktrees: My secure Agentic AI Setup in 2026 article is full of relevant recommendations in case you’re brave enough to let AI agents loose on your GitHub repository.

netlab 26.05: BGP-free SRv6 Core, Junos Features

netlab release 26.05 is out. Here are the highlights:

- Support for global BGP routes with SRv6 next hops (inspired by a proof-of-concept by @jvbemmel) on FRR and IOS XR

- Junos OSPF/IS-IS route redistribution, VRF IS-IS instances, and OSPF interface parameters

- Streamlined and faster FortiOS initial device configuration by @a-v-popov

- Support for Juniper cSRX container by @leec-666

Goodbye, Ubuntu 20.04 (netlab 26.05)

netlab release 26.05 is out. I’ll write about its highlights tomorrow; today, I want to focus on one of its breaking changes: netlab no longer works with Python 3.8 (which reached end-of-life in October 2024), so you can no longer install it on a vanilla Ubuntu 20.04 (which reached end of standard support a year ago).

We wanted to get rid of old Python versions for ages, but never did because Ubuntu 20.04 shipped with Python 3.8, and many netlab early adopters installed it on Ubuntu 20.04 (and the last thing a networking engineer wants is wasting time with upgrades, right?).
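If you want a quick sanity check before upgrading, something like the following would tell you whether the Python interpreter on a box is too old. It's a minimal sketch: the only fact it relies on is that Python 3.8 no longer works; the function name is made up, and the actual minimum version netlab requires might be higher than 3.9.

```python
import sys

# Newest Python release known (from the text above) to be unsupported.
LAST_UNSUPPORTED = (3, 8)


def python_is_too_old(version=sys.version_info):
    """Return True if the (major, minor) version is 3.8 or older."""
    return tuple(version[:2]) <= LAST_UNSUPPORTED


if __name__ == "__main__":
    if python_is_too_old():
        print("Python 3.8 or older: upgrade Python (or the OS) first")
    else:
        print("Python is newer than 3.8")
```

Run it with the same `python3` you'd use for the installation; a vanilla Ubuntu 20.04 will take the first branch.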

Lab: EVPN Asymmetric IRB with Anycast Gateways

I postponed the discussion of ARP issues with EVPN anycast gateways to keep yesterday’s blog post reasonably short. If you’re impatient and want to try that out, I have just the right lab exercise for you; you’ll have to extend VLANs into end-to-end MAC-VRF instances and add IRB and anycast gateways:

You can run the lab on your own netlab-enabled infrastructure (more details), but also within a free GitHub Codespace or even on your Apple-silicon Mac (installation, using Arista cEOS container, using VXLAN/EVPN labs).

ARP with EVPN Asymmetric IRB

TL&DR: With the right nerd knob settings, it all works

In a previous blog post, I described the ARP issues you’ll encounter when using centralized routing (on a spine switch) between two EVPN MAC-VRF instances (a fancy name for a VLAN encapsulated in VXLAN or MPLS).

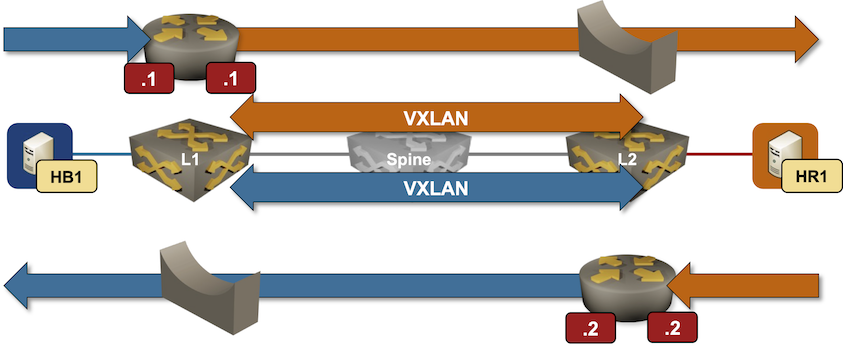

That blog post established a baseline that will help us unravel the ARP behavior in a more realistic scenario: asymmetric Integrated Routing and Bridging (IRB). That’s a mouthful, but it’s really quite a simple concept; the following diagram explains the asymmetric forwarding behavior:

Packet forwarding in an EVPN asymmetric IRB design
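The same idea expressed as a toy model: in asymmetric IRB, the ingress switch routes the packet into the destination VLAN and sends it across the fabric in that VLAN's VNI, while the egress switch only bridges. The host names, leaf names, VLAN names, and VNI values below are all hypothetical; this is a sketch of the forwarding decisions, not of any device's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    name: str
    vlan: str
    leaf: str


# Hypothetical VLAN-to-VNI mapping
VNI = {"red": 10100, "blue": 10200}


def forward(src, dst):
    """List the forwarding steps for a packet sent from src to dst."""
    steps = []
    if src.vlan != dst.vlan:
        # Routing always happens on the ingress leaf
        steps.append(f"{src.leaf}: route {src.vlan} -> {dst.vlan}")
    if src.leaf != dst.leaf:
        # The packet crosses the fabric in the destination VLAN's VNI
        steps.append(f"{src.leaf}: VXLAN-encapsulate with VNI {VNI[dst.vlan]}")
    # The egress leaf (or the same leaf) only bridges to the destination
    steps.append(f"{dst.leaf}: bridge into {dst.vlan}")
    return steps


if __name__ == "__main__":
    h1 = Host("h1", "red", "leaf1")
    h2 = Host("h2", "blue", "leaf2")
    print(forward(h1, h2))
    print(forward(h2, h1))
```

The two directions use different VNIs (the destination VLAN's in each case), which is where the "asymmetric" in the name comes from, and also why every participating leaf needs both VLANs configured.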

Hands-On Introduction to SR-MPLS

The second demo I did during the Segment Routing workshop @ ITNOG10 illustrated how easy it is to set up and explore a small SR-MPLS network with netlab. The lab topology described a small three-router network (you need three routers to see “true” labels besides the penultimate-hop popping ones):

MUST READ: The Future of Everything is Lies, I Guess

Kyle Kingsbury published a long (10-part) article about his frustrations with AI, aptly named The Future of Everything is Lies, I Guess.

Regardless of where you are on the skeptic-to-fanboy spectrum, I would highly recommend you read it, even if you believe you’ll disagree with everything he wrote.

Reorganized ipSpace.net Segment Routing Resources

I created nine sample SR-MPLS topologies for the ITNOG 10 SR-MPLS workshop, and of course, we ran out of time. I plan to cover those topologies and resulting printouts in a series of blog posts; to prepare for those, I cleaned up and reorganized the Segment Routing blog category, which is now split into two:

Hope you’ll find them useful! Also, if you know of other non-vendor Segment Routing resources, please leave a comment, email me, or submit a pull request.