AWS Networking 101

There was an obvious invisible elephant in the virtual Cloud Field Day 7 (CFD7v) event I attended in late April 2020. Most everyone was talking about AWS, how their stuff runs on AWS, how it integrates with AWS, or how it will help others leapfrog AWS (yeah, sure…).

Although you REALLY SHOULD watch my AWS Networking webinar (or something equivalent) to understand what problems vendors like VMware or Pensando are facing or solving, I’m pretty sure a lot of people think they can get away with the CliffsNotes version of it, so here they are ;)

High-Level Perspective

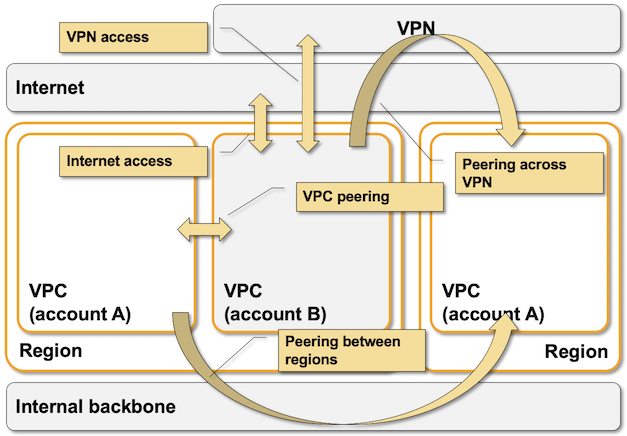

- AWS virtual networking is implemented with isolated Virtual Private Clouds (VPCs) that can be connected to the outside world through an Internet gateway, VPC-to-VPC peering, VPN gateways, Transit Gateways, or Direct Connect (leased lines) gateways.

- A VPC is limited to a single AWS region.

- Every VPC has one or more subnets. Each subnet is limited to a single AWS availability zone.

Some AWS VPC external connectivity options

VPC Packet Forwarding Overview

Even though AWS networking is just networking (as my friend Nicola Arnoldi wrote a few days ago), it’s different enough from what you’d expect to make you feel like Alice in Wonderland.

A few speculations first:

- AWS is using overlay virtual networking. We don’t know what encapsulation protocol (GRE, VXLAN, …) or what control plane they use… but we can be pretty sure it’s not a centralized control plane or EVPN because those wouldn’t scale to AWS size.

- We know they do VPC packet processing (checking, forwarding…) in ingress and egress hypervisors (source: AWS re:Invent video).

Now for a few hard facts (if you don’t trust me go and test them yourself):

- AWS VPC supports unicast IPv4 and IPv6 packet forwarding.

- IPv4 multicast is supported on Transit Gateway (I’m guessing they’re using transit gateway as a head-end replicator).

- VM IP and MAC addresses are controlled by the orchestration system.

- The MAC address is passed to a VM as a (virtual) hardware parameter.

- DHCP is used to pass IPv4 and IPv6 addresses configured in the orchestration system to the VMs.

- Packets with destinations within the VPC address range are delivered directly from the ingress vNIC (virtual Network Interface Card) to the egress vNIC based on the IP addresses configured in the orchestration system.

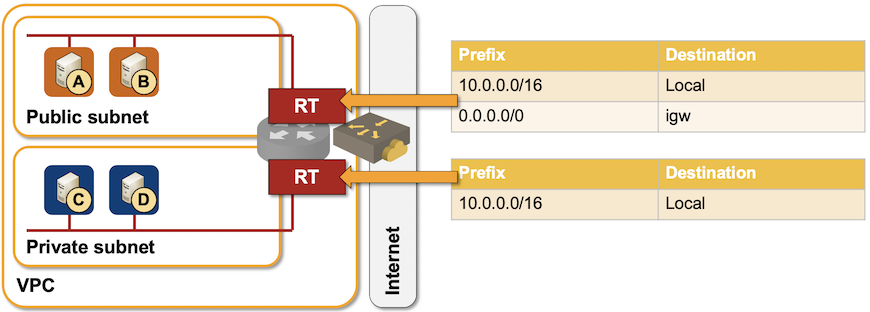

- Route tables are used to influence traffic forwarding toward external destinations. Next hops in route tables are AWS objects (VM instance, NIC, Internet gateway, VPN gateway, VPC peering…), not IP addresses.

- Even though the whole VPC behaves like a single forwarding domain (VRF), each subnet could have a different route table.

- A separate route table can be used for ingress VPC traffic to implement service insertion… but it only applies to traffic entering a VPC through an Internet gateway or a VPN gateway.

- Route tables cannot contain prefixes within the VPC address range; the only exception is the ingress route table attached to a gateway.

- Route tables can be populated through the orchestration system or via external (VPN or Direct Connect) BGP sessions.

Sample AWS VPC route table scenario
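
The forwarding rules listed above can be condensed into a toy model. The following Python sketch does not claim to describe how AWS implements anything; it merely encodes the observed behavior. The `Vpc` class, the `forward` function, and all object IDs (`eni-web`, `igw-example`…) are made up for illustration, and only IPv4 is modeled.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class Vpc:
    cidr: ipaddress.IPv4Network
    # IP address -> vNIC ID, as configured in the orchestration system
    vnics: dict = field(default_factory=dict)
    # subnet ID -> list of (prefix, next-hop object ID) routes
    route_tables: dict = field(default_factory=dict)


def forward(vpc, src_subnet, dst_ip):
    """Return a (decision, target) tuple for a packet leaving src_subnet."""
    dst = ipaddress.ip_address(dst_ip)
    if dst in vpc.cidr:
        # Intra-VPC destination: subnet route tables are not consulted,
        # the packet goes straight to the vNIC owning the address.
        vnic = vpc.vnics.get(dst)
        return ("deliver", vnic) if vnic else ("drop", None)
    # External destination: longest-prefix match in the subnet's route table.
    matches = [(p, nh) for p, nh in vpc.route_tables.get(src_subnet, []) if dst in p]
    if not matches:
        return ("drop", None)
    _, next_hop = max(matches, key=lambda m: m[0].prefixlen)
    # The next hop is an object ID (gateway, NIC...), not an IP address.
    return ("route", next_hop)


net = ipaddress.ip_network
vpc = Vpc(
    cidr=net("10.0.0.0/16"),
    vnics={
        ipaddress.ip_address("10.0.1.10"): "eni-web",
        ipaddress.ip_address("10.0.2.20"): "eni-db",
    },
    route_tables={
        "subnet-public": [(net("0.0.0.0/0"), "igw-example"),
                          (net("192.168.0.0/16"), "vgw-example")],
        "subnet-private": [(net("192.168.0.0/16"), "vgw-example")],
    },
)

print(forward(vpc, "subnet-public", "10.0.2.20"))    # intra-VPC delivery
print(forward(vpc, "subnet-public", "192.168.5.5"))  # more specific route wins
print(forward(vpc, "subnet-private", "8.8.8.8"))     # no matching route
```

Note how the two subnets share the intra-VPC behavior but differ in what happens to external traffic, which is the "single VRF, per-subnet route table" quirk described above.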

Consequences:

- There’s no way you can influence intra-VPC packet delivery (apart from a gotcha explained below).

- Changing an IP or a MAC address in a VM usually results in a disconnected VM.

- First-hop redundancy protocols like HSRP or VRRP don’t work. Changing the MAC address of a VM will just disconnect it.

- The only way to pass an IP address from one VM to another is through an orchestration system call. The usual GARP tricks don’t work.

- Taking over an IP address of a failed instance doesn’t change packet forwarding behavior. To do a proper HA failover you have to either change the route table (through the orchestration system) or move an elastic NIC (the next hop) to another VM instance.

- You cannot run a routing protocol between your instance and the AWS VPC router. You could either use the orchestration system to modify the subnet route table(s) based on VM instance route tables, or run BGP with a VPN gateway. Cisco used that approach with CSR1000v to implement hub-and-spoke VPC peering (and AWS took away that bonanza with Transit Gateway), and VMware still has to do that to connect multiple VMware-on-AWS instances together.
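
To make the "change the route table through the orchestration system" failover option a bit more tangible: the EC2 ReplaceRoute API call (exposed as `replace_route` in the boto3 Python SDK) repoints an existing route at a different next hop. What follows is a minimal sketch, not a production-grade failover script. The `fail_over_route` helper, the `RecordingClient` stand-in, and all IDs are hypothetical; against a real account you would pass `boto3.client("ec2")` instead of the stand-in.

```python
def fail_over_route(ec2, route_table_ids, prefix, standby_eni_id):
    """Point `prefix` at the standby appliance's network interface
    in every route table that used to point at the failed one."""
    for route_table_id in route_table_ids:
        ec2.replace_route(
            RouteTableId=route_table_id,
            DestinationCidrBlock=prefix,
            NetworkInterfaceId=standby_eni_id,
        )


class RecordingClient:
    """Stand-in for an EC2 client; it only records the calls it receives."""

    def __init__(self):
        self.calls = []

    def replace_route(self, **kwargs):
        self.calls.append(kwargs)


# Dry run: the default route in two subnet route tables moves to the standby NIC.
client = RecordingClient()
fail_over_route(client, ["rtb-example-a", "rtb-example-b"], "0.0.0.0/0", "eni-standby")
for call in client.calls:
    print(call)
```

Whatever detects the failure (a health-check script, a clustering daemon inside the appliance…) is outside the scope of this sketch; the point is that the failover itself is an API call, not a GARP.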

It’s Not That Simple

Even though the “common wisdom” is that AWS uses a VPC router for packet forwarding, it really uses a mix of L2 and L3 forwarding:

- Within a subnet, packet forwarding is based on the destination MAC address, but it is still unicast routing, not transparent bridging. Layer-2 tricks like BUM flooding do not work.

- Across subnets, packet forwarding is based on the destination IP address.

Consequences:

- ARP caches contain meaningful MAC addresses (unlike Azure).

- You can use static routes with intra-subnet next hops within VMs to change the VM egress traffic flow… but they have to be configured inside the VM and only work if the next hop is in the same subnet.

- The moment you want to send the traffic to another subnet, that trick stops working: the AWS VPC router takes over, and the traffic is delivered directly to the destination vNIC.

- You could use a routing protocol between VMs in the same subnet, and use routes derived from the routing protocol for packet forwarding across the shared subnet… as long as the routing protocol uses only unicast IP traffic. BGP FTW ;)
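
The L2/L3 mix described above can be illustrated with another toy model. Everything in it is an assumption made for illustration: the `Vnic` class, the `deliver` function, the `ROUTER_MAC` value, and the rule that a MAC address from another subnet is simply unreachable. ARP handling is left out entirely.

```python
from dataclasses import dataclass

ROUTER_MAC = "02:00:00:00:00:01"  # hypothetical MAC address of the VPC router


@dataclass(frozen=True)
class Vnic:
    name: str
    mac: str
    ip: str
    subnet: str


def deliver(vnics, src, dst_mac, dst_ip):
    """Return the name of the vNIC receiving the packet, or None if it is dropped."""
    # Group bit set (broadcast/multicast MAC): nothing is flooded in this model.
    if int(dst_mac.split(":")[0], 16) & 1:
        return None
    if dst_mac == ROUTER_MAC:
        # Sent to the VPC router: the destination IP address decides.
        for vnic in vnics:
            if vnic.ip == dst_ip:
                return vnic.name
        return None
    # Sent to a unicast MAC in the sender's subnet: the MAC address decides,
    # regardless of the destination IP address in the packet.
    for vnic in vnics:
        if vnic.mac == dst_mac and vnic.subnet == src.subnet:
            return vnic.name
    return None


a = Vnic("vm-a", "02:aa:00:00:00:0a", "10.0.1.10", "subnet-1")
fw = Vnic("vm-fw", "02:aa:00:00:00:0f", "10.0.1.15", "subnet-1")
b = Vnic("vm-b", "02:aa:00:00:00:0b", "10.0.2.20", "subnet-2")
vnics = [a, fw, b]

# Static route in vm-a pointing to vm-fw (same subnet): traffic lands on vm-fw.
print(deliver(vnics, a, fw.mac, "10.0.2.20"))
# Same destination sent to the VPC router: delivered directly to vm-b.
print(deliver(vnics, a, ROUTER_MAC, "10.0.2.20"))
# Broadcast frame: not flooded.
print(deliver(vnics, a, "ff:ff:ff:ff:ff:ff", "10.0.1.255"))
```

The first two calls carry the same destination IP address but end up on different vNICs, which is exactly why the intra-subnet static route trick works and why it stops working once the next hop sits in another subnet.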

Need more details? I already told you where to find them, and we covered numerous BYOA (Bring Your Own Appliance) designs in the Networking in Public Cloud Deployments online course.

The Azure network design and virtual switch are fairly well documented in papers [1] and [2], and in [4] (Azure Stack) with regard to the Network Controller. VFP is also available in modern Windows systems such as Windows 10 [3].

[1] VFP: A Virtual Switch Platform for Host SDN in the Public Cloud

[2] VL2: A Scalable and Flexible Data Center Network

[3] VFP Filter Driver, c:\windows\system32\vfpctrl.exe

[4] Explore Azure Stack HCI - Introduction, Management and Networking