The Intricacies of Optimal Layer-3 Forwarding

I must have confused a few readers with my blog posts describing Arista’s VARP and Enterasys’ Fabric Routing – I got plenty of questions along the lines of “how does it really work behind the scenes?” Let’s shed some light on those dirty details.

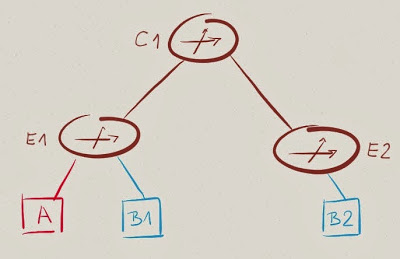

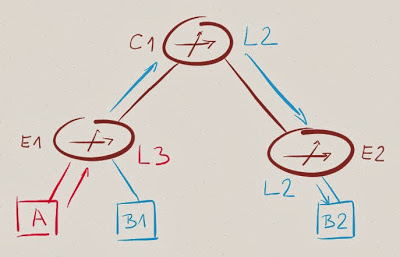

Sample Network

We’ll work with a small network having two edge switches (E1 and E2), a single core switch (C1) and hosts (A, B1 and B2) in two subnets (A and B):

Let’s assume we’ve configured some sort of optimal layer-3 forwarding in the network, so E1 and E2 do layer-3 forwarding (the activity formerly known as routing) between subnets A and B.

When A sends traffic to B1 or B2, E1 forwards the traffic into subnet B; when B2 sends traffic to A, E2 forwards the traffic into subnet A.

Who Does What?

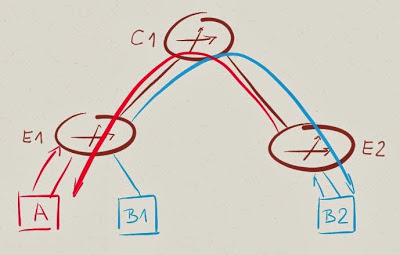

The real question we should be asking is: which switch (along the path E1-C1-E2, for example) does L2 forwarding (destination MAC address lookup) and which one does L3 forwarding (destination IP address lookup)?

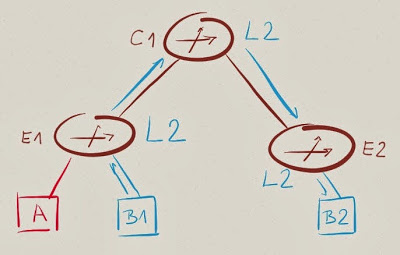

Intra-subnet example first: B1 sends IP traffic to B2. To do so, it must encapsulate the IP datagram into a MAC frame. What destination MAC address will B1 use?

The destination MAC address B1 uses is the one it received in the ARP reply for B2's IP address, usually the MAC address of B2. The packet from B1 to B2 is thus sent with destination MAC address MAC-B2, and the switches along the path (E1, C1, E2) perform L2 forwarding based on that destination MAC address. To make that work, E1, C1 and E2 must all belong to VLAN-B.

Now for the inter-subnet example: A sends IP traffic to B2. A knows B2 is in a different subnet and uses its IP routing table to find the IP next hop. Hosts usually have a default route pointing to a default gateway (GW-A, the VARP/VRRP IP address shared by E1 and E2), so A sends the IP packet toward GW-A using the MAC address associated with GW-A. The GW-A MAC address is shared between E1 and E2; E1 thus receives the packet and performs L3 forwarding.
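The host-side decision in both examples (ARP for the destination itself when it's on-link, ARP for the default gateway otherwise) can be sketched in a few lines of Python. This is only an illustration: the IP addresses, the `MAC-A2` neighbor and the `next_hop_mac` helper are made up for the example and don't come from any real host stack.

```python
import ipaddress

def next_hop_mac(src_if, dst_ip, default_gw, arp_table):
    """Return the destination MAC address a host would put into the frame."""
    dst = ipaddress.ip_address(dst_ip)
    # On-link destination: use the ARP entry of the destination itself.
    # Off-link destination: use the ARP entry of the default gateway.
    target = dst if dst in src_if.network else ipaddress.ip_address(default_gw)
    return arp_table[str(target)]

# Hypothetical addressing: host A lives in 10.0.1.0/24, GW-A is 10.0.1.1
host_a = ipaddress.ip_interface("10.0.1.10/24")
arp_a = {"10.0.1.1": "MAC-GW-A", "10.0.1.11": "MAC-A2"}

# B2 (assumed to be 10.0.2.20) is off-link, so A uses the gateway MAC
print(next_hop_mac(host_a, "10.0.2.20", "10.0.1.1", arp_a))
# A neighbor in the same subnet is reached with its own MAC address
print(next_hop_mac(host_a, "10.0.1.11", "10.0.1.1", arp_a))
```

The switches never see this decision directly; all they see is the destination MAC address the host chose.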

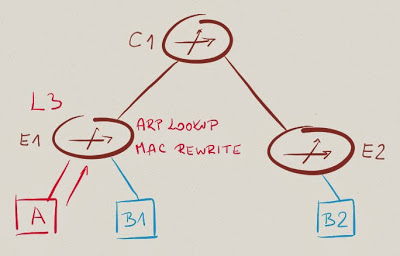

As part of the L3 forwarding process, E1 decrements the IP TTL and rewrites the MAC header. The destination MAC address in the new MAC header is the MAC address associated with the new IP next hop, in our case B2 (remember: E1 forwarded the IP packet from A into subnet B). Subsequent switches in the forwarding path (C1 and E2) have to perform L2 forwarding based on the destination MAC address.

Conclusions: E1 must have an ARP entry for B2 (or it wouldn’t know the MAC address of B2) and L2 connectivity to B2 (or it wouldn’t be able to send traffic to B2’s MAC address).

E1 (the ingress switch) thus performs L3 (inter-subnet) forwarding based on the destination IP address, while all the other switches in the forwarding path perform L2 (intra-subnet) forwarding based on the destination MAC address. Every VLAN must therefore span all edge and core switches.
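Here's a rough Python model of the per-switch decision described above. It's a sketch under simplifying assumptions, not how any vendor implements it: the destination is assumed to sit in a directly connected subnet, and the `Frame` class, the `MAC-GW-B` name, the port names and the IP address of B2 are all hypothetical.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Frame:
    dst_mac: str
    dst_ip: str
    ttl: int

# Shared gateway MAC addresses (MAC-GW-B is an assumed name for subnet B)
GW_MACS = {"MAC-GW-A", "MAC-GW-B"}

def forward(frame, arp_table, mac_table):
    """Return (action, frame, output port) for a single switch hop."""
    if frame.dst_mac in GW_MACS:
        # L3 forwarding: destination IP lookup, TTL decrement, MAC rewrite.
        # Fails with KeyError if the switch has no ARP entry for the host.
        new_mac = arp_table[frame.dst_ip]
        frame = replace(frame, dst_mac=new_mac, ttl=frame.ttl - 1)
        action = "L3"
    else:
        # Frame is not addressed to the gateway: plain L2 forwarding.
        action = "L2"
    # Either way the output port comes from the MAC address table.
    return action, frame, mac_table[frame.dst_mac]

# A sends a packet to B2 (assumed to be 10.0.2.20) via GW-A
e1_arp = {"10.0.2.20": "MAC-B2"}
e1_mac = {"MAC-B2": "uplink-to-C1"}
c1_mac = {"MAC-B2": "port-to-E2"}

frame = Frame(dst_mac="MAC-GW-A", dst_ip="10.0.2.20", ttl=64)
action1, frame1, port1 = forward(frame, e1_arp, e1_mac)   # E1: L3 forwarding
action2, frame2, port2 = forward(frame1, {}, c1_mac)      # C1: L2 forwarding
print(action1, frame1, port1)
print(action2, frame2, port2)
```

Note that C1 gets by with an empty ARP table in this model, while E1 needs both the ARP entry and the MAC table entry for B2.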

Scalability Limitations of Optimal Layer-3 Forwarding

Now that we know all the dirty details, it’s easy to figure out the scalability limitations of optimal L3 forwarding:

- Every VLAN must span all edge and core switches in the routing domain. The whole routing domain thus becomes a single failure domain with well-known scalability issues;

- Every edge switch must know MAC addresses of all active IP hosts;

- Every edge switch must have ARP entries for all active IP hosts.

Furthermore, if you’re using traditional configuration mechanisms (with each edge switch being an independently configurable device), each edge switch needs an IP address in every subnet. With 50 switches in your network, you’d waste a fifth of each /24 IPv4 prefix just for the switch addresses (you do know that wouldn’t be an issue with IPv6, don’t you?).
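The back-of-the-envelope math behind "a fifth" is easy to verify. The snippet below assumes one address per switch per subnet and uses a documentation prefix (192.0.2.0/24) as a stand-in; the `switch_address_share` helper is just an illustration.

```python
import ipaddress

def switch_address_share(prefix, switches):
    """Fraction of usable host addresses consumed by per-switch addresses."""
    net = ipaddress.ip_network(prefix)
    usable = net.num_addresses - 2   # minus network and broadcast addresses
    return switches / usable

share = switch_address_share("192.0.2.0/24", 50)
print(f"{share:.1%}")  # 19.7%
```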

More Dirty Details

You got the ARP entries detail from the previous section, didn’t you? Whenever you’re evaluating an architecture with optimal L3 forwarding (QFabric comes to mind), check the number of ARP entries (aka IPv4 host routes) supported by the edge switches. QFX3500 supports 8000 ARP entries, as does QFX3600. Arista 7150 is way better with 64K next hop entries. Draw your own conclusions.
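If you want to turn that check into something repeatable, a trivial sketch like the one below would do. The table sizes are the ones quoted above (with 64K assumed to mean 64 × 1024, and next hop entries treated as ARP entries); the host counts per subnet are made up.

```python
# ARP / next hop table sizes quoted in the text (64K assumed to be 64 * 1024)
ARP_CAPACITY = {
    "QFX3500": 8000,
    "QFX3600": 8000,
    "Arista 7150": 64 * 1024,
}

def switches_that_fit(hosts_per_subnet):
    """Every edge switch needs an ARP entry for every active IP host."""
    needed = sum(hosts_per_subnet.values())
    return {name: capacity >= needed for name, capacity in ARP_CAPACITY.items()}

# Hypothetical data center with 9000 active hosts in two subnets
fit = switches_that_fit({"A": 5000, "B": 4000})
print(fit)
```

The point of summing across all subnets is the one made in the previous section: with optimal L3 forwarding the edge switch needs entries for all active hosts, not just the locally attached ones.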

Need more information? You’ll find switch table sizes (and numerous other details) for most data center switches from the 10 most popular vendors in the Data Center Fabric Architectures webinar.

QFabric probably has some sort of ARP distribution protocol (most likely yet another MP-BGP address family).

- I am not a fan of building large L2 networks, but even small L2 networks may need to be distributed. Size and distribution are not necessarily related.

- ARP and MAC address limits, yes, but with recent hardware those limits are fine for just about everyone. And there is a difference between having an address available on a switch and having it actively installed in forwarding hardware.

As for the IP addressing for optimized or distributed routing, it does not have to be that way. By sheer coincidence (honestly) my blog post for this coming Thursday touches on this very same topic and explains at a high level how Plexxi tackles distributed routing.

-Marten

@martent1999

Plexxi

nice summary :) In terms of scale and leveraging fabric routing you don't need L2 everywhere when you use our host routing, IP mobility feature (into foreign subnets). That is an option to avoid stretched, large L2 domains.

In terms of IP address consumption - that is true, but if you have an enterprise data center and eventually an EoR design that number is still pretty low.

If you want to go beyond - I wrote a blog about another upcoming solution for that problem ... http://blogs.enterasys.com/routing-as-a-service-does-this-become-automatically-raas/

Regards

Markus

I just did another, deeper review - fabric routing would not require these two:

- Every edge switch must know MAC addresses of all active IP hosts;

- Every edge switch must have ARP entries for all active IP hosts.

Every edge switch needs to know the MAC addresses and ARP entries of the locally attached IP hosts, but as the switch then routes the traffic (based on its routing table) to the destination network, there is no need for it to know all of them.

Regards.

It will probably have MAC addresses of all hosts (due to dynamic MAC learning), but not necessarily all ARP addresses. Would you agree?

Kind regards, Ivan