Redundant Data Center Internet Connectivity – Problem Overview

During one of my ExpertExpress consulting engagements I encountered an interesting challenge:

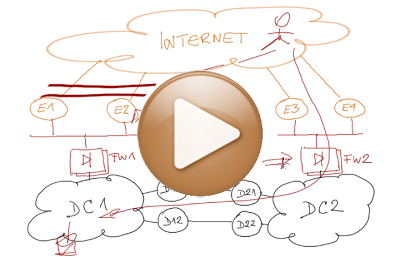

We have a network with two data centers (connected with a DCI link). How could we ensure the applications in a data center stay reachable even if all local Internet links fail?

On the face of it, the problem seems trivial; after all, you already have the DCI link in place, so what’s the big deal? However, we quickly figured out the problem is trickier than it seems.

In the following short video I explain what the problem is and what a potential solution might look like. You'll find more details here.

Related Blog Posts

Todd Hoff wrote a great in-depth commentary on this video that you absolutely have to read.

And here are a few other relevant blog posts:

- High-level design document in ipSpace solutions corner

- Distributed firewalls – how badly do you want to fail?

- Long-distance vMotion for disaster avoidance? Do the math first!

- Is layer-3 DCI safe?

- Stretched layer-2 subnets – the server engineer perspective

- Layer-2 DCI and the infinite wisdom of ACM Queue

If you had TCP timeout issues before, you'll still have them in the Internet link failure scenario.

I challenge the need for dual Internet connectivity per DC that comes with non-stretched firewalls (I have read the article on stretched firewalls).

A failure of both Internet links should be as rare as a failure of both routers. And if both routers fail, stretched firewalls are the solution.

In the proposed design, I would still use the WAN backbone for both private and public networks, and achieve something close to load balancing of the traffic (perhaps leveraging load balancers here?) across the Internet links located in each DC by using a stretched firewall cluster.

As for "links fail as rarely as routers", I can only refer you to Section 2.4 of RFC 1925. You might also want to consider that a link usually traverses more than one device ;), not to mention the potential impact of "cable finders" (aka backhoes).

See also http://blog.ioshints.info/2012/10/if-something-can-fail-it-will.html
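To put rough numbers on the "a link traverses more than one device" argument, here is a minimal back-of-the-envelope sketch. All availability figures and the number of components in the link path are hypothetical values picked for illustration, not measurements, and the model assumes independent failures (which a backhoe cutting two fibers in the same duct would happily violate).

```python
from math import prod

def series_availability(components):
    """Availability of a path that works only if every component in it works."""
    return prod(components)

# Hypothetical per-component availability, for illustration only.
router = 0.9999

# Assumption: an Internet link depends on five components in series
# (for example CPE, access circuit, provider devices, fiber span).
link_path = series_availability([0.9999] * 5)

# Probability that both redundant elements are down at the same time,
# assuming the two failures are independent.
both_routers_down = (1 - router) ** 2
both_links_down = (1 - link_path) ** 2

print(f"single link path availability: {link_path:.6f}")
print(f"both routers down: {both_routers_down:.2e}")
print(f"both links down:   {both_links_down:.2e}")
```

Even when every component is as reliable as the router, the chained link path is less available than a single router, so losing both links comes out more likely than losing both routers under these assumptions.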

However, you do have to run pretty big data centers to need a dedicated "outside" link. In that case, it might be better to go for full-blown ISP design and do optimal IBGP routing on the outside as well.