Category: VXLAN

netlab VXLAN Router-on-a-Stick Example

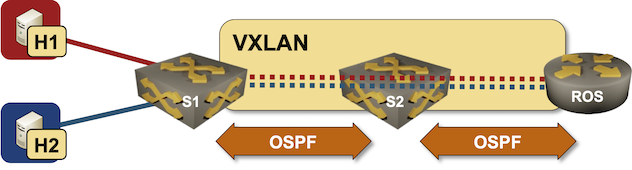

In October 2022 I described how you could build a VLAN router-on-a-stick topology with netlab. With the new features added in netlab release 1.4.1 we can do the same for VXLAN-enabled VLANs – we’ll build a lab where a router-on-a-stick will do VXLAN-to-VXLAN routing.

Lab topology

… updated on Thursday, November 10, 2022 07:58 UTC

Using EVPN/VXLAN with MLAG Clusters

There’s no better way to start this blog post than with a widespread myth: we don’t need MLAG now that most vendors have implemented EVPN multihoming.

TL&DR: This myth is close to the not even wrong category.

As we discussed in the MLAG System Overview blog post, every MLAG implementation needs at least three functional components:

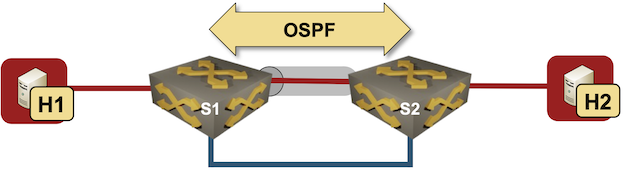

Use VRFs for VXLAN-Enabled VLANs

I started one of my VXLAN tests with a simple setup – two switches connecting two hosts over a VXLAN-enabled (gray tunnel) red VLAN. The switches are connected with a single blue link.

Test lab

I configured VLANs and VXLANs, and started OSPF on S1 and S2 to get connectivity between their loopback interfaces. Here’s the configuration of one of the Arista cEOS switches:

Worth Reading: VXLAN Drops Large Packets

Ian Nightingale published an interesting story of connectivity problems he had in a VXLAN-based campus network. TL&DR: it’s always the MTU (unless it’s DNS or BGP).

The really fun part: even though large L2 segments might have magical properties (according to vendor fluff), there’s no host-to-network communication in transparent bridging, so there’s absolutely no way that the ingress VTEP could tell the host that the packet is too big. In a layer-3 network you have at least a fighting chance…
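The arithmetic behind that failure mode can be sketched in a few lines. This is a minimal illustration, not taken from Ian's article: it assumes an IPv4 underlay, untagged inner frames, no IP options, and VTEPs that drop rather than fragment oversized packets. The function names are made up for this example.

```python
# Header sizes in bytes. Assumptions: IPv4 underlay, no 802.1Q tag on the
# inner frame, no IP options. An IPv6 underlay adds 20 more bytes.
INNER_ETHERNET = 14   # inner Ethernet header carried inside VXLAN
VXLAN_HEADER = 8
UDP_HEADER = 8
OUTER_IPV4 = 20

# Everything the ingress VTEP adds in front of the host's IP packet,
# counted against the underlay IP MTU.
ENCAP_OVERHEAD = INNER_ETHERNET + VXLAN_HEADER + UDP_HEADER + OUTER_IPV4


def required_underlay_mtu(host_ip_mtu: int) -> int:
    """Smallest underlay IP MTU that carries a host IP packet of this size."""
    return host_ip_mtu + ENCAP_OVERHEAD


def max_host_mtu(underlay_ip_mtu: int) -> int:
    """Largest host IP packet that fits into the given underlay IP MTU."""
    return underlay_ip_mtu - ENCAP_OVERHEAD


def is_dropped(host_packet_size: int, underlay_ip_mtu: int) -> bool:
    """True when the encapsulated packet exceeds the underlay MTU.

    Assumes the VTEP does not fragment; the host gets no error message
    because a transparent bridge has no way to send one.
    """
    return required_underlay_mtu(host_packet_size) > underlay_ip_mtu


if __name__ == "__main__":
    print(required_underlay_mtu(1500))  # underlay MTU needed for 1500-byte hosts
    print(max_host_mtu(1500))           # host MTU that fits a 1500-byte underlay
    print(is_dropped(1500, 1500))       # full-size packet over a default underlay
```

With these assumptions, hosts using the default 1500-byte MTU need an underlay IP MTU of at least 1550 bytes. Small packets (ping, DNS, TCP handshakes) work fine over a 1500-byte underlay, while full-size packets disappear without a trace.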

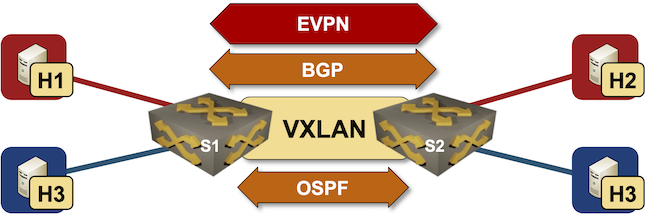

netlab EVPN/VXLAN Bridging Example

netlab release 1.3 introduced support for VXLAN transport with static ingress replication and EVPN control plane. Last week we replaced a VLAN trunk with VXLAN transport; now we’ll replace static ingress replication with EVPN control plane.

Lab topology

Worth Reading: EVPN/VXLAN with FRR on Linux Hosts

Jeroen Van Bemmel created another interesting netlab topology: EVPN/VXLAN between an SR Linux fabric and FRR on Linux hosts, based on his work implementing VRFs, VXLAN, and EVPN on FRR in netlab release 1.3.1.

Bonus point: he also described how to do multi-vendor interoperability testing with netlab.

If only he weren’t publishing his articles on a platform that’s almost as user-data-craving as Google.

… updated on Wednesday, September 28, 2022 17:22 UTC

Combining MLAG Clusters with VXLAN Fabric

In the previous MLAG Deep Dive blog posts, we discussed the innards of a standalone MLAG cluster. Now let’s see what happens when we connect such a cluster to a VXLAN fabric – we’ll use our standard MLAG topology and add a VXLAN transport underlay to it with another switch connected to the other end of the underlay network.

MLAG cluster connected to a VXLAN fabric

Repost: On the Viability of EVPN

Jordi left an interesting comment on my EVPN/VXLAN or Bridged Data Center Fabrics blog post discussing the viability of using VXLAN and EVPN at a time when equipment lead times can exceed 12 months. Here it is:

Interesting article Ivan. Another major problem I see for EVPN is the incompatibility between vendors, even though it is an open standard. With today’s crazy switch delivery times, we want a multi-vendor solution like BGP or LACP, but EVPN (due to vendors) isn’t ready for a multi-vendor production network fabric.

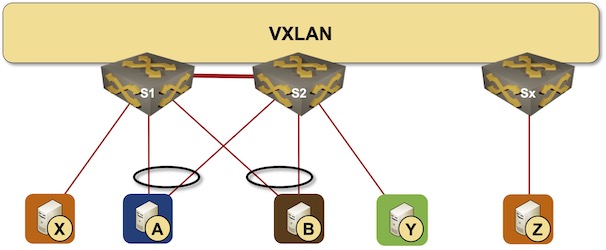

netlab VXLAN Bridging Example

netlab release 1.3 introduced support for VXLAN transport with static ingress replication. Time to check how easy it is to replace a VLAN trunk with VXLAN transport. We’ll use the lab topology from the VLAN trunking example, replace the VLAN trunk between S1 and S2 with an IP underlay network, and transport Ethernet frames across that network with VXLAN.

Lab topology

VXLAN-to-VXLAN Bridging in DCI Environments

Almost exactly a decade ago I wrote that VXLAN isn’t a data center interconnect technology. That’s still true, but you can make it a bit better with EVPN – at the very minimum you’ll get an ARP proxy and anycast gateway. Even this combo does not address the other requirements I listed a decade ago, but maybe I’m too demanding and good enough works well enough.

However, there is one other bit that was missing from most VXLAN implementations: LAN-to-WAN VXLAN-to-VXLAN bridging. Sounds weird? Supposedly a picture is worth a thousand words, so here we go.