Category: IPv6

IPv6 Addressing on Point-to-Point Links

One of my readers sent me this question:

In your observations on IPv6 assignments, what are common point-to-point IPv6 interfaces on routers? I know it always depends, but I’m hearing /64, /112, /126 and these opinions are causing some passionate debate.

(Checks the calendar) It’s 2023, IPv6 RFC has been published almost 25 years ago, and there are still people debating this stuff and confusing those who want to deploy IPv6? No wonder we’re not getting it deployed in enterprise networks ;)

First Steps in IPv6 Deployments

Even though IPv6 could buy its own beer (in US, let alone rest of the world), networking engineers still struggle with its deployment – one of the first questions I got in the ipSpace.net Design Clinic was:

We have been tasked to start IPv6 planning. Can we discuss (for enterprises like us who all of the sudden want IPv6) which design paths to take?

I did my best to answer this question and describe the basics of creating an IPv6 addressing plan. For even more details, watch the IPv6 webinars (most of them at least a few years old, but nothing changed in the IPv6 world in the meantime apart from the SRv6 madness).

Design Clinic: Small-Site IPv6 Multihoming

I decided to stop caring about IPv6 when the protocol became old enough to buy its own beer (now even in US), but its second-system effects keep coming back to haunt us. Here’s a question I got for the February 2023 ipSpace.net Design Clinic:

How can we do IPv6 networking in a small/medium enterprise if we’re using multiple ISPs and don’t have our own IPv6 Provider Independent IPv6 allocation. I’ve brainstormed this with people far more knowledgeable than me on IPv6, and listened to IPv6 Buzz episodes discussing it, but I still can’t figure it out.

… updated on Tuesday, June 13, 2023 16:00 UTC

State of LDPv6 and 6PE

One of my readers successfully deployed LDPv6 in their production network:

We are using LDPv6 since we started using MPLS with IPv6 because I was used to OSPF/OSPFv3 in dual-stack deployments, and it simply worked.

Not everyone seems to be sharing his enthusiasm:

Now some consultants tell me that they know no-one else that is using LDPv6. According to them “everyone” is using 6PE and the future of LDPv6 is not certain.

Video: IPv6 Traffic Filtering Details

Did you like the traffic filtering in the age of IPv6 video by Christopher Werny? Time for part two: IPv6 traffic filtering details.

SRv6 as a Host-to-Host Overlay

During the discussion of the On Applicability of MPLS Segment Routing (SR-MPLS) blog post on LinkedIn someone made an off-the-cuff remark that…

SRv6 as an host2host overlay - in some cases not a bad idea

It’s probably just my myopic view, but I fail to see the above idea as anything else but another tiny chapter in the “Solution in Search of a Problem” SRv6 saga1.

Video: Traffic Filtering in the Age of IPv6

Christopher Werny covered another interesting IPv6 security topic in the hands-on part of IPv6 security webinar: traffic filtering in the age of dual-stack and IPv6-only networks, including filtering extension headers, filters on Internet uplinks, ICMPv6 filters, and address space filters.

Video: Testing IPv6 RA Guard

After discussing rogue IPv6 RA challenges and the million ways one can circumvent IPv6 RA guard with IPv6 extension headers, Christopher Werny focused on practical aspects of this thorny topic: how can we test IPv6 RA Guard implementations and how good are they?

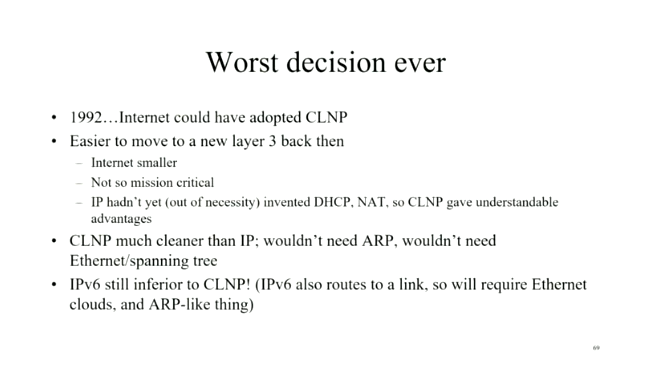

Was IPv6 Really the Worst Decision Ever?

A few weeks ago, Daniel Dib tweeted a slide from Radia Perlman’s presentation in which she claimed IPv6 was the worst decision ever as we could have adopted CLNP in 1992. I had similar thoughts on the topic a few years ago, and over tons of discussions, blog posts, and creating the How Networks Really Work webinar slowly realized it wouldn’t have mattered.

Worth Reading: Is IPv6 Faster Than IPv4?

In a recent blog post, Donal O Duibhir claims IPv6 is faster than IPv4… 39% of the time, which at a quick glance makes as much sense as “60% of the time it works every time”. The real reason for his claim is that there was no difference between IPv4 and IPv6 in ~30% of the measurements.

Unfortunately he measured only the Wi-Fi part of the connection (until the first-hop gateway); I hope he’ll keep going and measure response times from well-connected dual-stack sites like Google’s public DNS servers.

Video: IPv6 RA Guard and Extension Headers

Last week’s IPv6 security video introduced the rogue IPv6 RA challenges and the usual countermeasure – RA guard. Unfortunately, IPv6 tends to be a wonderfully extensible protocol, creating all sorts of opportunities for nefarious actors and security researchers.

For years, the networking vendors were furiously trying to plug the holes created by the academically minded IPv6 designers in love with fragmented extension headers. In the meantime, security researches had absolutely no problem finding yet another weird combination of IPv6 headers that would bypass any IPv6 RA guard implementation until IETF gave up and admitted one cannot have “infinitely extensible” and “secure” in the same sentence.

For more details watch the video by Christopher Werny describing how one could use IPv6 extension headers to circumvent IPv6 RA guard

Video: Rogue IPv6 RA Challenges

IPv6 security-focused presentations were usually an awesome opportunity to lean back and enjoy another round of whack-a-mole, often starting with an attacker using IPv6 Router Advertisements to divert traffic (see also: getting bored at Brussels airport) .

Rogue IPv6 RA challenges and the corresponding countermeasures are thus a mandatory part of any IPv6 security training, and Christopher Werny did a great job describing them in IPv6 security webinar.

IPv6 Unique Local Addresses (ULA) Made Useless

Recent news from the Department of Unintended Consequences: RFC 6724 changed the IPv4/IPv6 source/destination address selection rules a decade ago, and it seems that the common interpretation of those rules makes IPv6 Unique Local Addresses (ULA) less preferred than the IPv4 addresses, at least according to the recent Unintended Operational Issues With ULA draft by Nick Buraglio, Chris Cummings and Russ White.

End result: If you use only ULA addresses in your dual-stack network1, IPv6 won’t be used at all. Even worse, if you use ULA addresses together with global IPv6 addresses (GUA) as a fallback mechanism, there might be hidden gotchas that you won’t discover until you turn off IPv4. Looks like someone did a Truly Great Job, and ULA stands for Useless Local Addresses.

Video: Practical Aspects of IPv6 Security

Christopher Werny has tons of hands-on experience with IPv6 security (or lack thereof), and described some of his findings in the Practical Aspects of IPv6 Security part of IPv6 security webinar, including:

- Impact of dual-stack networks

- Security implications of IPv6 address planning

- Isolation on routing layer and strict filtering

- IPv6-related requirements for Internet- or MPLS uplinks

OMG: Hop-by-Hop Path MTU Discovery

Straight from the “Bad Ideas Never Die” (see also RFC 1925 Rule 11) department: Geoff Huston described a proposal to use hop-by-hop IPv6 extension headers to implement Path MTU Discovery. In his words:

It is a rare situation when you can create an outcome from two somewhat broken technologies where the outcome is not also broken.

IETF should put rules in place similar to the ones used by the patent office (Thou Shalt Not Patent Perpetual Motion Machine), but unfortunately we’re way past that point. Back to Geoff:

It appears that the IETF has decided that volume is far easier to achieve than quality. These days, what the IETF is generating as RFCs is pretty much what the IETF accused the OSI folk of producing back then: Nothing more than voluminous paperware about vapourware!