The Lack of Historic Knowledge Is so Frustrating

Whenever I explain the intricacies of new technologies to networking engineers, I try to use analogies with older, well-known technologies to make the architectural constraints of the shiny new stuff easier to grasp.

Unfortunately, most engineers younger than ~35 years have no idea what I’m talking about – all they know are Ethernet, IP and MPLS.

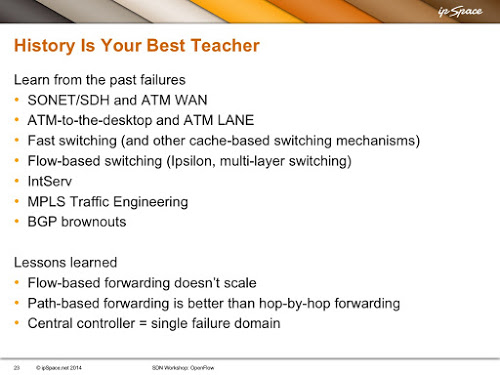

Just to give you an example – here’s a slide from my SDN workshop. Anyone familiar with the technologies I listed on the slide immediately grasps the limitations of various SDN approaches.

How many people still remember those technologies? They are dead for a good reason, but unless we know what the reason is, we keep reinventing them after a decade or two.

Want a few more examples? A while ago I was trying to explain the principles of SAN and the differences between FC and FCoE to a networking engineer. I started with B2B credits in FC and told him Fibre Channel uses exactly the same approach as the hop-by-hop windows we had in X.25, with the same results… and got a confused blank stare. Further down the conversation I said FCoE uses lossless Ethernet, which relies on PAUSE frames, which are exactly the same thing as Ctrl-S and Ctrl-Q (XOFF/XON) on an async link. Same result.
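For readers who never met X.25 windows or XON/XOFF, here is a minimal Python sketch of the two mechanisms behind the analogy, assuming a single link, a receiver with a fixed number of frame buffers, and no propagation delay. The class names, buffer sizes and the `drain` step are hypothetical simplifications, not a model of any real FC, X.25 or DCB implementation. In the credit scheme the sender may transmit only while it holds credits and the receiver returns one credit per freed buffer (Fibre Channel uses the R_RDY primitive for that); in the stop/start scheme the receiver tells the sender to stop when its buffer crosses a high-water mark and to resume once it drains.

```python
from collections import deque

XOFF = "\x13"  # Ctrl-S: "stop sending"
XON = "\x11"   # Ctrl-Q: "resume sending"


class CreditLink:
    """Hop-by-hop credit-based flow control (the B2B credit / X.25 window idea)."""

    def __init__(self, buffers: int):
        self.credits = buffers   # sender starts with one credit per receiver buffer
        self.rx_queue = deque()  # frames currently held in receiver buffers

    def send(self, frame) -> bool:
        if self.credits == 0:
            return False         # no credit: the sender waits, nothing is dropped
        self.credits -= 1
        self.rx_queue.append(frame)
        return True

    def drain(self, n: int = 1) -> None:
        # Receiver forwards up to n frames and returns one credit per freed buffer
        for _ in range(min(n, len(self.rx_queue))):
            self.rx_queue.popleft()
            self.credits += 1


class PauseLink:
    """Stop/start flow control (XON/XOFF, conceptually like Ethernet PAUSE)."""

    def __init__(self, buffers: int, high_water: int):
        self.buffers = buffers
        self.high_water = high_water
        self.rx_queue = deque()
        self.paused = False
        self.signals = []        # control characters the receiver has sent

    def send(self, frame) -> bool:
        if self.paused:
            return False
        if len(self.rx_queue) >= self.buffers:
            return False         # defensive: would overflow if high_water > buffers
        self.rx_queue.append(frame)
        if len(self.rx_queue) >= self.high_water:
            self.paused = True
            self.signals.append(XOFF)
        return True

    def drain(self, n: int = 1) -> None:
        for _ in range(min(n, len(self.rx_queue))):
            self.rx_queue.popleft()
        if self.paused and len(self.rx_queue) < self.high_water:
            self.paused = False
            self.signals.append(XON)
```

With `CreditLink(buffers=3)`, the first three `send()` calls succeed and the next ones fail until `drain()` returns a credit. A real stop/start receiver has to raise XOFF early enough to absorb frames already in flight, which this delay-free sketch deliberately ignores.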

In short, the lack of historical knowledge I see in younger engineers is depressing, and many SDN ideas and even products make it obvious that “Those who fail to learn from history are doomed to repeat it”. Unfortunately, I simply don’t know how to fix it; it’s so hard to motivate young people to learn about seemingly irrelevant stuff. Any ideas are highly appreciated.

However, there is something you can do: always ask why we do things the way we do them, and try to understand the fundamental principles instead of focusing on the intricacies of configuration commands. Study old technologies and try to grasp why we no longer use them, because RFC 1925 section 2.11 (every old idea will be proposed again with a different name and a different presentation, regardless of whether it works) remains as relevant as ever.

While I've never touched DECnet, AppleTalk or IPX (except on a home PC), I try to stay aware of what existed in the past. Even I can see that history is repeating itself and that many SDN startups are repeating the most basic mistakes of the past...

(PSTN is still more reliable than 3G for out-of-band (OOB) access, assuming you test it often; anybody got a good automated test script?)

Now that I think about it, I started with ATDP :D... and I'm positive there's an AT command set hidden within every 3G modem (in the good old days you could use it to send SMS messages).

George Bernard Shaw

I thought MPLS TE did the right things? It aggregates small flows into very big flows. The tunnels are set up only once, not dynamically based on traffic. You configure tunnels only where you need them, not everywhere in the network. It got deployed. So why did it fail? Did people stop using it? Is it too complex to configure? To troubleshoot? Not worth the effort? Curious.

The real problem of MPLS TE is that it's nigh impossible to do it right without central visibility. Here's a pretty good talk outlining the challenges: https://ripe64.ripe.net/archives/video/23/

Also, see the knapsack problem (which, when you ask the right question, is NP-complete even in a non-distributed form).

What you (probably) wanted to say is that we have good-enough approximations that get results in reasonable time.
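To illustrate the exact-versus-good-enough distinction, here is a hedged Python sketch that treats admitting tunnel bandwidth reservations onto a single link as a 0/1 knapsack problem. That framing, the demand values and both function names are hypothetical; real TE path computation spans many links and is considerably harder. The dynamic-programming solver is exact but its cost grows with the link capacity expressed in bandwidth units, while the greedy heuristic is fast but can leave capacity unused.

```python
def knapsack_exact(capacity: int, demands: list[tuple[int, int]]) -> int:
    """Exact 0/1 knapsack via dynamic programming.

    demands: (bandwidth, value) pairs with positive integer bandwidth.
    Returns the best total value that fits within capacity.
    Runtime is O(len(demands) * capacity), i.e. pseudo-polynomial.
    """
    best = [0] * (capacity + 1)
    for bw, value in demands:
        # Walk capacity downwards so each demand is admitted at most once
        for c in range(capacity, bw - 1, -1):
            best[c] = max(best[c], best[c - bw] + value)
    return best[capacity]


def knapsack_greedy(capacity: int, demands: list[tuple[int, int]]) -> int:
    """Greedy heuristic: admit demands in order of value per unit of bandwidth."""
    total, used = 0, 0
    for bw, value in sorted(demands, key=lambda d: d[1] / d[0], reverse=True):
        if used + bw <= capacity:
            used += bw
            total += value
    return total
```

With a capacity of 10 and demands `[(6, 30), (5, 20), (5, 20)]`, the greedy pass grabs the best-ratio demand first and ends up with a value of 30, while the exact solver finds the two smaller demands worth 40.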

You either invest in learning about the old failures, or learn the hard way why that old stuff failed (without ever realizing the significance of that failure) when the shiny new stuff breaks down ;)

http://www.daedtech.com/tag/expert-beginner

Make it the base of each roadmap, and include it in the "free" tier as well.

(This, of course, assumes it's not already there and I missed it :-/ )

Got the message ;)... and no, it's not there yet.

Best,

Ivan

I can't tell you how much I "appreciate" anonymous comments with vague unsubstantiated claims based on misunderstanding what I wrote or implying what I didn't write.

In any case, should you decide to use your real name and focus on facts, we could have a nice civilized discussion.

Kind regards,

Ivan

Just because something failed commercially doesn't necessarily mean that the lesson we should learn from that failure is that the technique itself was flawed. I could probably form cogent arguments against each of your lessons learned, explaining why that lesson should be unlearned, if I were in the mood to be a cantankerous old man.

We could easily start listing great ideas, from CLNS per-host addressing (I still think it was a good idea) and the OSI session layer (see http://blog.ipspace.net/2009/08/what-went-wrong-tcpip-lacks-session.html) to the VAX/VMS operating system, Digital's Rdb database or the Banyan VINES directory service... but that wasn't the point.

I am positive that all things I listed on that slide deserve to be there, and I could add a few more (like X.25). Some of them were successful because they solved problems we don't have anymore (very high error rate) or problems people thought needed solving (ATM QoS).

In any case, we should understand what their shortcomings were, so that when someone tries to reinvent the technologies we don't have to rediscover the shortcomings. Agreed?

As for the "lessons learned", I would love to hear counter-arguments.

Kind regards,

Ivan

Lessons learned... I think that #1 and #3 would be essentially semantic arguments that, while they might make for a wonderful conversation at the bar, are not terribly interesting on the Internet (define "scale" in flow-based systems, for example). So let's talk about #2.

There are semantics in play here as well, but I submit that the most evident reason for hop-by-hop failures I have encountered is the complexity of device administration and information security rather than any inherent technical shortcoming in the _idea_ that all the information needed for forwarding is carried within the packet. Following instructions in a label or header is simple; making sure that the rules about what that label or header _means_ to the follower are consistent and trustworthy is hard.

So, while there is a long and not particularly distinguished list of failed attempts to do this, the ones that I can think of failed because we weren't ready with the security and administration apparatus, not because they didn't actually work well or scale well.

And, on the flip side, by using path based forwarding to defer dealing with those security and administration shortfalls we just kicked the can down the road for a generation.

Now we have examples of how to do ad-hoc tamper prevention and hyper-scale distributed node administration in real-world situations, so it is perhaps reasonable to expect that you could, for example, actually use IPv6+AH+hop-by-hop options and have it work. That is something of an ideal-world argument: in practice it wouldn't work at all, because we've all set our devices to ignore options. We can barely agree on how to propagate routes in a trustworthy and non-destructive manner, much less on how to trust the rich end of the IP header.

My point is that calling one better than the other is subjective when the "better" one created a culture so afraid of a problem that it rejects the solution.

e.g. Apple's Newton -> Apple iPhone would be one

This produces swarms of "vendor symbiotes", which fit nicely into the vendors' marketing strategy. In the end, if this is mostly what is available for hire, it is a "fait accompli".

Having come from the days of running IPX, IP, DECnet, LAT, VINES, 3270 terminals, Frame Relay, ATM, etc. all on the same network, I find it entertaining to deal with the symbiotes.

Not sure if there is a single version of the technology failure history;)

The situation with networking technologies today is highly analogous to the situation with automobile mechanics: only a tiny, shrinking percentage of aging practitioners actually understand how the stuff works, and the rest are just break/fix 'technicians' who aren't called to a vocation but are rather schlepping through a job.

Some fundamental ideas are the same in many areas of Computer Science (QoS vs. batch job handling in the mainframe days, just to cite Comer's podcast).

I believe in smart people: every time I have a difficult problem to solve, I simply approach a very smart person and ask for help. Many times that person does not know the particular area I am working on. And it works; he or she can help just by thinking differently (seeing bits instead of addresses, citing Comer again).

Some people are simply bright. Some know a lot (including history) and have an advantage over others. They are not always the same people. We need both.

You can learn the history (although the industry does not care whether a previous attempt to use the idea was a failure), but it's difficult to fight the limits of the brain.

The tradeoff is that it raises the learning complexity, so it increases effort and time (more compute) :)

I come from the world of X.21, X.25, PDH, SDH and WDM, and I kind of like that very rigid world of rules, even after many years of IP and routers.

In the old days we tested a fully cascade-wired STM-16 (~2.5 Gbps) system for 2-3 weeks, kicking it, slamming the doors, jerking fibers, cables, connectors, etc. Not a single bit error was accepted.
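To put "not a single bit error" into numbers, here is a small Python sketch, assuming the test traffic kept the STM-16 line (2488.32 Mbit/s) fully loaded for the whole run and that bit errors are independent. The helper names are hypothetical, and the bound uses the statistical "rule of three" (zero events in N trials gives an upper bound of roughly 3/N at about 95% confidence), not anything from the original test procedure.

```python
STM16_BPS = 2_488_320_000  # STM-16 line rate in bit/s (~2.5 Gbps)
SECONDS_PER_DAY = 86_400


def bits_transferred(days: float, rate_bps: int = STM16_BPS) -> float:
    """Total bits carried at full line rate over the given number of days."""
    return rate_bps * days * SECONDS_PER_DAY


def ber_upper_bound(bits: float) -> float:
    """Approximate 95% upper confidence bound on BER after zero observed errors.

    Rule of three: with 0 errors in N bits, BER < 3 / N (approximately).
    """
    return 3 / bits
```

Under those assumptions, two weeks at line rate is about 3 × 10^15 bits, so an error-free run suggests a BER below roughly 1 × 10^-15; ten pings cannot say anything comparable.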

Now we send 10 pings ... only 2 dropped? Perfect job!!

What will the international network world look like in 20-30 years? I somehow believe in the upcoming SDN philosophy: centralized control and configuration with an end-to-end perspective.

No need for each L3 device to make its own decisions.

Could we imagine Tier-1 providers having "major" SDN policies instructing Tier-2 SDN controllers, which in turn instruct Tier-3 SDN controllers, and so on?

Just thinking... good old hierarchical and efficient thinking applied to IP traffic?

& every now & again I feel a certain sense of pride when I'm called to implement or troubleshoot ATM or Frame Relay because no one else knows how to do it :-)

If you believe that new is always better (bad marketing hype), then why bother remembering the past? It's not only protocols, btw. In some firms it's also how they manage information about N-1 and N-2 products.