Data Center Protocols in HP Switches

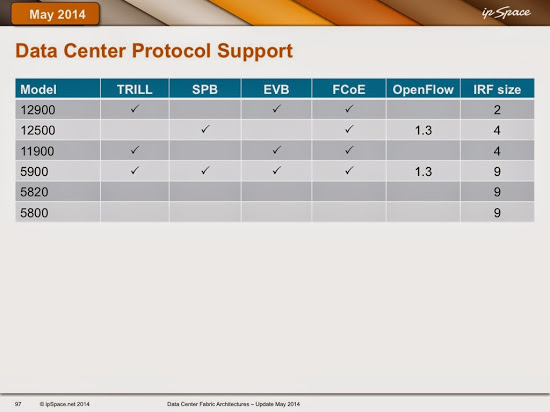

HP representatives made some pretty bold claims during Networking Tech Field Day 1, including “our switches will support EVB, FCoE, SPB and TRILL.” It took them three years to deliver on those promises (and the hardware they had at that time doesn’t support all the features they promised), but their current protocol coverage is impressive.

A few comments:

- 5900 and 5920 seem to be the only ToR switches HP really invests in. They are also the only true 10GE ToR switches in HP’s portfolio;

- It looks like they didn’t manage to implement TRILL on the 12500. TRILL requires a new encapsulation scheme, while SPB uses 802.1ad (Q-in-Q) or 802.1ah (MAC-in-MAC), both of which have been available in merchant silicon for years;

- I’m baffled by the lack of EVB on the 12500. After all, EVB is a pure control-plane technology (the data plane uses 802.1Q or 802.1ad). On the other hand, the 12500 is not exactly an edge switch, so one wouldn’t expect to see hypervisor hosts connected directly to it;

- It seems HP decided TRILL is the way to go, and implemented SPB just to keep the existing owners of 12500 switches happy. The newer switches (12900 and 11900) don’t support SPB, even though I’m positive their silicon supports 802.1ah (it’s hard to get merchant silicon that doesn’t support 802.1ah these days);

- 5900 supports both SPB and TRILL, so it can work with the old and the new core switches;

- Don’t expect to add new core switches to an existing 12500 core – mixing SPB and TRILL in a single L2 fabric would be great fun (and fantastic job security).

One important thing that should be mentioned is that HP devices in SPB or TRILL mode cannot route traffic. Your design has to include additional devices for routing between VLANs. In one of my projects I had to give up on using either of these technologies. Another thing I don't like about HP's solution is that it has no anycast FHRP. Their extended VRRP works almost like GLBP: different VRRP gateways use different MAC addresses.

About HP VRRP load-balancing mode: http://networkgeekstuff.com/networking/h3c-proprietary-vrrp-load-balancing/

A5900: MPLS/VPLS support

A5800: MPLS/VPLS support, no OpenFlow at all

A5820: no MPLS/VPLS, no OpenFlow at all

A5500-HI: MPLS/VPLS support, OpenFlow 1.3

A5500-EI: no MPLS/VPLS support, but OpenFlow 1.3
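The list above is easier to query as a small lookup table. The sketch below simply transcribes it into Python; `FEATURES` and `models_with` are illustrative names made up for this example, and `None` marks the one case (OpenFlow on the A5900) where the list says nothing either way.

```python
from typing import Dict, List, Optional

# Feature matrix transcribed from the list above.
# True/False = stated in the list, None = not mentioned there.
FEATURES: Dict[str, Dict[str, Optional[bool]]] = {
    "A5900":    {"mpls_vpls": True,  "openflow": None},
    "A5800":    {"mpls_vpls": True,  "openflow": False},
    "A5820":    {"mpls_vpls": False, "openflow": False},
    "A5500-HI": {"mpls_vpls": True,  "openflow": True},
    "A5500-EI": {"mpls_vpls": False, "openflow": True},
}


def models_with(**required: bool) -> List[str]:
    """Return the models whose listed features match every requested value.

    A feature recorded as None (unknown) never matches True or False.
    """
    return [
        model
        for model, feats in FEATURES.items()
        if all(feats.get(name) is value for name, value in required.items())
    ]


if __name__ == "__main__":
    print(models_with(mpls_vpls=True, openflow=True))
    print(models_with(mpls_vpls=True))
```

Treating "not mentioned" as its own value keeps the table honest: the A5900 shows up when you ask for MPLS/VPLS, but not when you ask about OpenFlow in either direction.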

But back to the 5900: if I configure TRILL, can I no longer do routing on the other interfaces?

In terms of solution offerings, HP offers SPB and TRILL according to the IETF and IEEE standards. I don't see a problem in terms of which platform supports which protocol; the protocols are supported. If you want SPB, go for the 12500; if you want TRILL, go for the 12900.

As for anycast VRRP, HP solutions support VRRPe, which allows for load balancing. And there is an even better solution: IRF, a stacking technology that is consistent throughout the whole HP Comware product portfolio.

With regards to SPB support, any switch that supports QinQ (MacinMac) can potentially support SPB. This is the case for the 11900 and 12900. Whether it is going to be supported is dependent on customer requirements. Because code is unified (Comware 7) it is not a big deal to build the features into other platforms.

Rest assured that HP has a complete and compelling product and solution portfolio with a huge range of functionality, protocol support and features.

First of all, thanks for confirming that there really is an L2-only limitation in HP's SPB/TRILL deployments.

As for VRRP in IRF, we both know that IRF has the same limitations as any other shared-control-plane architecture, and supports a limited number of core bridges in an IRF cluster.

Finally, based on your last paragraph, I would guess that you work for HP or one of its partners, in which case it would be fair to everyone else to disclose it.

Kind regards,

Ivan

With regards to Ales' response, the reason for the limitation is the chipset (Trident II), so all vendors that use this chipset have the same issue. The main reason for this limitation is that an incoming packet can be processed only once by the ASIC. This means that if TRILL encapsulation has to take place, the TRILL-encapsulated packet leaves the ASIC and L3 processing cannot be done anymore by that ASIC. Some vendors solve this by sacrificing other ASICs or by doing traffic redirection or physical loopbacks. At this moment this is also HP's solution. As said, HP is working on another solution that removes these requirements, so that you can run L2/L3 within the fabric on the standard hardware. The solution will most likely be a software upgrade only.
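The single-pass argument can be reduced to a toy model. The sketch below only restates the reasoning in this comment; it is not a description of how Trident II is actually programmed, and `asic_passes` and `forwarding_plan` are hypothetical names invented for the illustration.

```python
def asic_passes(needs_trill_encap: bool, needs_l3_lookup: bool) -> int:
    """Toy model: passes a packet needs through a single-pass ASIC.

    Assumption taken from the comment above: one pass can do either the
    L3 lookup or the TRILL encapsulation, but not both.
    """
    return 2 if (needs_trill_encap and needs_l3_lookup) else 1


def forwarding_plan(needs_trill_encap: bool, needs_l3_lookup: bool) -> str:
    """Describe what the switch has to do under that assumption."""
    if asic_passes(needs_trill_encap, needs_l3_lookup) == 1:
        return "single pass"
    # Routed traffic that must enter the TRILL fabric needs another trip:
    # a loopback, a redirect to another ASIC, or an external routing device.
    return "second pass required (loopback, redirect or external router)"


if __name__ == "__main__":
    for trill, l3 in [(True, False), (False, True), (True, True)]:
        print(f"TRILL={trill!s:5} L3={l3!s:5} -> {forwarding_plan(trill, l3)}")
```

Only the routed-and-then-encapsulated case needs the extra trip, which is why bridging within a VLAN across the fabric is unaffected.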

With IRF you can already build some pretty high-density cores. For example, on the 12518 you can build a four-switch IRF providing 4 x 18 x 48 = 3456 10 Gbps ports (subtract some for IRF). Although IRF does have a master/slave relationship for the control plane, each management module is fully synchronized with the master, so if the master fails there is an instant failover. In addition to that, IRF is a fully distributed L3 architecture, so each device in the IRF takes care of its own routing on the ASIC. So, I would not compare IRF with any other shared-control-plane architecture like VPC or VSS.
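The port count quoted here is plain multiplication, and the "subtract some for IRF" part is easy to make explicit. In this sketch `irf_port_count` and its `irf_ports_reserved` parameter are hypothetical; the comment gives no figure for how many ports the IRF links consume, so the reserved value in the second call is only a placeholder.

```python
def irf_port_count(chassis: int, slots_per_chassis: int, ports_per_card: int,
                   irf_ports_reserved: int = 0) -> int:
    """Usable ports in an IRF cluster: chassis x slots x ports, minus IRF links."""
    total = chassis * slots_per_chassis * ports_per_card
    if not 0 <= irf_ports_reserved <= total:
        raise ValueError("irf_ports_reserved must be between 0 and the total")
    return total - irf_ports_reserved


if __name__ == "__main__":
    # Figures from the comment: 4 chassis, 18 slots, 48 ports per card.
    print(irf_port_count(4, 18, 48))                         # 3456
    # Placeholder: 16 ports set aside for IRF links (made-up number).
    print(irf_port_count(4, 18, 48, irf_ports_reserved=16))  # 3440
```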

The other good thing is that you can still combine IRF with fabric technologies like SPB or TRILL. That allows you to create huge fabrics consisting of different IRFs.

And yes, I work for HP. This means that I cannot share all information, but in general I can say that if there is a demand for specific functionality HP can build it. I think the main question is whether there is actually a need for all these fabric technologies in the data center. I can imagine that in some situations there is, but we should not consider these technologies the holy grail and deploy them just for the sake of it.

It's all about listening to what the customer really needs. I know this is a cliche, but unfortunately I see customers making things overcomplicated all the time.

Just my 2 cents.

To my understanding, the limitation is that a port can be either a TRILL port without IP routing or a routed port without TRILL. This limitation should be common to all other vendors (e.g. Cisco Nexus 5672-UP) relying on Broadcom's Trident II chipset in their boxes. HP seems to be able to do routing via loopback interface(s), but that may not be the recommended way. The recommendation is to use a routing leaf/ToR.

Wouldn't it be great if a leaf switch could decide at ingress whether a packet is an L2 (TRILL) or an L3 packet?

Not sure what Broadcom can do on TRILL today (recent chipsets can do RIOT with VXLAN), but the original 5900 definitely couldn't do it.

-More limitations?

=> http://www.h3c.com/portal/download.do?id=1824805

"The forwarding capabilities of TRILL for these packets on the S5820V2 & S5830V2 Switch Series are restricted by the following resources:

• In an IRF fabric formed by S5820V2 & S5830V2 Switch Series, both TRILL-encapsulated packets and non-TRILL-encapsulated packets must pass through the IRF port when the following conditions exist:

  - The IRF fabric receives these packets and uses TRILL to forward these packets.

  - These packets need to be forwarded through both a TRILL trunk port and a TRILL access port.

  As a result, the total rate of these TRILL packets cannot exceed half the bandwidth of the IRF port.

• These packets processed by TRILL must be processed by the internal loopback interface. As a result, the total rate of these packets cannot exceed the bandwidth of the internal loopback interface.

Limited by the two resources above, the forwarding capabilities of TRILL for these packets are as follows:

• On S5820V2-52QF and S5820V2-52Q switches, the total rate of these packets cannot exceed 4 Gbps.

• On S5830V2-24S and S5820V2-54QS-GE switches, the total rate of these packets cannot exceed 8 Gbps."
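Read literally, the quoted text describes two ceilings and the lower one wins: half the IRF port bandwidth (when the traffic has to cross the IRF port) and the internal loopback bandwidth. A minimal sketch of that reading follows; `trill_rate_cap_gbps` is a hypothetical helper, and the bandwidth figures in the example calls are made up, since the quote does not say which IRF-port or loopback bandwidths lie behind the 4 Gbps and 8 Gbps limits.

```python
def trill_rate_cap_gbps(irf_port_gbps: float, loopback_gbps: float,
                        crosses_irf: bool = True) -> float:
    """Upper bound on the rate of the affected TRILL traffic, per the quoted text.

    - Traffic crossing the IRF port is limited to half the IRF port bandwidth.
    - All of it is limited by the internal loopback interface bandwidth.
    """
    if irf_port_gbps < 0 or loopback_gbps < 0:
        raise ValueError("bandwidth values must be non-negative")
    if crosses_irf:
        return min(irf_port_gbps / 2, loopback_gbps)
    return loopback_gbps


if __name__ == "__main__":
    # Made-up inputs, chosen only to show each ceiling taking effect.
    print(trill_rate_cap_gbps(20.0, 8.0))   # loopback is the bottleneck
    print(trill_rate_cap_gbps(8.0, 10.0))   # half of the IRF port is the bottleneck
```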

It seems that Avaya is the only vendor that does L2 and L3 on their SPB switches. But they have a proprietary - excuse me: pre-standard - approach. How would you rate Avaya in comparison to HP?

HP reps told me before the project to use SPB, so they are indeed recommending it.