Category: networking fundamentals

Integrated Routing and Bridging (IRB) Design Models

Imagine you built a layer-2 fabric with tons of VLANs stretched all over the place. Now the users want to exchange traffic between those VLANs, and the obvious question is: which devices should do layer-2 forwarding (bridging) and which ones should do layer-3 forwarding (routing)?

There are four typical designs you can use to solve that challenge:

- Exchange traffic between VLANs outside of the fabric (edge routing)

- Route on core switches (centralized routing)

- Route on ingress (asymmetric IRB)

- Route on ingress and egress (symmetric IRB)

This blog post is an overview of the design models; we’ll cover each design in a separate blog post.

Multihoming Cannot Be Solved within a Network

Henk made an interesting comment that finally triggered me to organize my thoughts about network-level host multihoming1:

The problems I see with routing are: [hard stuff], host multihoming, [even more hard stuff]. To solve some of those, we should have true identifier/locator separation. Not an after-thought like LISP, but something built into the layer-3 addressing architecture.

Proponents of various clean-slate (RINA) and pimp-my-Internet (LISP) approaches are quick to point out how their solution solves multihoming. I might be missing something, but it seems like that problem cannot be solved within the network.

Video: Routing Protocols Overview

After discussing network addressing and switching, routing, and bridging in the How Networks Really Work webinar, it was high time for a deep dive into routing protocols, starting (as always) with an overview.

Video: Bridging Beyond Spanning Tree

In this week’s update of the Data Center Infrastructure for Networking Engineers webinar, we talked about VLANs, VRFs, and modern data center fabrics.

Those videos are available with Standard or Expert ipSpace.net Subscription; if you’re still sitting on the fence, you might want to watch the how networks really work version of the same topic that’s available with Free Subscription – it describes the principles-of-operation of bridging fabrics that don’t use STP (TRILL, SPBM, VXLAN, EVPN)

Linux Networking Data Plane Configuration

I spent a rainy day implementing VLANs, VRFs, and VXLAN on Cumulus Linux VX and came to “appreciate” the beauties of Linux networking configuration.

TL&DR: It sucks

There are two major ways of configuring data plane constructs (interfaces, port channels, VLANs, VRFs) on Linux:

The Basics of Network Address Translation (NAT)

The last video in the 2-hour-long Network Addressing part of How Networks Really Work discusses Network Address Translation.

After watching it, you might want to spend some extra quality time (with a bit of soap opera vibe) enjoying the recent Dual ISP deployment operational issues and uncertainties thread on the v6ops mailing list with a “surprising” result: NPTv6 or NAT66 is the least horrible way to do it.

VLAN Interfaces and Subinterfaces

Early bridges implemented a single bridging domain across all ports. Within a few years, we got multiple bridging domains within a single device (including bridging implementation in Cisco IOS). The capability to have multiple bridging domains stretched across several devices was still missing… until the modern-day Pandora opened the VLAN box and forever swamped us in the complexities of large-scale bridging.

From Bits to Application Data

Long long time ago, Daniel Dib started an interesting Twitter discussion with this seemingly simple question:

How does a switch/router know from the bits it has received which layer each bit belongs to? Assume a switch received 01010101, how would it know which bits belong to the data link layer, which to the network layer and so on.

As is often the case, Peter Paluch provided an excellent answer in a Twitter thread, and allowed me to save it for posterity.

How Routers Became Bridges

Network terminology was easy in the 1980s: bridges forwarded frames between Ethernet segments based on MAC addresses, and routers forwarded network layer packets between network segments. That nirvana couldn’t last long; eventually, a big-enough customer told Cisco: “I don’t want to buy another box if I already have your too-expensive router. I want your router to be a bridge.”

Turning a router into a bridge is easier than going the other way round1: add MAC table and dynamic MAC learning, and spend an evening implementing STP.

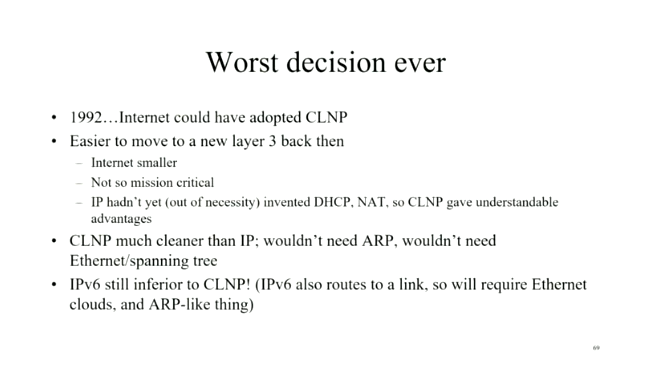

Was IPv6 Really the Worst Decision Ever?

A few weeks ago, Daniel Dib tweeted a slide from Radia Perlman’s presentation in which she claimed IPv6 was the worst decision ever as we could have adopted CLNP in 1992. I had similar thoughts on the topic a few years ago, and over tons of discussions, blog posts, and creating the How Networks Really Work webinar slowly realized it wouldn’t have mattered.

Router Interfaces and Switch Ports

When I started implementing the netlab VLAN module, I encountered (at least) three different ways of configuring physical interfaces and bridging domains even though the underlying packet forwarding operations (and sometimes even the forwarding hardware) are the same. That confusopoly is guaranteed to make your head spin for years, and the only way to figure out what’s going on behind the scenes is to go back to the fundamentals.

When You Find Yourself on Mount Stupid

The early October 2021 Facebook outage generated a predictable phenomenon – couch epidemiologists became experts in little-known Bridging the Gap Protocol (BGP), including its Introvert and Extrovert variants. Unfortunately, I also witnessed several unexpected trips to Mount Stupid by people who should have known better.

To set the record straight: everyone’s been there, and the more vocal you tend to be on social media (including mailing lists), the more probable it is that you’ll take a wrong turn and end there. What matters is how gracefully you descend and what you’ve learned on the way back.

Video: Network Address Scopes

When defining network addresses in IEN 19 John Shoch said:

Addresses must, therefore, be meaningful throughout the domain, and must be drawn from some uniform address space.

But what is a domain? Welcome to the address scope discussion ;)

Video: Network Address Assignments

The last part of the Network Addressing section of How Networks Really Work webinar covered other addressing-related topics starting with address assignment mechanisms.

Video: Combining Data-Link- and Network Layer Addresses

The previous videos in the How Networks Really Work webinar described some interesting details of data-link layer addresses and network layer addresses. Now for the final bit: how do we map an adjacent network address into a per-interface data link layer address?

If you answered ARP (or ND if you happen to be of IPv6 persuasion) you’re absolutely right… but is that the only way? Watch the Combining Data-Link- and Network Addresses video to find out.