Does FCoE need QCN (802.1Qau)?

One of the recurring religious FCoE-related debates of the last few months is undoubtedly “do you need QCN to run FCoE”, with Cisco adamantly claiming you don’t (hint: Nexus doesn’t support it) and HP claiming you do (hint: their switch software lacks an FC stack) ... and then there’s this recent announcement from Brocade (more about it in a future post). As is usually the case, Cisco and HP are both right ... depending on how you design your multi-hop FCoE network.

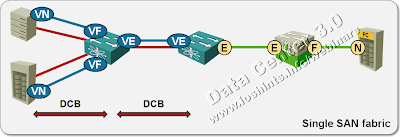

According to Cisco, every major switch in your network should also be an FCoE Forwarder (FCF; FC functionality somewhat equivalent to a router) running a full FC stack, having its own FC domain ID and participating in FSPF. There’s no bridging in this scenario. FC frames are routed hop-by-hop and QCN is simply not applicable (it works only within a single bridged domain). The fact that NX-OS has an FC stack and that Nexus hardware does not support QCN might have biased the design a bit.

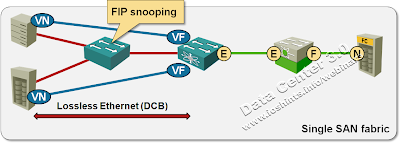

Another design favored by Cisco uses a single DCB-enabled access switch (Nexus 4000, for example) between the hosts and FCFs. QCN might be marginally beneficial in this scenario, but as traditional FC networks have worked quite well with BB_credits, PFC will probably do a decent job here as well.

In this design, the access switch has to support PFC (ETS is recommended) but not QCN. Cisco is also telling you the access switch simply has to have FIP snooping. Nothing to do with the fact that Cisco has generic snooping hardware and some other vendors don’t.

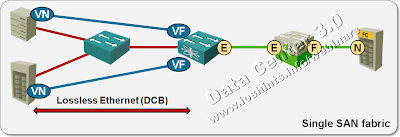

The vendors that don’t have an FC stack in their core and aggregation switches usually keep mum about FCoE. When prodded, they might tell you it’s a legacy migration technology and you should really be using iSCSI or NFS, or they claim they’ll support it once QCN chips are available, because you really need QCN for multi-hop FCoE ... and they’re also right. If you have FCoE ENodes and FCFs at the edges of a large LAN (with a lot of DCB-enabled aggregation/core switches in the middle), it’s quite possible to get congestion somewhere in the core, and QCN is the only thing that can help you.
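The three designs boil down to one question: how many DCB bridges sit in a row between FC-aware devices? The following Python sketch is only an illustration of that reasoning, not anything a vendor ships. The names `Hop`, `longest_bridged_segment` and `qcn_verdict` are made up for this example, and the cut-off of "two or more consecutive bridges means QCN is needed" is an assumption standing in for "a lot of switches in the middle".

```python
from enum import Enum


class Hop(Enum):
    """Hypothetical classification of a switch on the path from ENode to storage."""
    FCF = "fcf"        # FCoE Forwarder: runs an FC stack and ends the bridged domain
    BRIDGE = "bridge"  # DCB-enabled Ethernet switch without an FC stack


def longest_bridged_segment(path):
    """Return the largest number of consecutive DCB bridges on the path."""
    longest = current = 0
    for hop in path:
        if hop is Hop.BRIDGE:
            current += 1
            longest = max(longest, current)
        else:
            # An FCF routes FC frames, so the bridged domain ends here.
            current = 0
    return longest


def qcn_verdict(path):
    """Rough verdict on QCN for a path, following the three designs above.

    The threshold of two bridges is an assumption made for illustration.
    """
    bridges = longest_bridged_segment(path)
    if bridges == 0:
        return "not applicable"  # every hop is an FCF, nothing is bridged
    if bridges == 1:
        return "marginal"        # single access switch, PFC should cope
    return "needed"              # congestion can build up inside the bridged core


if __name__ == "__main__":
    designs = {
        "FCF everywhere": [Hop.FCF, Hop.FCF, Hop.FCF],
        "single DCB access switch": [Hop.BRIDGE, Hop.FCF],
        "FCoE on the edges": [Hop.BRIDGE, Hop.BRIDGE, Hop.BRIDGE, Hop.FCF],
    }
    for name, path in designs.items():
        print(f"{name}: QCN {qcn_verdict(path)}")
```

The sketch counts bridges per segment rather than per path because an FCF in the middle splits the path into separate bridged domains, and QCN never crosses that boundary.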

Continuing the same debate, Cisco will be quick to point out that the FCoE-on-the-edges design loses all the traffic engineering capabilities, most of the security and the multipath separation of traditional FC networks ... and so we’re going around in circles. Maybe it’s not a bad idea to be cautious for the next few years, use FCoE only as a feeder technology into a legacy FC network and prepare to migrate to iSCSI or NFS.

More information

- Joe Onisick wrote a great article “What’s the deal with Quantized Congestion Notification (QCN)”.

- You can enjoy vendor bantering in the comments to the “When are FCoE standards done” post on the Cisco Blog (or you could follow @bradhedlund on Twitter; he’s quick to jump into QCN/FCoE debates).

- The Data Center 3.0 for Networking Engineers webinar (buy a recording or yearly subscription) will give you a comprehensive overview of numerous Data Center technologies, including FCoE, multi-hop FCoE and DCB (PFC, ETS and QCN).

http://www.networkcomputing.com/data-networking-management/brocade-first-to-market-with-native-end-to-end-fcoe.php

FCoE = Buggy Whips.

A technology so mind-bogglingly complicated that marketing people can argue over competing claims and all be correct.

Customers and end users should see FCoE for what it is - a product that allows vendors to overcharge for a supposedly new technology while perfectly good technology is already available in NFS or iSCSI.

Storage people should not be deceived by posturing from Brocade and Cisco. They should research the technology and realise that FCoE is a dead-end technology, useful for legacy migration only.