Does a Cloud Orchestration System Need an Underlying SDN Controller?

A while ago I had an interesting discussion with a fellow SDN explorer, in which I came to the conclusion that it makes no sense to insert an overlay virtual networking SDN controller between a cloud orchestration system and virtual switches. As always, I missed an important piece of the puzzle: federation of cloud instances.

2014-11-04 16:48Z: CJ Williams sent me an email with information on the SDN controller in the upcoming Windows Server release. Thank you!

To Control or Not To Control?

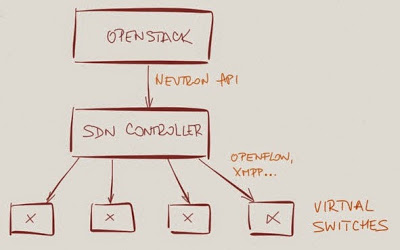

Most overlay virtual networking solutions use a network controller (mandatorily called SDN controller in these days of SDN hype) that interacts with the cloud orchestration system through an API (example: Neutron API).

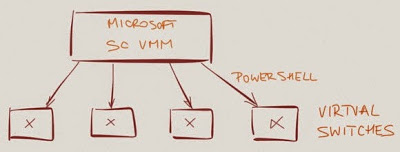

Microsoft is the only exception I’m aware of: their cloud orchestration system installs forwarding information directly into the Hyper-V extensible virtual switches using PowerShell cmdlets.

Microsoft is adding SDN controller functionality in the upcoming Windows Server release. See Next Generation Networking in the Next Release of Windows Server and other videos from TechEd Europe 2014 for more details.

There might be use cases where one needs a dedicated SDN controller (example: NEC’s ProgrammableFlow controller manages flows across physical and virtual switches), but unless one seeks a tight physical-to-virtual integration (which is not exactly a good idea), the SDN controller seems superfluous. After all, all we need to do is to install forwarding information (MAC-to-VTEP, IP-to-VTEP, ARP and IP routing entries) into the soft switches.
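To make "install forwarding information" a bit more concrete, here is a minimal sketch of the per-hypervisor state a cloud orchestration system could compute from what it already knows. The class name, the function name, and the tuple layout are all made up for illustration; no real product uses this exact structure.

```python
from dataclasses import dataclass, field


@dataclass
class SoftSwitchTables:
    """Hypothetical forwarding state for one tenant segment on one hypervisor."""
    mac_to_vtep: dict = field(default_factory=dict)  # remote MAC -> VTEP IP
    ip_to_vtep: dict = field(default_factory=dict)   # remote IP  -> VTEP IP
    arp: dict = field(default_factory=dict)          # IP -> MAC (local ARP replies)
    routes: dict = field(default_factory=dict)       # prefix -> next hop


def build_tables(endpoints, local_vtep, routes=None):
    """endpoints: (mac, ip, vtep) tuples known to the orchestration system."""
    tables = SoftSwitchTables(routes=dict(routes or {}))
    for mac, ip, vtep in endpoints:
        tables.arp[ip] = mac
        if vtep != local_vtep:
            # Only remote endpoints need an encapsulation target.
            tables.mac_to_vtep[mac] = vtep
            tables.ip_to_vtep[ip] = vtep
    return tables


endpoints = [
    ("52:54:00:00:00:01", "10.0.0.1", "192.0.2.11"),
    ("52:54:00:00:00:02", "10.0.0.2", "192.0.2.12"),
]
tables = build_tables(
    endpoints,
    local_vtep="192.0.2.11",
    routes={"0.0.0.0/0": "10.0.0.254"},
)
```

Everything in those tables comes from data the orchestration system already has (VM placement, MAC and IP addresses, tenant subnets), which is why a separate controller looks optional at this point.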

Looking at a bigger picture, we don’t have dedicated compute or storage controllers either (unless some marketer with too much time on his hands decides to rebrand vCenter), and while it’s true that virtual networking remains more complex than storage or compute virtualization, I still wasn’t persuaded that there’s a good reason to introduce a dedicated network controller.

What about hybrid clouds?

A cloud orchestration system is supposed to be the ultimate source of truth: it knows all about virtual machines (or containers), their IP and MAC addresses, tenant subnets, security rules, and maybe even load balancing rules.

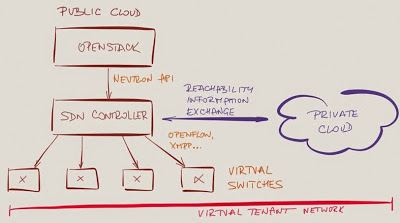

This paradigm starts breaking down (at least on the virtual network layer) in hybrid cloud environments – virtual machines managed by a cloud orchestration system have to communicate with non-managed endpoints in the same tenant network. Wouldn’t it be great to have an SDN controller that would communicate with tenant networks and exchange reachability information with them?

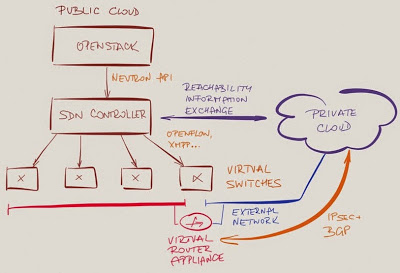

Not necessarily – think about the security implications of having your SDN controller talking directly with the customers. Several vendors avoid this particular can of worms by running a routing instance as a virtual appliance in the tenant space.

Hyper-V uses default routing toward that appliance (a quick-and-efficient solution that introduces a single point of failure), while the VMware NSX Edge Services Router exchanges routing information with the NSX controller, which then distributes it across virtual switches. In both cases, the tenants have no direct access to the SDN controller or cloud orchestration system.
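The difference between the two approaches shows up in the routing table the virtual switch ends up with. The sketch below is a plain longest-prefix-match lookup over two invented tables; the prefixes and next-hop labels are assumptions for illustration and do not reflect the actual Hyper-V or NSX data structures.

```python
import ipaddress


def next_hop(dst_ip, routes):
    """Longest-prefix match over a {prefix: next_hop} dictionary."""
    dst = ipaddress.ip_address(dst_ip)
    best = None
    for prefix, hop in routes.items():
        net = ipaddress.ip_network(prefix)
        if dst in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, hop)
    return best[1] if best else None


# Default routing: everything outside the tenant subnet goes to one appliance.
default_only = {"10.0.0.0/24": "local", "0.0.0.0/0": "appliance"}

# Exchanged routes: only prefixes learned from the tenant side are reachable,
# and they can point to different appliances.
exchanged = {
    "10.0.0.0/24": "local",
    "172.16.0.0/16": "appliance-a",
    "172.17.0.0/16": "appliance-b",
}

via_default = next_hop("172.16.5.9", default_only)
via_learned = next_hop("172.17.1.1", exchanged)
unknown = next_hop("203.0.113.7", exchanged)
```

With the default route, losing the single appliance blackholes all external traffic; with exchanged routes, the table can steer each prefix to whichever appliance advertised it.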

Conclusion: there’s no pressing need to introduce an SDN controller to support hybrid clouds.

Federated clouds

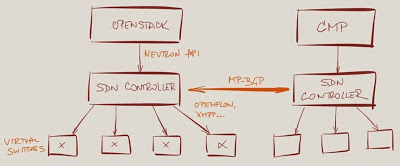

There is another use case where a cloud orchestration system is not good enough: federated cloud instances of the same private or public cloud. These instances have to have end-to-end reachability information to implement virtual tenant networks spanning more than one availability zone or region, and because they implicitly trust each other it makes perfect sense to implement this requirement with network controllers running some sort of distributed eventually consistent reachability information dissemination scheme (also known as a routing protocol).
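As a toy illustration of what "eventually consistent reachability dissemination" means, the sketch below lets two cloud instances exchange their endpoint tables until nothing changes. It is not a routing protocol: the sequence numbers, the table layout, and the `merge` function are assumptions made for this example only.

```python
def merge(local, received):
    """Merge advertised entries into the local table.

    Tables map endpoint IP -> (VTEP, sequence number); the entry with the
    higher sequence number wins. Returns True if anything changed.
    """
    changed = False
    for endpoint, (vtep, seq) in received.items():
        if endpoint not in local or local[endpoint][1] < seq:
            local[endpoint] = (vtep, seq)
            changed = True
    return changed


# Two federated instances with partial (and partly stale) views.
zone_a = {"10.0.0.1": ("192.0.2.11", 1)}
zone_b = {
    "10.0.0.2": ("198.51.100.21", 1),
    "10.0.0.1": ("192.0.2.99", 0),  # stale entry, lower sequence number
}

# Keep exchanging tables in both directions until neither side changes.
# The non-short-circuit "|" makes sure both merges run in every round.
while merge(zone_a, zone_b) | merge(zone_b, zone_a):
    pass
```

After the loop both instances hold the same table, and the stale entry in `zone_b` has been replaced by the newer one from `zone_a`. A real routing protocol adds session management, withdrawals, loop prevention and policy on top of this basic idea.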

Smart overlay virtual networking vendors focusing on large-scale deployments quickly realized the benefits of reusing existing field-tested protocols: Nuage Networks VSP, Juniper Contrail and recently Cisco Nexus 1000V use MP-BGP (VPNv4 or EVPN address families) to distribute reachability information.

VMware NSX and Microsoft Hyper-V currently have no equivalent solution. Their networking solutions are limited to a single cloud instance and rely on tenant-space appliances to stretch virtual networks across multiple instances – an architecture functionally equivalent to MPLS/VPN Inter-AS Option A (and we all know how well that one scales).

Layering and Packaging

Finally, there’s another reason to interpose an SDN controller between the cloud orchestration system and virtual switches: product packaging. It’s easier to package overlay virtual networking functionality into a standalone controller that interacts with multiple cloud orchestration systems through a thin layer of glue (networking plugins, for example Neutron plugins).
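The "thin layer of glue" could look something like the sketch below: the controller exposes one small interface, and each orchestration system gets its own adapter that translates its events into that interface. All class and method names are hypothetical, and the port dictionary is a simplified assumption loosely modeled on what an orchestration system would report, not the real Neutron plugin API.

```python
from typing import Protocol


class OverlayController(Protocol):
    """Hypothetical northbound interface of a standalone controller."""

    def attach_endpoint(self, segment: str, mac: str, ip: str, host: str) -> None:
        ...


class RecordingController:
    """Stand-in controller that just records what it was asked to do."""

    def __init__(self):
        self.calls = []

    def attach_endpoint(self, segment, mac, ip, host):
        self.calls.append((segment, mac, ip, host))


class NeutronStyleGlue:
    """Hypothetical adapter for one orchestration system."""

    def __init__(self, controller: OverlayController):
        self.controller = controller

    def port_created(self, port: dict) -> None:
        # Translate one orchestration-side port event into controller calls.
        for fixed_ip in port["fixed_ips"]:
            self.controller.attach_endpoint(
                port["network_id"],
                port["mac_address"],
                fixed_ip["ip_address"],
                port["host"],
            )


controller = RecordingController()
glue = NeutronStyleGlue(controller)
glue.port_created({
    "network_id": "tenant-a-web",
    "mac_address": "52:54:00:00:00:01",
    "fixed_ips": [{"ip_address": "10.0.0.1"}],
    "host": "hv-1",
})
```

Supporting another orchestration system then means writing another small adapter, while the controller itself stays unchanged.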
