Detect short bursts with EEM

Last week I’ve described how you can use EEM to detect long-term interface congestion which could indicate denial-of-service attack. The mechanism I’ve used (the averaged interface load) is pretty slow; using the lowest possible value for the load-interval (30 seconds) it takes almost a minute to detect a DOS attack (see below).

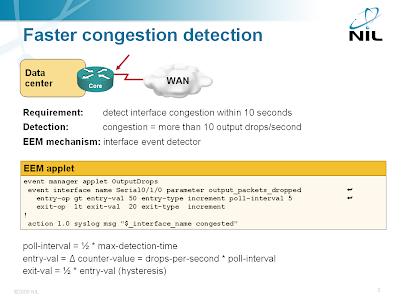

If you want to detect outbound bursts, you can do better: you can monitor the increase in the number of output drops over a short period of time.

Obviously you cannot use this mechanism to detect inbound (potentially DOS-related) floods as the drops occur on the Service Provider’s edge router.

Various polling and averaging options, including in-depth discussion of hysteresis, are covered in the Embedded Event Manager (EEM) workshop. You can attend an online version of the workshop or we can organize a dedicated event for your networking team.

More details: Interface load increases slowly after a flood starts

The interface input/output rates in bps or pps are computed as weighted averages using a sampling interval configured with load-interval command. To measure the delay between the start of a UDP burst and the denial-of-service alert generated by EEM (which is triggered by output bps rate exceeding ~80% of configured interface bandwidth), I’ve configured an access-list that reported the start of the burst and attached it to the outgoing interface:

ip access-list extended UDP

permit udp any any gt 0 log

permit ip any any

!

interface Serial0/1/0

bandwidth 1000

ip address 10.0.1.1 255.255.255.252

ip access-group UDP out

encapsulation ppp

load-interval 30

It took the router almost a minute to report the interface saturation when the EEM applet was triggered by the TXload interface variable:

rtr#

00:27:38: %SEC-6-IPACCESSLOGP: list UDP permitted udp

10.0.0.10(1070) -> 10.0.20.3(5002), 1 packet

00:28:25: %HA_EM-6-LOG: IntOverload: Interface Serial0/1/0

overloaded: txload = 209

The OutputDrops EEM applet which relies on the output_packets_dropped variable detected the flood within 10 seconds.

* You have to monitor an SNMP OID which is hard to construct (the MQC MIB is complex)

* The MIB variables are updated only once every 10 seconds.

See also:

http://wiki.nil.com/Class-based_QoS_MIB_indexes

http://blog.ioshints.info/2008/12/this-is-qos-who-cares-about-real-time.html